Blender Cloud Rendering: How to Render Your Projects on a Render Farm

Overview

Introduction

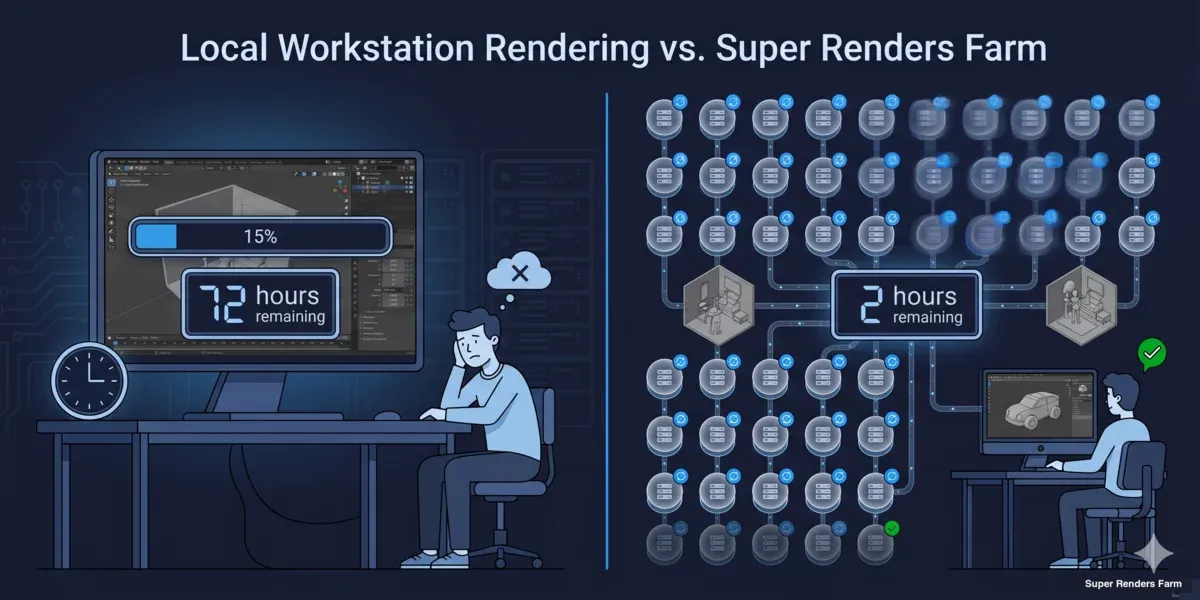

Rendering a complex Blender scene on your own computer means tying up your workstation for hours. It can take days if you are working on an animation or on high-resolution stills with heavy volumetrics. Cloud rendering solves this by distributing the render across dozens to hundreds of machines and returning finished frames while you keep working on the next shot.

We render Blender projects on our farm every day. They range from single architectural stills to 10,000-frame character animations, and the questions artists ask follow the same pattern: how do I prepare the scene, which engines work on a farm, what happens to my textures and add-ons, and what does it actually cost? This guide answers all of them.

Whether you have rendered on a farm before or this is your first time moving off your own machine, the process is the same: prepare the scene, upload it, configure the remote render settings, and download the results. The details of each step are what matter, and they are what this guide covers.

Why Cloud Rendering Suits Blender

Blender is free, but rendering is not: it costs time. A single Cycles frame of an interior scene can take 5 to 15 minutes on a modern desktop GPU. Multiply that by 300 frames and you get 25 to 75 hours of continuous rendering on one machine. That is roughly one to three days of around-the-clock rendering, or three to nine eight-hour working days, during which the workstation cannot be used for modeling, texturing, or lighting.
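The arithmetic behind those figures fits in a few lines of Python. This is a minimal sketch; the frame count and per-frame times are the example values from the paragraph above, so swap in your own.

```python
def local_render_hours(frames: int, minutes_per_frame: float) -> float:
    """Hours needed to render `frames` frames back to back on a single machine."""
    return frames * minutes_per_frame / 60


def as_days(hours: float, hours_per_day: float = 24) -> float:
    """Express render hours as days: 24 h for around-the-clock, 8 h for a working day."""
    return hours / hours_per_day


for minutes in (5, 15):
    hours = local_render_hours(300, minutes)
    print(f"{minutes} min/frame -> {hours:.0f} h = {as_days(hours):.1f} days non-stop "
          f"= {as_days(hours, 8):.1f} eight-hour working days")
```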

A cloud render farm changes that equation:

| Factor | Local rendering | Cloud rendering |
|---|---|---|
| Hardware cost | $2,000–$5,000 upfront (GPU workstation) | Pay per frame or per hour |
| Render time (300 frames) | 25–75 hours | 1–4 hours (distributed) |
| Workstation availability | Tied up while rendering | Free for continued work |
| Scalability | Limited to the hardware you own | Scales to hundreds of nodes |
| Power and cooling | On your electricity bill | Included in the render cost |

Cloud rendering vs local rendering comparison for Blender — time, cost, and scalability
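A rough way to see where a figure like the 1–4 hours in the table comes from: when every node renders whole frames in parallel, the job finishes when the busiest node does. The sketch below assumes equal per-frame times. The node counts and the `overhead_minutes` allowance for upload and queueing are assumptions for illustration, not figures from any particular farm.

```python
import math


def farm_wall_clock_hours(frames: int, minutes_per_frame: float, nodes: int,
                          overhead_minutes: float = 0.0) -> float:
    """Estimate elapsed hours for a job split frame by frame across `nodes` machines.

    overhead_minutes is a hypothetical allowance for upload, queueing and scene load.
    """
    # Leftover frames land on some nodes, so round up: the busiest node sets the finish time.
    frames_on_busiest_node = math.ceil(frames / nodes)
    return (frames_on_busiest_node * minutes_per_frame + overhead_minutes) / 60


# 300 frames at 15 min/frame would take 75 h on one machine.
print(farm_wall_clock_hours(300, 15, nodes=100))                      # 3 frames per node
print(farm_wall_clock_hours(300, 15, nodes=50, overhead_minutes=30))  # 6 frames per node plus overhead
```

Note the rounding up: 301 frames on 100 nodes takes four frame-times rather than three, because one node gets the extra frame.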

Cloud rendering is especially valuable for Blender users because the software itself is free, so your main production cost is hardware or render time. Moving the render step to the cloud keeps the hardware budget low while removing the time bottleneck.

This applies to freelancers working to client deadlines, studios running several projects at once, and students who have the skills but not the hardware. For a fuller comparison of cloud and local rendering, our build vs. cloud total cost analysis has the detailed numbers.

Preparing a Blender Scene for Cloud Rendering

Scene preparation is the most important step. A scene that renders perfectly on your own machine can fail on a farm because of missing external assets, broken paths, or unpacked dependencies.

Pack all external data. Go to File > External Data > Automatically Pack Resources, then save the file. This embeds textures, HDRIs, fonts, and other external files directly in the .blend file. Without it, the farm machines cannot find your textures and the render comes out wrong: pink or grey surfaces, a missing environment, or an outright failure.

Use relative paths. In Edit > Preferences > File Paths, make sure default paths are relative (//textures/ rather than C:\Users\YourName\textures\). Absolute paths pointing to a local drive will not resolve on any other machine.
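Before packing, it can help to list which files would break. Here is a minimal sketch in plain Python. It assumes you have already collected each file's name, path, and packed state; inside Blender those come from `bpy.data.images` (`Image.filepath` and `Image.packed_file`). The `ExternalFile` class and `audit_external_files` function are hypothetical stand-ins so the logic runs anywhere. Blender-relative paths start with `//`. The actual fixing is done by Blender's own operators, such as `bpy.ops.file.make_paths_relative()` and `bpy.ops.file.pack_all()`.

```python
from dataclasses import dataclass


@dataclass
class ExternalFile:
    """Hypothetical stand-in for a Blender datablock that references a file on disk."""
    name: str
    filepath: str     # e.g. Image.filepath inside Blender
    is_packed: bool   # e.g. Image.packed_file is not None


def audit_external_files(files):
    """Sort unpacked file references into the two groups that cause trouble on a farm."""
    report = {"absolute_unpacked": [], "relative_unpacked": []}
    for f in files:
        if f.is_packed or not f.filepath:
            continue  # embedded in the .blend, or generated data with no file behind it
        if f.filepath.startswith("//"):
            report["relative_unpacked"].append(f.name)  # resolves only if uploaded alongside
        else:
            report["absolute_unpacked"].append(f.name)  # points at a local drive: will break
    return report


files = [
    ExternalFile("wood_albedo", "//textures/wood_albedo.png", is_packed=False),
    ExternalFile("studio_hdri", r"C:\Users\YourName\hdri\studio.exr", is_packed=False),
    ExternalFile("logo", "//textures/logo.png", is_packed=True),
]
print(audit_external_files(files))
```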

Bake simulations and caches. Physics simulations (cloth, fluid, rigid body, smoke), particle systems, and Geometry Nodes setups that depend on simulation data must be baked before you submit. The farm renders each frame independently: it does not run the simulation from frame 1 to produce frame 200. If the cache is not baked, those frames will fail or show the physics objects in their rest state.
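A toy model in Python shows why an unbaked cache fails. This is not how Blender's solvers work internally. It only mimics the one property that matters here: each frame's state is computed from the previous frame's state. The function names are hypothetical.

```python
from typing import Optional


def bake(frames: int, start_height: float = 10.0, drop_per_frame: float = 0.5) -> dict:
    """Toy simulation: an object falls a fixed step per frame until it hits the floor.

    Frame N can only be computed after frames 1..N-1, so the result is cached per frame.
    """
    cache, height = {}, start_height
    for frame in range(1, frames + 1):
        cache[frame] = height
        height = max(0.0, height - drop_per_frame)
    return cache


def render_on_farm_node(frame: int, baked_cache: Optional[dict] = None,
                        rest_height: float = 10.0) -> float:
    """A farm node opens the file and jumps straight to `frame`.

    With a baked cache it reads the stored state. Without one it has no history,
    so it falls back to the object's rest position.
    """
    if baked_cache is None:
        return rest_height
    return baked_cache[frame]


cache = bake(250)
print(render_on_farm_node(200, cache))  # baked: the object has landed
print(render_on_farm_node(200))         # unbaked: still at its rest height
```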

Simplify or remove viewport-only elements. Clean up viewport overlays, Grease Pencil annotations (unless they are part of the render), and objects on disabled render layers. They do not cause errors, but they can inflate the file size and make debugging more confusing.

Check the output settings. In the Output Properties panel:

- Set the resolution to match the delivery spec (do not leave the 1920x1080 default if the project needs 4K)

- Set the frame range (start and end frames)

- Set the output format: PNG for stills, OpenEXR for compositing workflows, PNG sequences for animation

- Set the output path (the farm usually overrides it, but set it correctly to be safe)
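Two easy mistakes here are a resolution percentage below 100% and an off-by-one frame count. The sketch below is a minimal pre-flight check. The `OutputSettings` fields mirror real Blender properties (`scene.render.resolution_x`, `resolution_y`, `resolution_percentage`, `scene.frame_start`, `scene.frame_end`, `scene.render.image_settings.file_format`). The delivery size and allowed formats are assumptions to replace with your own spec.

```python
from dataclasses import dataclass


@dataclass
class OutputSettings:
    """Plain copy of a few Blender output properties, so the check runs outside Blender."""
    resolution_x: int
    resolution_y: int
    resolution_percentage: int
    frame_start: int
    frame_end: int
    file_format: str  # Blender enum names such as "PNG" or "OPEN_EXR"


def effective_resolution(s: OutputSettings) -> tuple:
    # Blender scales the final image by resolution_percentage.
    return (s.resolution_x * s.resolution_percentage // 100,
            s.resolution_y * s.resolution_percentage // 100)


def frame_count(s: OutputSettings) -> int:
    # Both frame_start and frame_end are rendered, so the range is inclusive.
    return s.frame_end - s.frame_start + 1


def output_problems(s: OutputSettings, delivery=(3840, 2160),
                    allowed_formats=("PNG", "OPEN_EXR")) -> list:
    """Return human-readable problems; an empty list means the settings match the spec."""
    problems = []
    if effective_resolution(s) != delivery:
        problems.append(f"resolution {effective_resolution(s)} != delivery {delivery}")
    if frame_count(s) < 1:
        problems.append("frame_end is before frame_start")
    if s.file_format not in allowed_formats:
        problems.append(f"unexpected output format {s.file_format}")
    return problems


# 4K set correctly, but left at 50%: renders 1920x1080.
print(output_problems(OutputSettings(3840, 2160, 50, 1, 300, "PNG")))
```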

Quick pre-upload checklist:

- All textures packed (File > External Data > Automatically Pack Resources)

- Relative paths enabled

- Simulations and caches baked

- Correct render engine selected (Cycles or Eevee)

- Output format and resolution configured

- Camera set (the correct camera is the active one)

- Frame range defined

- No missing linked libraries (File > External Data > Report Missing Files)

Blender scene preparation steps for cloud rendering — pack textures, bake simulations, verify settings
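The checklist can also live as data, so a pipeline script or a teammate ticks off the same items every time. Here is a minimal sketch. The keys are hypothetical names, and filling in the answers (by hand or from `bpy`) is left to you. A missing answer counts as a failure, so the check errs toward catching problems before upload.

```python
PRE_UPLOAD_CHECKLIST = [
    ("textures_packed", "All textures packed"),
    ("relative_paths", "Relative paths enabled"),
    ("caches_baked", "Simulations and caches baked"),
    ("engine_selected", "Correct render engine selected (Cycles or Eevee)"),
    ("output_configured", "Output format and resolution configured"),
    ("active_camera", "Correct camera set as active"),
    ("frame_range", "Frame range defined"),
    ("no_missing_libraries", "No missing linked libraries"),
]


def unchecked_items(answers: dict) -> list:
    """Return the label of every item not explicitly confirmed as True."""
    return [label for key, label in PRE_UPLOAD_CHECKLIST if answers.get(key) is not True]


answers = {key: True for key, _ in PRE_UPLOAD_CHECKLIST}
answers["caches_baked"] = False   # explicitly not done
del answers["active_camera"]      # never answered
for label in unchecked_items(answers):
    print("NOT READY:", label)
```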

Cycles on a Cloud Render Farm

Cycles is the main render engine used for Blender cloud rendering. It is a physically based path tracer, and its output is deterministic: given the same scene, settings, and Blender version, every machine produces the same result. That makes it ideal for distributed rendering.

CPU vs. GPU rendering on a farm. Cycles supports both CPU and GPU rendering. On a farm, the choice depends on your scene:

| Scene type | Recommendation | Reason |
|---|---|---|
| Heavy geometry (millions of polygons) | CPU | Much more system RAM (96–256 GB vs. the GPU's VRAM limit) |
| Volumetrics and subsurface scattering | CPU | CPUs handle these well; GPU acceleration for them is uneven |
| Typical materials, moderate geometry | GPU | Significantly faster render times per frame |
| Scenes under 20–24 GB of memory | GPU | Fits in GPU VRAM (RTX 5090: 32 GB) |
| Mixed (heavy geometry + GPU-friendly materials) | CPU with GPU denoising | Combines memory headroom with fast denoising |
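The table can be condensed into a rule of thumb. This is a minimal sketch, not how any farm assigns hardware. The `vram_usable_fraction` safety margin is an assumption: 0.7 of 32 GB is about 22 GB, in line with the 20–24 GB guideline. How you measure scene memory is up to you, for example the peak memory Blender reports after a local test render.

```python
def recommend_device(scene_memory_gb: float,
                     heavy_volumetrics_or_sss: bool = False,
                     gpu_vram_gb: float = 32.0,
                     vram_usable_fraction: float = 0.7,
                     cpu_ram_gb: float = 256.0) -> str:
    """Suggest CPU or GPU rendering: GPU only when the scene fits comfortably in VRAM."""
    if scene_memory_gb > cpu_ram_gb:
        return "SIMPLIFY"  # too big even for the largest CPU nodes
    if heavy_volumetrics_or_sss:
        return "CPU"       # CPUs handle volumetrics and SSS more consistently
    if scene_memory_gb <= gpu_vram_gb * vram_usable_fraction:
        return "GPU"       # fits in VRAM with headroom: faster per frame
    return "CPU"           # fits in system RAM but not safely in VRAM


for memory_gb in (12, 60, 300):
    print(memory_gb, "GB ->", recommend_device(memory_gb))
```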

On our farm, roughly 70% of Blender jobs run on CPU (dual Intel Xeon E5-2699 V4, 96–256 GB RAM) and 30% on GPU (NVIDIA RTX 5090, 32 GB VRAM). CPU rendering is dependable for almost any scene because it is never limited by VRAM, only by the 96–256 GB of system RAM. GPU rendering is faster per frame but requires the scene to fit in the GPU's memory.

Key Cycles settings for cloud rendering:

- Samples: set a target sample count. With adaptive sampling enabled (Render Properties > Sampling > Noise Threshold set to 0.01), Cycles stops sampling each pixel once it reaches acceptable quality. This saves time in simple areas without lowering quality in complex ones.

- Denoising: enable OpenImageDenoise (OIDN) as the denoiser. It runs as a post-process and handles noise well at lower sample counts. On a farm, that means you can lower the sample count (for example from 4096 to 1024–2048) and let the denoiser remove the remaining noise, which cuts render time significantly.

- Light paths: for most production scenes, the default light path settings are enough. If the scene has complex caustics or deep glass recursion, you may need to raise the Transmission and Glossy bounces. For architectural interiors, 8–12 total bounces is a common starting point.

- Tile size: in Blender 3.0 and later, tiling is managed automatically. You no longer need large tiles for GPU or small tiles for CPU; the engine handles it.
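These settings can be applied from a script so every submission uses the same values. This is a minimal sketch. The property names (`samples`, `use_adaptive_sampling`, `adaptive_threshold`, `use_denoising`, `denoiser`, `max_bounces`) are from Blender's Python API for Cycles scene settings, but check them against the API docs for your Blender version. The values are the suggestions above, and `apply_settings` is a hypothetical helper. A stand-in object lets it run outside Blender.

```python
from types import SimpleNamespace

# Values from the recommendations above; tune them per scene.
FARM_CYCLES_SETTINGS = {
    "samples": 1024,                # lowered target count, relying on the denoiser
    "use_adaptive_sampling": True,  # stop sampling pixels that have converged
    "adaptive_threshold": 0.01,     # the Noise Threshold field in the UI
    "use_denoising": True,
    "denoiser": "OPENIMAGEDENOISE",
    "max_bounces": 12,              # total bounces; 8-12 for interiors
}


def apply_settings(target, settings: dict) -> None:
    """Copy settings onto a properties object, refusing unknown names so a typo
    fails loudly instead of silently creating a new attribute."""
    for name, value in settings.items():
        if not hasattr(target, name):
            raise AttributeError(f"{type(target).__name__} has no property {name!r}")
        setattr(target, name, value)


# Inside Blender: apply_settings(bpy.context.scene.cycles, FARM_CYCLES_SETTINGS)
fake_cycles = SimpleNamespace(samples=4096, use_adaptive_sampling=False, adaptive_threshold=0.0,
                              use_denoising=False, denoiser="OPTIX", max_bounces=12)
apply_settings(fake_cycles, FARM_CYCLES_SETTINGS)
print(fake_cycles.samples, fake_cycles.denoiser)
```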

For an in-depth look at every Cycles render panel, see our guide to optimizing Blender render settings.

Eevee and Cloud Rendering

Eevee (Eevee Next in Blender 4.x) works differently from Cycles. It is a rasterization engine: it renders with screen-space techniques, shadow maps, and light probes rather than ray tracing. That makes it extremely fast on a local machine but complicates things on a render farm.

The core problem is that Eevee renders do not distribute as easily as Cycles renders. Eevee depends on a GPU context, and some viewport-derived state (light probe baking, screen-space reflections) can behave differently from one machine to another. Some render farms support Eevee, but the results may not exactly match your local render.

Our recommendation: if the project uses Eevee, render it locally. Eevee is fast enough that cloud rendering is usually unnecessary: a 300-frame Eevee animation at 5 seconds per frame finishes in 25 minutes on one GPU. If you really do need a farm for Eevee (very long animations or very high resolutions), confirm that the farm supports Eevee and test a small batch of frames before submitting the full job.

For production work that needs both quality and speed, a common approach is to preview in Eevee during production and render the final output with Cycles on the farm. That gives you real-time feedback during the creative process and physically accurate results for delivery.

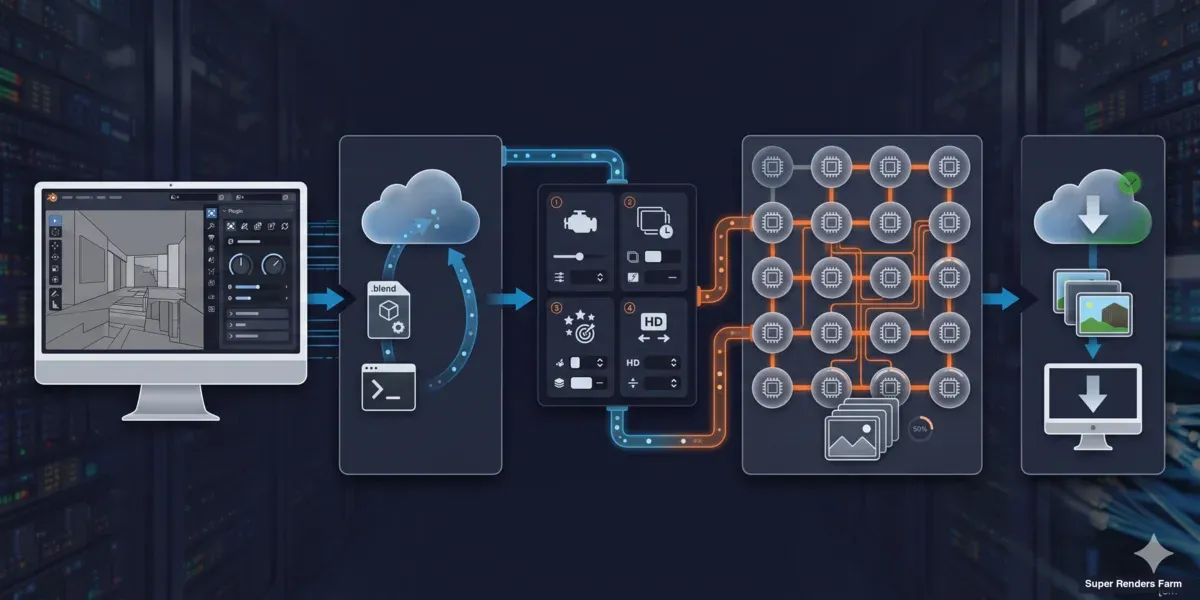

Job Submission Workflow

The exact steps vary between render farms, but the core workflow is the same everywhere. Here is how it works on a fully managed farm such as Super Renders Farm:

Step 1: Install the plugin. Most render farms provide a Blender add-on that integrates directly into the interface. On our farm, the Super Renders Farm plugin for Blender adds a panel to the render properties where you can configure and submit jobs without leaving Blender.

Step 2: Upload the scene. The plugin packages the .blend file (with all assets packed) and uploads it to the farm. If the scene uses external assets that cannot be packed (for example a very large texture library or a separately stored simulation cache), you can upload them as a separate archive.

Step 3: Configure the farm settings. Choose the render engine (Cycles CPU or GPU), frame range, output format, and priority. The farm's interface may also let you set a cost limit or notification options.

Step 4: Submit and monitor. Once the job is submitted, the farm distributes frames across the available machines. You can track progress in the plugin panel or on the farm's web dashboard, including completed frames, render time per frame, and error logs if any.

Step 5: Download the results. Finished frames can be downloaded as soon as they complete. Most farms support automatic download through the plugin, so frames appear in your output folder without manual intervention.

The whole process, from clicking "Submit" to receiving your first frame, typically takes 5 to 15 minutes depending on upload speed and the farm's queue.

Render farm submission workflow for Blender — install plugin, upload, configure, render, download
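Farms do not usually publish their schedulers, so the following is a hypothetical model rather than how any specific farm works: each idle machine pulls the next unrendered frame from a queue. It shows why the per-frame times in step 4 are worth watching, since a single slow frame can set the finish time for the whole job.

```python
import heapq


def simulate_pull_queue(frame_minutes: list, workers: int):
    """Hypothetical scheduler: whichever machine frees up first takes the next frame.

    frame_minutes[i] is the render time of frame i + 1.
    Returns (finish time in minutes, {worker: [frames it rendered]}).
    """
    free_at = [(0.0, w) for w in range(workers)]  # (time the worker becomes idle, worker id)
    heapq.heapify(free_at)
    assigned = {w: [] for w in range(workers)}
    for frame, minutes in enumerate(frame_minutes, start=1):
        idle_time, worker = heapq.heappop(free_at)  # earliest idle machine
        assigned[worker].append(frame)
        heapq.heappush(free_at, (idle_time + minutes, worker))
    finish = max(t for t, _ in free_at)
    return finish, assigned


# Six frames on three machines; frame 5 is unusually heavy.
finish, assigned = simulate_pull_queue([10, 10, 10, 10, 30, 10], workers=3)
print(finish, assigned)
```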

Licensing and Add-on Compatibility

One of the most common concerns we hear from Blender artists moving to cloud rendering is what happens with add-ons and commercial assets.

Blender itself: Blender is open source (GPL). There are no licensing restrictions, so a farm can run Blender for free on every machine.

Render engines: Cycles ships with Blender and carries no additional license cost. If you use a third-party engine such as V-Ray for Blender or Redshift for Blender, the render farm needs a license for it. On our farm, licenses for V-Ray, Corona, Arnold, and Redshift are included in the render cost, so you do not need to supply your own.

Geometry-generating add-ons: add-ons such as Scatter or BagaPie, and the Geometry Nodes setups they use to generate geometry at render time, need to be available on the farm. The safest approach is to apply modifiers and convert procedural geometry to meshes before submitting. If the add-on is commercial, check with the farm: some farms install popular add-ons, others do not.

Texture and asset libraries: assets from libraries such as Poliigon, Quixel Megascans, or Poly Haven are fine as long as they are packed into the .blend file. The farm does not need its own access to these libraries; the textures only need to be embedded in the scene file.

Cost Optimization

The cost of cloud rendering depends on three variables: render time per frame, number of frames, and the hardware you use (CPU vs. GPU). Here are practical ways to reduce it:

1. Optimize the scene before uploading. Every minute saved per frame is multiplied across the whole job. The biggest wins:

- Enable adaptive sampling (Noise Threshold: 0.01), which can cut render time by 20–40%

- Use OpenImageDenoise and lower the sample count (2048 → 1024)

- Limit light bounces to what the scene actually needs (interiors: 8–12, exteriors: 4–6)

- Disable render layers that are not needed for the final output

2. Test a small batch first. Render 5 to 10 frames before submitting the full job. This catches errors early (missing textures, wrong settings, memory problems) and gives you an accurate per-frame cost. Multiply it by the total frame count and you have a budget before you commit.

3. Pick the right hardware tier. GPU rendering is faster per frame but costs more per hour; CPU rendering is slower per frame but cheaper per hour. For many scenes the total cost comes out similar, but if the scene fits in GPU memory (under 20–24 GB), GPU is usually more cost-effective because the shorter render time outweighs the higher hourly rate.

4. Use frame ranges strategically. If you are rendering an animation, submit it in ranges (frames 1–100, 101–200) rather than as one huge job. That lets you catch problems after the first batch and adjust settings before spending the whole budget.
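Steps 2 to 4 are simple arithmetic, sketched below in Python. The hourly rates and test-batch costs are hypothetical numbers for illustration only, not any farm's pricing. The 15% `safety_margin` is an assumed buffer for frames heavier than the ones you tested.

```python
import statistics


def cost_per_frame(minutes_per_frame: float, price_per_hour: float) -> float:
    """Step 3: compare hardware tiers by cost per frame, not by hourly price."""
    return minutes_per_frame / 60 * price_per_hour


def estimate_job_cost(test_frame_costs: list, total_frames: int,
                      safety_margin: float = 0.15) -> float:
    """Step 2: extrapolate a budget from a small test batch, plus an assumed buffer."""
    return statistics.mean(test_frame_costs) * total_frames * (1 + safety_margin)


def split_into_ranges(start: int, end: int, chunk: int) -> list:
    """Step 4: split an inclusive frame range into batches of at most `chunk` frames."""
    return [(s, min(s + chunk - 1, end)) for s in range(start, end + 1, chunk)]


# Hypothetical rates: the slower CPU tier is cheaper per hour but not per frame.
print(f"CPU: ${cost_per_frame(24, 1.00):.2f}/frame, GPU: ${cost_per_frame(8, 2.40):.2f}/frame")
print(f"Budget for 300 frames: ${estimate_job_cost([0.40, 0.45, 0.50, 0.42, 0.48], 300):.2f}")
print(split_into_ranges(1, 300, 100))
```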

For detailed pricing models and cost calculations, see our per-frame render farm cost guide and the pricing page.

Common Problems and How to Fix Them

These are the problems we see most often in Blender cloud rendering jobs, based on real support tickets:

| Problem | Cause | Fix |
|---|---|---|
| Missing textures (pink or grey surfaces) | Assets not packed | File > External Data > Pack All Into .blend |
| Render differs from local | Different Cycles version | Match the farm's Blender version to your local version |
| Out of memory (GPU) | Scene exceeds GPU VRAM | Switch to CPU rendering or simplify geometry |
| Simulation renders incorrectly | Cache not baked | Bake all simulations before submitting |
| Random frames fail | Unstable scene (bad geometry or driver expressions) | Render the failing frames locally to reproduce the error |
| Black frames | No active camera, or render region enabled | Check the active camera and clear the render region (Ctrl+Alt+B) |
| Render takes longer than expected | High sample count without adaptive sampling | Enable adaptive sampling with a noise threshold of 0.01 |
| Wrong colors | Color management mismatch | Set the View Transform to match your local setting (AgX or Filmic) |

If you hit a problem that is not on this list, first render the exact failing frame on your own machine with the same settings. If it renders locally, the problem is probably in the file packaging (missing assets or wrong paths). If it also fails locally, the problem is in the scene setup.
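One way to render just the failing frame locally is Blender's command line in background mode. The sketch builds the argument list in Python. `-b` (background), `-E` (render engine), `-o` (output path), and `-f` (render one frame) are standard Blender command-line options; the file name, frame number, and output pattern are placeholders. Blender processes arguments left to right and `-f` starts the render, so the render options have to come before it.

```python
import shlex


def local_repro_command(blend_file: str, frame: int, engine: str = "CYCLES",
                        output: str = "//debug/frame_####") -> list:
    """Build a background render command for a single frame.

    '#' characters in the output path become the zero-padded frame number,
    and '//' means relative to the .blend file. -E and -o precede -f on purpose.
    """
    return ["blender", "-b", blend_file, "-E", engine, "-o", output, "-f", str(frame)]


cmd = local_repro_command("shot_010.blend", 137)
print(shlex.join(cmd))
# With Blender on PATH: subprocess.run(cmd, check=True)
```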

Geometry Nodes and Procedural Workflows

Blender's Geometry Nodes system deserves special attention in cloud rendering. Procedural geometry generated at render time works correctly on a farm, because the farm evaluates your node tree exactly as your local machine does. There are a few exceptions, though:

Simulation zones (introduced in Blender 3.6): these must be baked before submitting, just like traditional physics simulations. The farm renders each frame independently and cannot simulate forward from frame 1.

Random seed variation: if your Geometry Nodes setup uses random distribution, the output on the farm is identical as long as the seed values are the same. No action is needed, because Geometry Nodes random values are deterministic for a given seed.
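Any seeded random generator follows the same principle. Here is a minimal illustration using Python's own `random.Random` rather than Blender's internal random functions, so only the idea carries over: same inputs and seed produce the same output. `scatter_points` is a hypothetical toy, not a Blender API.

```python
import random


def scatter_points(count: int, seed: int, size: float = 10.0) -> list:
    """Toy stand-in for a Distribute Points node: positions depend only on the inputs.

    A private Random instance avoids hidden global state, so two machines running
    this with the same count and seed produce the same points.
    """
    rng = random.Random(seed)
    return [(rng.uniform(0, size), rng.uniform(0, size)) for _ in range(count)]


workstation = scatter_points(1000, seed=42)
farm_node = scatter_points(1000, seed=42)
print(workstation == farm_node)                      # same seed
print(workstation == scatter_points(1000, seed=43))  # different seed
```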

Performance-heavy node trees: complex procedural setups can consume a lot of memory. If Geometry Nodes produce millions of instances at render time, check memory usage locally first. A scene that uses 60+ GB of RAM locally will need CPU rendering on the farm (where nodes have 96–256 GB of RAM). GPU rendering will fail if the generated geometry exceeds VRAM.

Getting Started

Moving from local to cloud rendering is straightforward once the scene is properly prepared. The workflow for most Blender artists:

- Prepare the scene: pack assets, bake simulations, check settings

- Install the farm plugin: download it from the farm's documentation

- Submit a test batch: 5–10 frames to confirm that everything renders correctly

- Review and adjust: check output quality, cost per frame, and render time

- Submit the full job, and keep working while the farm handles the render

For guidance on Blender render settings, our render settings optimization guide covers every panel. For animation workflows, our animation rendering guide walks through frame sequences, output formats, and temporal denoising.

If you are evaluating render farms for Blender, our 2026 Blender render farm comparison covers what to look for: pricing models, engine support, and plugin quality.

FAQ

Does Blender cloud rendering support both Cycles and Eevee?

Cycles is fully supported on all major render farms because it produces deterministic results across different hardware. Eevee has limited farm support because it depends on a GPU context, and most farms recommend Cycles for distributed rendering. If your project uses Eevee, rendering locally is usually faster and more reliable.

Do I need to provide my own Blender license for cloud rendering?

No. Blender is open source software released under the GPL, so render farms can run it on every machine without licensing fees. This is one of Blender's advantages for cloud rendering: there is no per-node license cost, unlike some commercial DCC applications.

How do I prepare a Blender file for a render farm?

Pack all external resources into the .blend file (File > External Data > Automatically Pack Resources), use relative paths, bake all simulation and physics caches, and set the render engine, resolution, frame range, and output format before uploading. Run File > External Data > Report Missing Files to catch unresolved references.

What happens to my textures and add-ons when I render in the cloud?

Textures packed into the .blend file render correctly on every farm machine. For commercial add-ons that generate geometry at render time, the safest approach is to apply modifiers or convert to mesh before submitting. Third-party render engines (V-Ray, Redshift) need licenses on the farm; fully managed farms usually include this cost in the render price.

Is GPU or CPU rendering better for Blender on a farm?

It depends on the scene. GPU rendering (for example on an NVIDIA RTX 5090) is faster per frame and cost-effective for scenes that fit in VRAM (under 20–24 GB). CPU rendering (dual Xeon, 96–256 GB RAM) handles far larger scenes and is more reliable for heavy geometry, volumetrics, and subsurface scattering. Many farms offer both, so test a few frames on each to compare.

How much does it cost to render a Blender project on a cloud farm?

The cost depends on render time per frame, number of frames, and hardware type. As a reference example, an interior Cycles scene at 2048 samples that renders in 8 minutes per frame on GPU costs roughly $0.30–0.80 per frame, so a 300-frame animation would cost $90–240. Enabling adaptive sampling and denoising can reduce that by 30–50%. Most farms let you run a small test batch to estimate the total cost before you commit.

Can I render Geometry Nodes and procedural setups on a cloud farm?

Yes. Geometry Nodes evaluate identically on farm machines and on your local machine, so the output is deterministic. The main thing to watch is memory: if your procedural setup generates millions of instances, make sure the scene fits within the farm's hardware limits. Simulation zones (Blender 3.6 and later) must be baked before submitting, just like traditional physics simulations.

Which Blender versions do render farms support?

Most farms support all official stable and LTS releases. On our farm, we maintain the current and LTS versions of Blender and update within a few days of each new release. Always match the farm's Blender version to the one you used to build the scene; a version mismatch can cause subtle differences in the rendered output, especially with shaders and Geometry Nodes.

About Alice Harper

Blender and V-Ray specialist. Passionate about optimizing render workflows, sharing tips, and educating the 3D community to achieve photorealistic results faster.