How to render animations in Blender: a complete guide for 3D artists

Overview

Introduction

Rendering a single frame in Blender is simple. Rendering an animation (hundreds or thousands of frames with consistent quality, no flickering, and manageable render times) is a completely different challenge. Every inefficiency in the render settings is multiplied by the number of frames, and problems that are invisible in a still image (temporal noise, light flickering, memory leaks) become obvious in motion.

We render Blender animations every day on our render farm, and the problems we see most often come down to the same mistakes: the wrong output format, unoptimized sample counts, denoising that introduces temporal artifacts, and memory that grows frame after frame until the job crashes. This guide walks through the whole process of setting up and rendering an animation in Blender 4.x, from the first render settings to the final output, whether you are rendering locally or sending frames to a cloud render farm.

The examples here use Blender 4.2 LTS with Cycles, although we cover Eevee workflows where relevant. If you need a deeper reference on individual render settings, our guide to optimizing Blender render settings covers every panel in detail.

Scegliere il motore di rendering per l'animazione

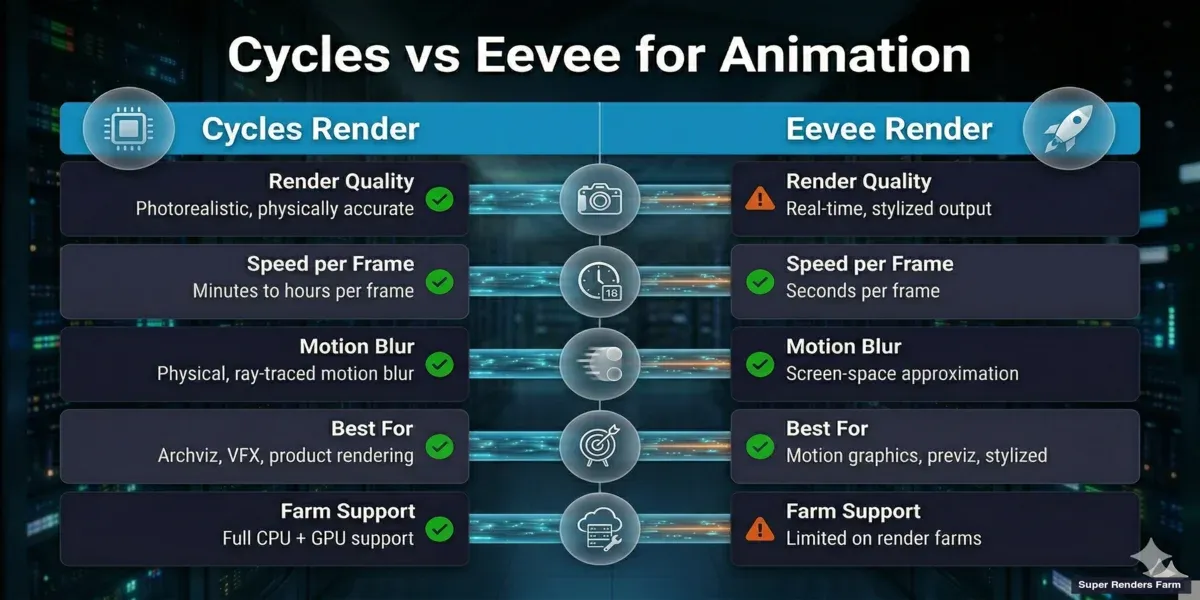

Blender include due motori di produzione: Cycles (path tracing) ed Eevee (rasterizzazione). Entrambi possono renderizzare animazioni, ma hanno punti di forza diversi per il lavoro in movimento.

Cycles è la scelta principale per l'animazione fotorealistica. Gestisce illuminazione complessa, riflessioni, volumetrici e motion blur fisicamente — il che significa ottenere risultati corretti senza simulare nulla artificialmente. Il compromesso è il tempo di rendering. Un singolo frame Cycles può richiedere da 2 a 30 minuti a seconda della complessità, quindi un'animazione di 300 frame può richiedere da 10 ore a diversi giorni su una singola macchina.

Eevee (Eevee Next in Blender 4.x) renderizza a velocità quasi in tempo reale usando la rasterizzazione. È eccellente per motion graphics, animazione stilizzata e previz. Eevee gestisce animazioni dove non è richiesto fotorealismo assoluto — sequenze di titoli, loop astratti, fly-through architettonici dove la velocità è più importante della precisione del ray tracing. Il manuale di Blender tratta entrambi i motori in dettaglio.

When to use which:

| Scenario | Recommended engine |
|---|---|
| Architectural walkthrough (photorealistic) | Cycles |
| Product turntable with reflections/caustics | Cycles |
| Motion graphics / title sequence | Eevee |
| Character animation (stylized look) | Eevee |
| VFX compositing plates | Cycles |
| Quick previz before the final render | Eevee, then Cycles for finals |
| Long animation (1,000+ frames, tight deadline) | Cycles on a render farm |

A common workflow we see: artists iterate on timing and camera moves with Eevee (seconds per frame), then switch to Cycles for the final render. This saves hours of waiting during the creative phase.

Comparison infographic: Cycles vs Eevee for rendering animations in Blender

Setting up the animation render

Before you click Render Animation, configure these main settings in the Output Properties panel.

Frame range

Set the start frame and end frame in Output Properties > Frame Range. By default, Blender uses frames 1-250. For production work, set them to match your timeline exactly: rendering extra frames wastes time, and missing frames mean re-rendering.

The Frame Step setting renders every Nth frame. Setting it to 2 renders frames 1, 3, 5, 7, and so on, which is useful for test renders that check timing at half the cost. Always reset it to 1 for final renders.
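As a minimal sketch of the Frame Step arithmetic, the helper below lists which frames a given range and step would visit. `frames_to_render` is a hypothetical name used only for illustration; inside Blender the three values correspond to `scene.frame_start`, `scene.frame_end`, and `scene.frame_step`.

```python
def frames_to_render(frame_start, frame_end, frame_step=1):
    """Return the frame numbers an animation render would visit."""
    if frame_step < 1:
        raise ValueError("frame_step must be at least 1")
    # The end frame is inclusive, hence the + 1
    return list(range(frame_start, frame_end + 1, frame_step))


preview = frames_to_render(1, 250, frame_step=2)  # test render
final = frames_to_render(1, 250)                  # final render

print(preview[:4])                # [1, 3, 5, 7]
print(len(preview), len(final))   # 125 250
```

With a step of 2 the default 250-frame range drops to 125 frames, which is where the "half the cost" figure comes from.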

Frame rate

Match your project's target delivery format:

| Use case | Frame rate |
|---|---|
| Cinema / cinematic | 24 fps |
| European broadcast (PAL) | 25 fps |
| North American broadcast (NTSC) | 30 fps (29.97 fps for strict broadcast delivery) |
| Web video / YouTube | 24 or 30 fps |
| Slow motion (rendered at normal speed) | 60 fps |
| Video game cinematics | 30 or 60 fps |

Set it in Output Properties > Format > Frame Rate. Changing the frame rate after adding keyframes requires a retime, so set it before you start animating.
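To see why a late frame-rate change forces a retime, here is a small sketch that keeps each keyframe at the same time in seconds. `retime_frame` is a hypothetical helper, and it assumes frame 0 sits at time zero; it is an illustration of the arithmetic, not how Blender itself remaps keys.

```python
def retime_frame(frame, old_fps, new_fps):
    """Map a keyframe to the new frame rate while preserving its time in seconds."""
    seconds = frame / old_fps
    return round(seconds * new_fps)


keys_at_24 = [0, 12, 24, 48]
keys_at_30 = [retime_frame(f, 24, 30) for f in keys_at_24]
print(keys_at_30)  # [0, 15, 30, 60]
```

Every key moves, and keys that do not land on a whole frame get rounded, which is why it is easier to pick the frame rate first.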

Resolution

Set the final output resolution in Output Properties > Format. Common production resolutions:

- 1920 × 1080 (Full HD): standard for most deliveries

- 2560 × 1440 (QHD, often called 2K): increasingly common for the web

- 3840 × 2160 (4K UHD): high-end delivery, 4× the pixels of HD

Use the Resolution Percentage slider (100% by default) for test renders at reduced resolution. Rendering at 50% gives a quarter of the pixels, which is much faster for checking composition and timing.
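The pixel arithmetic behind those figures can be checked with a short sketch. Both function names are hypothetical, and the truncation used here is an assumption; Blender may round odd sizes differently.

```python
def effective_resolution(width, height, percentage=100):
    """Scale both dimensions by the resolution percentage."""
    scale = percentage / 100
    return int(width * scale), int(height * scale)


def pixel_count(width, height, percentage=100):
    w, h = effective_resolution(width, height, percentage)
    return w * h


full_hd = pixel_count(1920, 1080)
print(pixel_count(1920, 1080, 50) / full_hd)  # 0.25 (a quarter of the pixels)
print(pixel_count(3840, 2160) / full_hd)      # 4.0  (4K UHD vs Full HD)
```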

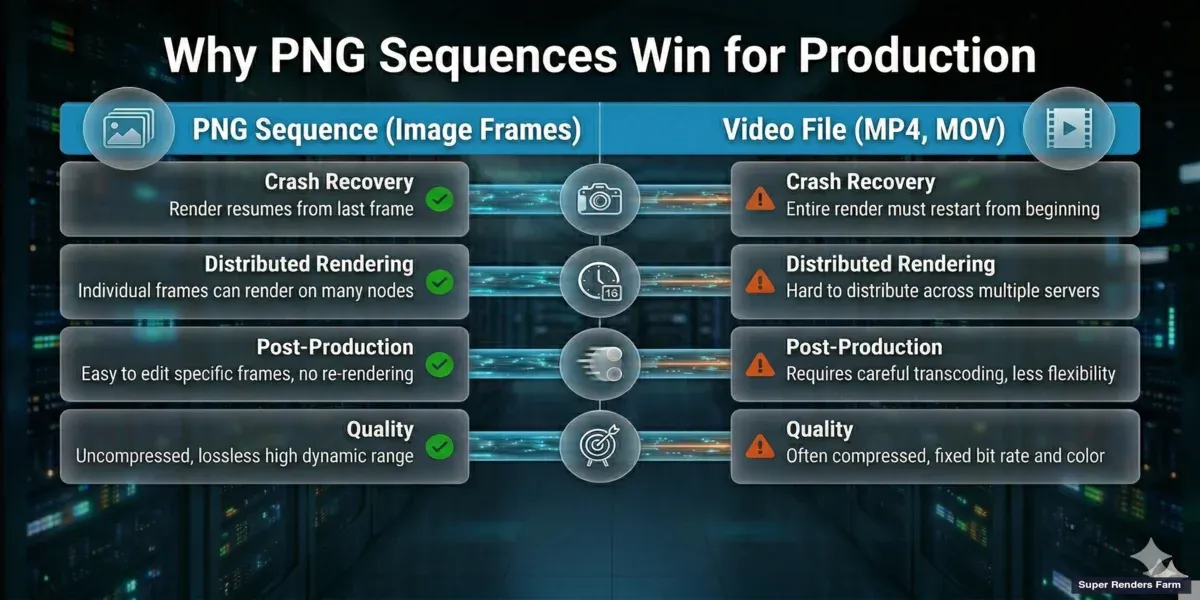

Output format: PNG sequence vs video file

This is one of the most important decisions in animation rendering, and the answer is almost always: render to a PNG image sequence, not to a video file.

Why PNG sequences are preferred in production:

- Crash recovery. If Blender crashes at frame 847 of a 1,200-frame animation, you have 846 completed frames. Change the start frame to 847 and resume. With a video file, a crash means starting over, because the whole file may be corrupted.

- Distributed rendering. Render farms split the animation across many machines. Machine A renders frames 1-100, machine B renders frames 101-200, and so on. Each machine writes individual image files. This is impossible with a single video container.

- Post-production flexibility. Image sequences import frame by frame into compositing software (After Effects, Nuke, DaVinci Resolve), allowing non-destructive color grading, retiming, and effects. You can also replace individual bad frames without re-rendering the whole sequence.

- No quality loss. PNG is lossless. Video codecs such as H.264 are lossy: they compress frames and introduce artifacts, especially in areas with fine detail or subtle gradients.
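The crash-recovery point can be sketched as a small script that scans the output folder and reports which frames still need rendering. The `frame_0001.png` naming and both function names are assumptions for illustration; adapt the pattern to your own output path.

```python
import re
import tempfile
from pathlib import Path

# Assumed file naming: frame_0001.png, frame_0002.png, ...
FRAME_PATTERN = re.compile(r"^frame_(\d{4})\.png$")


def missing_frames(output_dir, frame_start, frame_end):
    """Return the frames in [frame_start, frame_end] that have no usable PNG on disk."""
    done = set()
    for path in Path(output_dir).iterdir():
        match = FRAME_PATTERN.match(path.name)
        # Ignore zero-byte files: they may be placeholders or interrupted writes
        if match and path.stat().st_size > 0:
            done.add(int(match.group(1)))
    return [f for f in range(frame_start, frame_end + 1) if f not in done]


def resume_frame(output_dir, frame_start, frame_end):
    """First frame that still needs rendering, or None if the range is complete."""
    missing = missing_frames(output_dir, frame_start, frame_end)
    return missing[0] if missing else None


# Demo on a throwaway folder: frames 1-3 finished, frame 4 was cut off mid-write
with tempfile.TemporaryDirectory() as folder:
    for n in (1, 2, 3):
        (Path(folder) / f"frame_{n:04d}.png").write_bytes(b"png data")
    (Path(folder) / "frame_0004.png").write_bytes(b"")
    print(resume_frame(folder, 1, 6))  # 4
```

Listing every missing frame, not just the last one on disk, also catches gaps left by a farm node that failed in the middle of its range.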

When video output is acceptable: quick previews, test renders, and social media drafts where convenience outweighs quality. Use FFmpeg Video (a video container) with a fast codec for these cases.

Recommended output settings:

| Setting | Value | Why |
|---|---|---|
| File format | PNG | Lossless, universal, crash-safe |
| Color mode | RGBA | Preserves transparency for compositing |
| Color depth | 16 bit | More headroom for color grading |
| Compression | 15% | Good balance between file size and write speed |
| Output path | //render/project-name/frame_#### | Relative to the .blend file, organized |
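The recommended output settings can also be applied from a script. The sketch below uses property names from Blender's Python API (`scene.render.image_settings` and `scene.render.filepath`); `configure_png_output` and the stand-in scene object are hypothetical and exist only so the example runs outside Blender.

```python
from types import SimpleNamespace


def configure_png_output(scene, project_name):
    """Apply the PNG sequence settings from the table to a scene."""
    render = scene.render
    settings = render.image_settings
    settings.file_format = "PNG"
    settings.color_mode = "RGBA"
    settings.color_depth = "16"   # enum value is a string in the Blender API
    settings.compression = 15     # percent
    # Leading // makes the path relative to the .blend file
    render.filepath = f"//render/{project_name}/frame_####"
    return render.filepath


# Stand-in for a real scene so the sketch runs without Blender.
# Inside Blender, pass bpy.context.scene instead.
mock_scene = SimpleNamespace(
    render=SimpleNamespace(filepath="", image_settings=SimpleNamespace())
)
print(configure_png_output(mock_scene, "project-name"))
```

Keeping the settings in one function makes it easy to apply the same output configuration to every scene in a project.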

Infographic comparing PNG image sequence and video file output for rendering animations in Blender

For OpenEXR workflows (VFX compositing with render passes), set the file format to OpenEXR Multilayer. This embeds all render passes (diffuse, glossy, shadow, mist, cryptomatte) in a single file per frame, which is essential for professional compositing pipelines. See our guide to EXR and Cryptomatte workflows for details.

Optimizing Cycles render settings for animation

Animation rendering multiplies every inefficiency. A setting that adds 30 seconds per frame adds 2.5 hours to a 300-frame animation. Here is how to tune Cycles specifically for animation work.

Sampling and adaptive sampling

Cycles in Blender 4.x uses adaptive sampling by default. Instead of rendering a fixed number of samples per pixel, it stops sampling pixels that have already converged: bright, well-lit areas converge quickly, while dark corners and caustics need more samples.

For animation, configure:

- Render Samples: Set this as the upper limit. 256 to 512 is a good range for most scenes with denoising enabled. Complex interiors may need 1,024.

- Noise Threshold: 0.01 is a good default. Lower values (0.005) produce cleaner frames but increase render time. For animation, consistency between frames matters more than absolute cleanliness: a constant noise level is cleaned up by denoising, while varying levels cause a visible "swimming" effect in the final video.

- Min Samples: Keep at 32 or higher so that adaptive sampling does not stop too early on pixels that only look converged after a few samples. Values below 16 can cause artifacts in gradient areas.
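A minimal sketch of the three sampling settings as a script, using the Cycles property names from Blender's Python API (`scene.cycles.samples`, `adaptive_threshold`, `adaptive_min_samples`). `configure_sampling` and the stand-in scene are hypothetical; the defaults simply mirror the values suggested in the list.

```python
from types import SimpleNamespace


def configure_sampling(scene, max_samples=512, noise_threshold=0.01, min_samples=32):
    """Set adaptive sampling for animation on a Cycles scene."""
    cycles = scene.cycles
    cycles.use_adaptive_sampling = True
    cycles.samples = max_samples                  # Render Samples (upper limit)
    cycles.adaptive_threshold = noise_threshold   # Noise Threshold
    cycles.adaptive_min_samples = min_samples     # Min Samples
    return cycles


# Stand-in scene so the sketch runs outside Blender;
# inside Blender, pass bpy.context.scene instead.
mock_scene = SimpleNamespace(cycles=SimpleNamespace())
settings = configure_sampling(mock_scene)
print(settings.samples, settings.adaptive_threshold, settings.adaptive_min_samples)
```

For a flicker-prone shot, the same function can be called with `noise_threshold=0.005` and `min_samples=64`.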

Denoising for animation

Denoising is essential for animation: it lets you render with lower sample counts while keeping visual quality. But not all denoisers handle animation equally well.

- OpenImageDenoise (OIDN): Included with Blender. It runs on the CPU and, since Blender 4.1, on supported GPUs. It produces excellent results and is the most stable option for animation. Consistent frame-to-frame behavior minimizes temporal flickering. Use it as the default.

- OptiX Denoiser: GPU-based (NVIDIA only). Faster than OIDN but can produce slightly different results from frame to frame, which can cause slight flickering in animations. Better suited to preview renders where speed matters.

For production animation, we recommend OIDN with the Prefilter set to "Accurate", applied to the final render (not just the viewport). Enable it in Render Properties > Sampling > Render > Denoise, and set its Passes option to Albedo and Normal so that the denoiser gets the extra guiding information. If you denoise in the compositor instead, also enable the Denoising Data pass in View Layer Properties > Passes > Data, which exposes the same albedo and normal passes.
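As a hedged sketch, the denoiser recommendation maps onto the following Cycles properties in Blender's Python API. `configure_denoising` and the stand-in scene are hypothetical names for illustration, and the enum strings are the ones used by Blender 4.x as far as we know.

```python
from types import SimpleNamespace


def configure_denoising(scene):
    """Enable OpenImageDenoise for final renders with albedo and normal guides."""
    cycles = scene.cycles
    cycles.use_denoising = True                           # final render, not viewport
    cycles.denoiser = "OPENIMAGEDENOISE"
    cycles.denoising_input_passes = "RGB_ALBEDO_NORMAL"   # albedo + normal guides
    cycles.denoising_prefilter = "ACCURATE"
    return cycles


# Stand-in scene so the sketch runs outside Blender;
# inside Blender, pass bpy.context.scene instead.
mock_scene = SimpleNamespace(cycles=SimpleNamespace())
print(vars(configure_denoising(mock_scene)))
```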

Light paths

Light path settings control how many times rays bounce in the scene. For animation:

- Total bounces: 8-12 for most scenes. Architectural interiors with many reflective surfaces may need 12-16.

- Transparent bounces: Raise to 16-32 if the scene has overlapping glass, curtains, or layered translucent materials. Too few transparent bounces cause black artifacts that flicker in the animation.

- Volume bounces: Raise above 0 only if you have volumetric fog, smoke, or fire. Each volume bounce adds significant render time.

Motion blur

Cycles handles motion blur physically: it samples the scene at multiple points in time within each frame's shutter interval. This is accurate but expensive.

- Shutter: 0.5 is the standard (180-degree shutter). Values above 1.0 create exaggerated blur. Values below 0.25 may not produce visible blur at 24fps.

- Steps: Controls the quality of the motion blur. The default (1) works for simple movement. Raise it to 3-5 for fast-moving objects or complex deformation.

- Position: "Center on Frame" is the standard. "Start on Frame" shifts the direction of the blur.

If motion blur adds more than 30% to render time, consider rendering without it and adding it in post using a vector pass: export the Vector render pass and apply directional blur in the compositor.
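The relationship between the Shutter value, the traditional shutter angle, and the exposure time is simple arithmetic. The sketch below treats Shutter as the fraction of a frame during which the shutter is open; the function names are hypothetical.

```python
def shutter_angle_degrees(shutter):
    """Convert a Shutter value (fraction of a frame) to a shutter angle."""
    return 360.0 * shutter


def exposure_seconds(shutter, fps):
    """Time the virtual shutter stays open for each frame."""
    return shutter / fps


print(shutter_angle_degrees(0.5))   # 180.0
print(exposure_seconds(0.5, 24))    # 1/48 of a second
print(exposure_seconds(0.25, 24))   # 1/96 of a second
```

At 24 fps, a Shutter of 0.25 exposes each frame for only 1/96 of a second, which is why slow movement shows little visible blur at that setting.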

Memory management for long animations

Memory problems are the most common cause of failed animation renders. A scene that renders frame 1 without trouble can crash at frame 400 because memory use grows over time.

Why memory grows during animation rendering:

- Texture caching: Blender caches textures in memory. Animated textures or procedural textures that change per frame accumulate data in the cache.

- Particle systems and simulations: Hair, cloth, and fluid simulations store state per frame. Long simulations can consume gigabytes.

- Undo history: Blender keeps undo steps in memory by default. For rendering, this serves no purpose.

How to prevent memory crashes:

- Bake simulations before rendering. Bake particle systems, cloth, fluid, and rigid body simulations to disk. This stops Blender from recomputing physics for every frame and keeps memory use predictable.

- Reduce undo steps. In Preferences > System > Memory & Limits, reduce Undo Steps to 0 while rendering. This frees memory that would otherwise accumulate.

- Use Persistent Data (Render Properties > Performance > Final Render; older versions call it Persistent Images). This keeps texture and scene data in memory between frames instead of reloading it. It sounds counterintuitive, because the baseline memory use is higher, but it avoids the repeated load-and-free cycle that can fragment memory and cause gradual growth.

- Enable CPU + GPU rendering (Cycles). In Preferences > System > Cycles Render Devices, enable both CPU and GPU. This spreads the workload and can prevent GPU memory overflow on complex scenes. See our GPU vs CPU rendering guide to understand when this makes sense.

- Render in chunks. If you render locally on a machine with limited RAM, split the animation into chunks (frames 1-100, 101-200, and so on) and restart Blender between chunks. This clears the accumulated memory.
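Rendering in chunks is easy to automate from the command line, since each `blender -b` call is a fresh process that starts with clean memory. The sketch below only builds the commands; `split_into_chunks`, `blender_chunk_command`, and the file name `scene.blend` are hypothetical, while `-b`, `-s`, `-e`, and `-a` are Blender's standard command-line options.

```python
def split_into_chunks(frame_start, frame_end, chunk_size):
    """Split an inclusive frame range into consecutive (start, end) chunks."""
    if chunk_size < 1:
        raise ValueError("chunk_size must be at least 1")
    chunks = []
    start = frame_start
    while start <= frame_end:
        end = min(start + chunk_size - 1, frame_end)
        chunks.append((start, end))
        start = end + 1
    return chunks


def blender_chunk_command(blend_file, start, end, blender="blender"):
    """Build a background render command for one chunk."""
    # -b: run without UI, -s/-e: frame range, -a: render animation.
    # Arguments are processed in order, so -s and -e must come before -a.
    return [blender, "-b", blend_file, "-s", str(start), "-e", str(end), "-a"]


for start, end in split_into_chunks(1, 250, 100):
    print(" ".join(blender_chunk_command("scene.blend", start, end)))
```

Each command list can be passed to `subprocess.run(cmd, check=True)` one after another, so that a failed chunk stops the batch instead of silently leaving a gap.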

Renderizzare animazioni Blender su una render farm

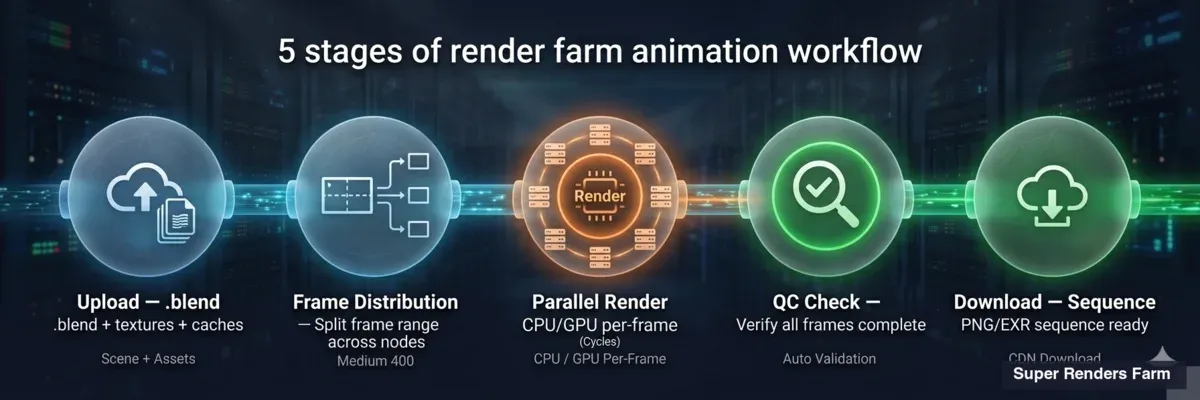

Per animazioni con più di qualche centinaio di frame, il rendering in locale è spesso impraticabile. Un'animazione Cycles di 500 frame a 5 minuti per frame richiede 42 ore su una singola macchina. Su una render farm, gli stessi 500 frame si distribuiscono su decine di macchine e sono pronti in ore invece che in giorni.
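A back-of-the-envelope estimate makes the difference concrete. This sketch assumes identical machines, a constant time per frame, and no upload, queue, or download overhead; `render_hours` and the 25-machine figure are hypothetical.

```python
import math


def render_hours(frame_count, minutes_per_frame, machines=1):
    """Wall-clock hours when frames are split evenly across machines."""
    # The slowest machine gets the rounded-up share of frames
    frames_per_machine = math.ceil(frame_count / machines)
    return frames_per_machine * minutes_per_frame / 60


print(round(render_hours(500, 5), 1))               # 41.7 hours on one machine
print(round(render_hours(500, 5, machines=25), 1))  # 1.7 hours on 25 machines
```

Real jobs rarely scale this cleanly, because frame times vary across the timeline, but the estimate is useful for deciding whether a deadline is reachable locally.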

Pipeline diagram showing the render farm workflow for Blender animations: upload, distribution, rendering, download

How farm rendering works for animations:

- You upload the .blend file and all linked assets (textures, caches, HDRIs) through the farm's submission tool.

- The farm splits the frame range among the available machines. Each machine renders its assigned frames independently.

- Completed frames are uploaded to the download folder as PNG or EXR files, one file per frame.

- You download the complete sequence and assemble it locally.

This is exactly why PNG sequences matter. Each machine writes individual frame files. There is no single video container that can get corrupted.

What we support for Blender on our farm:

- Cycles (CPU and GPU): Full support. CPU rendering uses our fleet of 20,000+ cores. GPU rendering uses NVIDIA RTX 5090 cards with 32 GB of VRAM, which is enough for most production scenes. Blender is open source, so there are no license fees to pay.

- Eevee: Limited support on render farms. Eevee depends heavily on the GPU context and viewport state in ways that make distributed rendering unreliable. It works for some scenes, but we recommend Cycles for farm submissions. If your project requires Eevee, test a small frame range first.

Preparing the .blend file for farm submission:

- Pack external files. Go to File > External Data > Pack Resources. This embeds textures and HDRIs in the .blend file so that they transfer to the farm correctly.

- Bake all simulations. Particle, cloth, and fluid caches must be baked and saved, because farm machines cannot run the simulation from scratch.

- Set the output to PNG or EXR. Never set the output to a video format when submitting to a farm, because every machine would try to write to the same video file.

- Remove viewport-only objects. Disable or delete objects used only for viewport reference (empties with background images, guide meshes). They waste memory on the farm machines.

- Test 3 frames. Render frames from the start, middle, and end of the timeline locally to verify the settings before uploading. This catches problems that would waste farm credits.
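The three-frame test from the checklist can be scripted as a single background render. `pick_check_frames`, `blender_check_command`, and `scene.blend` are hypothetical names; `-f` is Blender's command-line option for rendering specific frames and accepts a comma-separated list without spaces.

```python
def pick_check_frames(frame_start, frame_end):
    """Pick the first, middle, and last frame of an inclusive range."""
    middle = (frame_start + frame_end) // 2
    # dict.fromkeys drops duplicates on very short ranges and keeps the order
    return list(dict.fromkeys([frame_start, middle, frame_end]))


def blender_check_command(blend_file, frames, blender="blender"):
    """Build a background render command for a handful of individual frames."""
    frame_list = ",".join(str(f) for f in frames)
    return [blender, "-b", blend_file, "-f", frame_list]


frames = pick_check_frames(1, 300)
print(frames)  # [1, 150, 300]
print(" ".join(blender_check_command("scene.blend", frames)))
```

If the scene has a known heavy moment (an explosion, a dense crowd shot), add that frame to the list as well, since the midpoint is not always the most expensive frame.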

For a detailed walkthrough of pricing and submission, see our pricing page and the render farm guide for Blender.

Rendering multiple camera angles

Many animation projects require renders from several camera angles: architectural walkthroughs with different viewpoints, product turntables with close-ups and wide shots, or VFX plates from multiple virtual cameras.

Blender supports this through scene-based multi-camera setups and batch rendering with Python. Instead of duplicating the whole scene, you create linked scene copies that share the geometry but reference different active cameras. A Python script then iterates over the scenes and renders each one in sequence.

This approach works well on both local machines and render farms. On a farm, each camera angle can be submitted as a separate job, so all angles render in parallel. We cover the full setup in our guide to rendering multiple cameras in Blender.
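A rough sketch of such a batch script is shown below. To keep it runnable outside Blender, the render call is passed in as a function and the scenes are stand-in objects; `render_each_scene`, the folder layout, and the scene and camera names are all assumptions for illustration.

```python
from types import SimpleNamespace


def render_each_scene(scenes, render_animation, output_root="//render"):
    """Give each scene with a camera its own output folder, then render it."""
    rendered_paths = []
    for scene in scenes:
        if scene.camera is None:
            continue  # nothing to render from
        # A separate folder per scene keeps the angles from overwriting each other
        scene.render.filepath = f"{output_root}/{scene.name}_{scene.camera.name}/frame_####"
        render_animation(scene)
        rendered_paths.append(scene.render.filepath)
    return rendered_paths


# Inside Blender this could be called as (not run here):
#   import bpy
#   render_each_scene(
#       bpy.data.scenes,
#       lambda s: bpy.ops.render.render(animation=True, scene=s.name),
#   )

# Stand-in scenes so the sketch runs without Blender
def make_scene(name, camera_name):
    camera = SimpleNamespace(name=camera_name) if camera_name else None
    return SimpleNamespace(name=name, camera=camera, render=SimpleNamespace(filepath=""))


scenes = [make_scene("Wide", "Cam_A"), make_scene("CloseUp", "Cam_B"), make_scene("Draft", None)]
for path in render_each_scene(scenes, lambda scene: None):
    print(path)
```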

Common problems in animation rendering

Flickering between frames

Flickering is usually caused by insufficient samples in areas with complex lighting. Adaptive sampling can converge differently from frame to frame, creating visible brightness variation. Fix: raise Min Samples to 64, tighten Noise Threshold to 0.005, and make sure denoising is enabled. For scenes full of fireflies (bright pixel spikes from caustics), set the Indirect Light clamp to 10, which limits the brightness of indirect light paths.

Denoising artifacts in motion

AI denoisers can produce slightly different results per frame, causing a "boiling" or "swimming" texture effect in areas that should be static. Fix: use OpenImageDenoise (OIDN) instead of OptiX for final renders. OIDN is more temporally consistent. Also make sure the denoiser receives the albedo and normal data (the Denoising Data passes); without them, it has less information and produces less stable results.

Memory crashes on late frames

If the render fails at frame N but frame 1 renders correctly, memory is probably accumulating. See the Memory management section above. The most common culprit is unbaked simulations, so bake everything to disk before rendering.

The animation renders differently from the viewport preview

This usually happens because the viewport and render settings diverge. Check: render resolution vs viewport resolution, render samples vs viewport samples, and render camera vs viewport camera. The render always uses the active camera set in Scene Properties > Camera, not the camera you are looking through in the viewport.

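Render output overwrites previous frames

When rendering an animation, Blender replaces a run of # characters in the output path with the zero-padded frame number, and appends a four-digit frame number if the path contains no # at all. Overwritten frames therefore usually mean that two renders (different scenes, different cameras, or a re-run with Overwrite enabled) share the same output path. Make the path explicit by ending it with something like frame_#### (the #### is replaced by the frame number, padded to 4 digits), and give each shot or camera its own folder.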
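To make the placeholder rule concrete, here is a small sketch that expands a `####` run into a padded frame number. It is an approximation written for illustration, not Blender's own code: `expand_frame_path` is a hypothetical helper that only looks at the file name part of the path and refuses paths without a placeholder.

```python
import re

PLACEHOLDER = re.compile(r"#+")


def expand_frame_path(path, frame, extension=".png"):
    """Replace the last run of # in the file name with the zero-padded frame number."""
    folder, separator, name = path.rpartition("/")
    runs = list(PLACEHOLDER.finditer(name))
    if not runs:
        raise ValueError(f"no # placeholder in file name: {name!r}")
    last = runs[-1]
    # The number of # characters sets the padding width
    number = str(frame).zfill(len(last.group()))
    return folder + separator + name[:last.start()] + number + name[last.end():] + extension


print(expand_frame_path("//render/project-name/frame_####", 47))
# //render/project-name/frame_0047.png
print(expand_frame_path("//render/project-name/shot_##", 7))
# //render/project-name/shot_07.png
```

Running a check like this over every scene's output path before a long render is a cheap way to catch two scenes that would write to the same files.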

Assembling the final video

After rendering the PNG or EXR sequence, you need to encode it into a video file for delivery.

Using Blender's Video Sequence Editor (VSE):

- Open a new Blender file (or switch to the Video Editing workspace).

- In the Sequencer, go to Add > Image/Sequence. Navigate to the render folder and select all the frames.

- Set the output resolution and frame rate to match the render settings.

- Set the output to FFmpeg Video, with the MP4 container, the H.264 codec, and an output quality of High Quality or Lossless.

- Run Render Animation (Ctrl+F12). This encodes the sequence into a video.

Using FFmpeg directly (faster, no graphical interface):

ffmpeg -framerate 24 -i frame_%04d.png -c:v libx264 -crf 18 -pix_fmt yuv420p output.mp4

- -framerate 24: matches the project frame rate

- -crf 18: quality (lower = better; 18 is generally considered visually lossless)

- -pix_fmt yuv420p: ensures compatibility with most players
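When the encode is part of a larger pipeline, the same command can be assembled in Python and handed to `subprocess`. The sketch below only builds the argument list; `ffmpeg_encode_command` is a hypothetical helper, and the optional `-start_number` flag (an option of FFmpeg's image sequence input) is included as an assumption for sequences that do not begin near frame 0 or 1.

```python
def ffmpeg_encode_command(pattern, output, fps=24, crf=18, start_number=None):
    """Build an FFmpeg command that encodes an image sequence to H.264."""
    command = ["ffmpeg", "-framerate", str(fps)]
    if start_number is not None:
        # Input option: must appear before -i
        command += ["-start_number", str(start_number)]
    command += [
        "-i", pattern,
        "-c:v", "libx264",
        "-crf", str(crf),
        "-pix_fmt", "yuv420p",
        output,
    ]
    return command


command = ffmpeg_encode_command("frame_%04d.png", "output.mp4")
print(" ".join(command))
# To run it: subprocess.run(command, check=True)
```

Passing the arguments as a list avoids shell quoting problems when the render folder name contains spaces.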

For professional delivery, encode to ProRes (for delivery to editorial) or DNxHR (for broadcast) instead of H.264.

FAQ

Q: Which output format should I use to render animations in Blender? A: A PNG image sequence is the recommended format for production animation rendering. PNG is lossless, allows crash recovery (you keep every frame rendered before a crash), and is compatible with render farm workflows where multiple machines render frames simultaneously. Encode to H.264 or ProRes only as the final delivery step.

Q: How do I reduce render time for Blender animations? A: Enable adaptive sampling with a Noise Threshold of 0.01, use OpenImageDenoise to allow lower sample counts (256-512 instead of 2,048+), reduce light path bounces according to scene complexity, and render at the minimum resolution you need. For large projects, a render farm with thousands of CPU cores can cut days of rendering down to hours.

Q: Can I render Eevee animations on a render farm? A: Eevee has limited support on render farms because it depends on GPU context and viewport state that do not always transfer cleanly between machines. Cycles is the recommended engine for farm rendering. If your project requires Eevee, test a small frame range on the farm first to verify compatibility before committing to a full render.

Q: Why do the frames of my Blender animation flicker? A: Flickering is typically caused by adaptive sampling converging differently per frame, producing varying noise levels that the denoiser handles inconsistently. Fix it by raising Min Samples to 64, tightening Noise Threshold to 0.005, using OpenImageDenoise (which is more temporally stable than OptiX), and giving the denoiser the albedo and normal data (Denoising Data passes) for better input.

Q: Should I use GPU or CPU rendering for Blender animations? A: Both work well for Cycles animations. GPU rendering (CUDA, OptiX, HIP) is faster per frame but limited by VRAM: complex scenes with many textures can exceed GPU memory. CPU rendering is limited only by system RAM, so it handles much larger scenes, but it is slower per frame. On render farms, CPU fleets offer more total compute cores, while GPU machines offer faster individual frame times. Many artists use GPU rendering for simple scenes and CPU for complex ones.

Q: How do I resume a failed animation render in Blender? A: If you rendered to a PNG or EXR image sequence, check the output folder to find the last successfully rendered frame. Set the start frame to the next frame number and render again; Blender continues from where it stopped. If "Overwrite" is enabled in Output Properties, existing frames are re-rendered; disable it to skip frames already on disk.

Q: What causes memory crashes during long animation renders? A: Memory typically accumulates from unbaked simulations (particles, cloth, fluid), texture caching, and undo history. Bake all simulations to disk before rendering, reduce Undo Steps to 0 in Preferences, and enable Persistent Data (Persistent Images in older versions) in the render performance settings. For very long animations, consider rendering in chunks and restarting Blender between them to clear the accumulated memory.

Q: How many samples do I need for animation rendering in Cycles? A: With denoising enabled, 256 to 512 samples and a Noise Threshold of 0.01 produce clean results for most scenes. Architectural interiors with complex caustics may need 1,024 samples. The key for animation is consistency: a uniform noise level across frames is denoised more predictably than varying levels. Use adaptive sampling to let Blender allocate extra samples only where needed.

About Alice Harper

Blender and V-Ray specialist. Passionate about optimizing render workflows, sharing tips, and educating the 3D community to achieve photorealistic results faster.