Build vs Cloud Render Farm: The Real Cost Breakdown You Need Before Deciding

Introduction

Every year, studios face the same decision: build a local render farm or pay for cloud rendering? The answer seems simple — run the numbers, pick the cheaper option. But most cost comparisons miss half the picture.

We have been operating a production render farm for over 15 years, supporting V-Ray, Corona, Redshift, Arnold, and every major DCC application. In that time, we have watched studios build farms, outgrow them, replace them, and sometimes abandon them entirely. The pattern is consistent: the upfront hardware purchase feels like the big decision, but it is the ongoing costs — render engine licenses, software maintenance, power, cooling, IT time — that determine whether building was actually worth it.

This guide breaks down every cost category involved in building a local render farm in 2026 and compares it against cloud rendering. We use real numbers: current GPU street prices (not MSRP), actual electricity rates, licensing fees pulled directly from vendor pricing pages, and operational overhead based on what we see studios actually spend. No hypotheticals, no cherry-picked scenarios.

Whether you are a 5-person archviz studio rendering 50 hours a month or a 30-person VFX house pushing 500+, the math works differently. The goal here is to give you the framework to run your own numbers accurately — not to tell you what to do.

The True Cost of Building a Render Farm in 2026

Hardware costs depend entirely on whether you are building a CPU-based farm (for V-Ray, Corona, Arnold CPU workflows) or a GPU farm (for Redshift, Octane, V-Ray GPU). Both have gotten more expensive in 2026.

CPU Render Farm (10 Nodes)

A production-grade CPU render node in 2026 typically runs dual Intel Xeon processors with 96-256 GB RAM. For the 128 GB configuration priced below, per-node cost ranges from $4,500 to $6,700.

| Component | Per Node | 10 Nodes |
|---|---|---|
| Dual Xeon E5-2699 V4 (44 cores) | $3,000-$4,500 | $30,000-$45,000 |
| 128 GB ECC RAM | $800-$1,200 | $8,000-$12,000 |
| 1 TB NVMe + case + PSU | $700-$1,000 | $7,000-$10,000 |
| Network switch + cabling | n/a | $500-$1,000 |
| Total | $4,500-$6,700 | $45,500-$68,000 |

GPU Render Farm (5 Nodes)

GPU farms are where 2026 pricing has shifted significantly. The NVIDIA RTX 5090 launched at a $1,999 MSRP, but actual retail prices in April 2026 range from $2,500 to $3,800 due to GDDR7 memory shortages and AI compute demand. Custom and liquid-cooled variants exceed $5,000.

| Component | Per Node (1 GPU) | 5 Nodes |
|---|---|---|
| RTX 5090 (32 GB VRAM) | $2,500-$3,800 | $12,500-$19,000 |
| CPU + motherboard + 64 GB RAM | $2,000-$2,800 | $10,000-$14,000 |
| 1200W PSU + case + cooling | $500-$800 | $2,500-$4,000 |
| Network + shared storage | n/a | $3,000-$5,000 |
| Total | $5,000-$7,400 | $28,000-$42,000 |

For a dual-GPU-per-node configuration — common for Redshift and Octane production work — multiply GPU costs accordingly. A 5-node, 10-GPU farm can exceed $60,000 in hardware alone.
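The GPU table can be reproduced with a few lines of Python, which makes it easy to plug in your own quotes. This is a minimal sketch: the function name and structure are illustrative, and it assumes that only the GPU line item scales when you add a second card per node (in practice a dual-GPU node may also need a larger PSU and more cooling).

```python
# Component price ranges (low, high) in USD, copied from the GPU table above.
GPU_NODE_COMPONENTS = {
    "RTX 5090 (32 GB VRAM)": (2500, 3800),
    "CPU + motherboard + 64 GB RAM": (2000, 2800),
    "1200W PSU + case + cooling": (500, 800),
}
SHARED_INFRA = (3000, 5000)  # network + shared storage, bought once per farm


def farm_hardware_cost(node_count, gpus_per_node=1):
    """Return the (low, high) hardware cost in USD for a GPU farm."""
    low = high = 0
    for name, (lo, hi) in GPU_NODE_COMPONENTS.items():
        # Assumption: only the GPU line scales with gpus_per_node.
        multiplier = gpus_per_node if name.startswith("RTX") else 1
        low += lo * multiplier
        high += hi * multiplier
    return (low * node_count + SHARED_INFRA[0],
            high * node_count + SHARED_INFRA[1])


print(farm_hardware_cost(5))                   # single-GPU nodes
print(farm_hardware_cost(5, gpus_per_node=2))  # dual-GPU nodes
```

With the table's figures, the single-GPU case returns the $28,000-$42,000 range, and the dual-GPU case tops out just above $60,000.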

These are day-one costs. Hardware starts depreciating immediately, with GPU farms losing roughly 25% of their value annually. If the recent pattern holds, an RTX 5090 farm built today will deliver roughly one-third the throughput of whatever ships in 2029; RTX 3090 farms from 2022 now deliver roughly a third of current RTX 5090 performance in V-Ray GPU workloads.
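To see what 25% annual depreciation does to a purchase over time, here is a small sketch. It assumes declining-balance depreciation (each year removes 25% of the remaining value), which is one reasonable reading of the figure; the function name is illustrative.

```python
def residual_value(purchase_price, years, annual_rate=0.25):
    """Remaining resale value after `years`, assuming declining-balance
    depreciation at `annual_rate` per year (an assumption, not a quote)."""
    return purchase_price * (1 - annual_rate) ** years


# Example: the high-end 5-node GPU farm from the table above.
for year in range(5):
    print(year, round(residual_value(42_000, year)))
```

Under this assumption, a little under a third of the purchase price remains after four years.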

The Licensing Trap

Hardware gets all the attention, but licensing is the cost that surprises studios most. Every render engine charges per-node licensing for farm use, and these fees recur annually.

Based on Q1 2026 vendor pricing:

| Engine | Annual License | 10-Node Farm Cost |
|---|---|---|
| V-Ray | $208/year (single); $167-$188/year (volume) | $1,670-$2,080 |
| Corona | $172/year (single); $140-$154/year (volume) | $1,400-$1,720 |
| Redshift | $264/year (individual); $299/year Teams (min 3 seats) | $2,640-$2,990 |
| Arnold | $415/year (includes 5 render nodes) | $830+ (2 packs) |
| Octane | Enterprise pricing required for farm use | Varies |

A 10-node GPU farm running Redshift Teams costs $2,990 per year in render engine licensing alone — before you render a single frame.
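The licensing table scales to any node count with a short helper. This is a sketch using the prices listed above; the function and constant names are illustrative, and it assumes the cheapest volume tier applies to every node at the low end and that Arnold is bought in whole 5-node packs.

```python
import math

# (low, high) USD per node per year, from the table above.
PER_NODE_ANNUAL = {
    "V-Ray": (167, 208),
    "Corona": (140, 172),
    "Redshift": (264, 299),
}
ARNOLD_PACK_PRICE = 415      # USD per year
ARNOLD_NODES_PER_PACK = 5    # render nodes covered by one pack


def annual_license_cost(engine, nodes):
    """Return (low, high) annual render engine licensing cost in USD."""
    if engine == "Arnold":
        # Assumption: packs cannot be split, so round up.
        packs = math.ceil(nodes / ARNOLD_NODES_PER_PACK)
        cost = packs * ARNOLD_PACK_PRICE
        return (cost, cost)
    low, high = PER_NODE_ANNUAL[engine]
    return (low * nodes, high * nodes)


for engine in ("V-Ray", "Corona", "Redshift", "Arnold"):
    print(engine, annual_license_cost(engine, 10))
```

Octane is left out because the table lists it as enterprise pricing only.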

DCC application licensing adds another layer. 3ds Max and Maya permit free command-line rendering on farm nodes. Cinema 4D requires Team Render licenses per node. After Effects requires a full subscription per render node unless using a dedicated render engine.

For a mixed 10-node farm, total software licensing typically runs $2,000 to $5,500 annually — a recurring expense that never stops, whether the farm is rendering or sitting idle.

Hidden Operational Costs

The costs below are real, documented, and consistently underestimated by studios building their first farm.

Electricity and Cooling

Take a 10-node CPU farm drawing 500 W per node at 50% average utilization, priced at the US commercial electricity average of $0.17/kWh:

- Annual electricity: 10 nodes x 500W x 0.5 utilization x 8,760 hours x $0.17/kWh = $3,723

- Cooling overhead (typically 30-40% of compute power cost): $1,100-$1,500

- Total: $4,800-$5,200/year

GPU nodes draw more power. An RTX 5090 at full load pulls 575W for the GPU alone, plus CPU and system power. A 5-node GPU farm at 50% utilization runs approximately $3,500-$4,800/year in electricity and cooling.

These costs scale linearly with utilization — and they apply even during non-productive loads like failed renders, test frames, and software updates.
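The CPU-farm electricity estimate above is easy to rerun with your own wattage, utilization, and local rate. A minimal sketch, with illustrative function names:

```python
HOURS_PER_YEAR = 8760


def annual_power_cost(nodes, watts_per_node, utilization, usd_per_kwh):
    """Electricity cost per year for the compute load alone."""
    kwh = nodes * watts_per_node / 1000 * utilization * HOURS_PER_YEAR
    return kwh * usd_per_kwh


def with_cooling(power_cost, cooling_fraction):
    """Add cooling as a fraction of compute power cost (0.30-0.40 above)."""
    return power_cost * (1 + cooling_fraction)


compute = annual_power_cost(nodes=10, watts_per_node=500,
                            utilization=0.5, usd_per_kwh=0.17)
print(round(compute))                      # compute only
print(round(with_cooling(compute, 0.30)))  # low cooling overhead
print(round(with_cooling(compute, 0.40)))  # high cooling overhead
```

The unrounded results land slightly above the $4,800-$5,200 range quoted above, which rounds the cooling overhead to $1,100-$1,500.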

IT Administration

Someone has to maintain the farm. Software updates, driver patches, troubleshooting failed jobs, managing storage, handling license servers — this is not optional work.

Studios typically report 5-10 hours per week of farm maintenance. At a conservative $50/hour IT rate, that is $13,000-$26,000 per year in labor. For studios without dedicated IT staff, this work falls on artists, which carries an even higher opportunity cost — a senior 3D artist spending time on render node troubleshooting instead of billable project work.

Version Management and Storage

Keeping identical software versions, plugins, and assets synchronized across 10 nodes requires coordination. A V-Ray point release or a Forest Pack update needs testing on one node before rolling out to all — and incompatible versions across nodes produce inconsistent render output.

Fast shared storage (10 GbE NAS or better) costs $3,000-$8,000 upfront plus ongoing maintenance. Without it, artists manually copy project files to each node, which is slow and error-prone.

Total Cost of Ownership: A Real Example

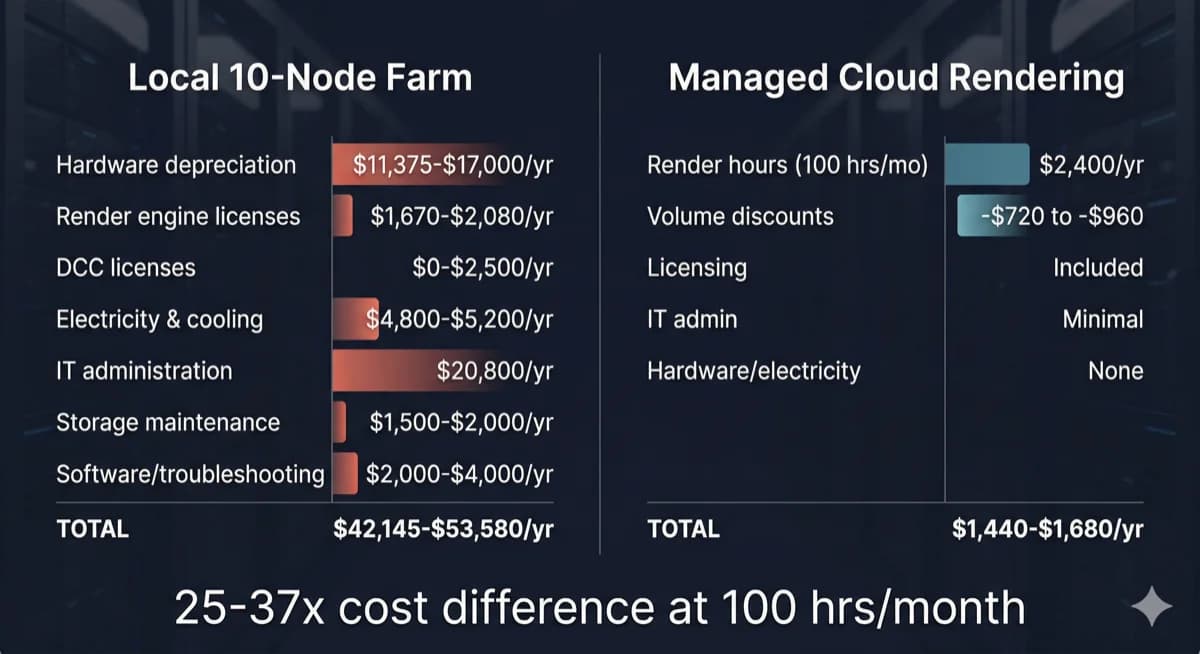

Total cost of ownership comparison between a local 10-node render farm and managed cloud rendering

Consider a 12-person architectural visualization studio rendering 80-120 V-Ray CPU hours monthly — a common profile among our clients.

Option A: Build a 10-Node CPU Farm

| Cost Category | Annual Cost |
|---|---|
| Hardware (depreciated over 4 years) | $11,375-$17,000 |
| V-Ray licenses (10 nodes) | $1,670-$2,080 |
| DCC licenses (if applicable) | $0-$2,500 |
| Electricity and cooling | $4,800-$5,200 |
| IT administration (8 hrs/week) | $20,800 |
| Shared storage maintenance | $1,500-$2,000 |
| Software troubleshooting, updates | $2,000-$4,000 |
| Annual Total | $42,145-$53,580 |

Option B: Managed Cloud Rendering

| Cost Category | Annual Cost |
|---|---|
| Render hours (100 hrs/month x $2/hr average) | $2,400/year |
| Volume discounts (30-40% typical) | -$720 to -$960 |
| Render engine licensing | Included |
| IT administration | Minimal |
| Hardware, electricity, cooling | None |
| Annual Total | $1,440-$1,680 |

The difference is not marginal. At 100 CPU-hours per month, cloud rendering costs roughly 3-4% of what a local farm costs when you include the full TCO. Even if you triple the cloud usage to 300 hours per month, the annual cloud cost ($4,320-$5,040) remains a fraction of the local farm's $42,000-$54,000.
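To run the same comparison with your own figures, the two options can be expressed as a short script. This is a sketch built from the line items in Option A and Option B; the names are illustrative, and the $2/hour rate and 30-40% discount are the averages used in this example, not a quote.

```python
# Annual (low, high) USD line items from Option A.
LOCAL_FARM = {
    "hardware (4-year straight-line)": (45_500 / 4, 68_000 / 4),
    "V-Ray licenses (10 nodes)": (1_670, 2_080),
    "DCC licenses": (0, 2_500),
    "electricity and cooling": (4_800, 5_200),
    "IT administration (8 hrs/week at $50/hr)": (8 * 52 * 50, 8 * 52 * 50),
    "shared storage maintenance": (1_500, 2_000),
    "troubleshooting and updates": (2_000, 4_000),
}


def local_tco(items=LOCAL_FARM):
    """Sum the low and high ends of every line item."""
    low = sum(lo for lo, _ in items.values())
    high = sum(hi for _, hi in items.values())
    return low, high


def cloud_annual(hours_per_month, usd_per_hour=2.0, discount=(0.30, 0.40)):
    """Return (low, high) annual managed-cloud cost after volume discount."""
    gross = hours_per_month * usd_per_hour * 12
    # The larger discount produces the lower bound.
    return gross * (1 - discount[1]), gross * (1 - discount[0])


print(local_tco())
print(cloud_annual(100))
print(cloud_annual(300))
```

Swapping in your own hourly rate, IT hours, or hardware quote changes the gap quickly, which is the point of running it yourself.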

For a detailed per-frame cost breakdown across different render engines and scene complexities, see our render farm cost per frame guide.

But I Already Own My Workstation

This is the most common objection we hear, and it deserves a direct answer.

If you already own render hardware, the purchase cost is a sunk cost — it is spent regardless of what you do next. The question is not "did I waste money?" but "what is the most productive use of this hardware going forward?"

Three factors matter:

Depreciation is ongoing. Your hardware loses value every month. An RTX 3090 purchased in 2022 for $1,500 is worth roughly $400 today and delivers a fraction of current-generation performance. Holding onto aging hardware does not preserve your investment; it only keeps you rendering on equipment that no longer delivers competitive returns.

Opportunity cost is real. Every hour your workstation spends rendering is an hour it cannot be used for modeling, texturing, or scene setup. For a solo artist, this means choosing between productivity and rendering. Cloud rendering eliminates this trade-off entirely — your workstation stays available for interactive work while renders run on dedicated infrastructure.

Maintenance costs continue. Electricity, cooling, software updates, and troubleshooting do not stop because the hardware is paid off. A "free" render node still costs $1,500-$3,000 per year in operational expenses when you account for power, licensing, and the time spent managing it.

The most practical approach for studios with existing hardware: use your local machines for quick test renders and viewport previews, then send final production renders to cloud infrastructure. This hybrid model maximizes the value of hardware you already own while avoiding the scaling limitations and maintenance burden of a full local farm.

When Building Your Own Farm Actually Makes Sense

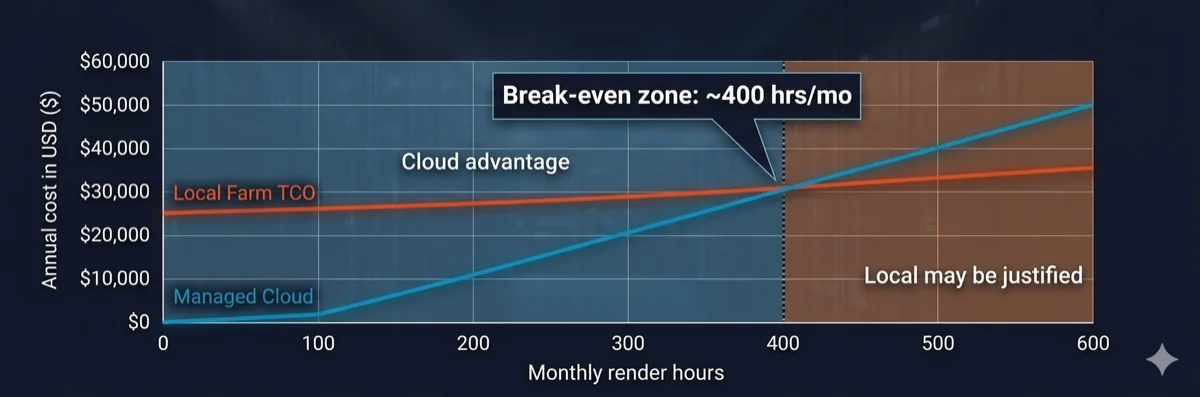

Break-even analysis chart showing cloud rendering is cheaper below 400 monthly render hours

Local render farms are not always the wrong choice. They make economic sense under specific conditions:

- Consistent high volume: Your studio renders 400+ hours per month, every month, with minimal seasonal variation. At this volume, the per-hour cost of owned hardware drops below cloud rates.

- Data security requirements: Compliance mandates (government contracts, NDA-heavy entertainment projects) require all data to remain on-premises with no external transfer.

- Real-time iterative workflows: Rapid-fire test renders where network latency to a cloud farm would slow iteration cycles — though this typically applies to individual workstations, not farm-scale rendering.

- Existing IT infrastructure: You already have dedicated IT staff, rack space, power capacity, and cooling — reducing the marginal cost of adding render nodes.

If fewer than three of these apply to your studio, the numbers almost always favor cloud rendering. For a deeper look at how render farms process jobs and where cloud fits into production pipelines, see our technical guide to how render farms work.
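The "fewer than three" rule of thumb can be written down as a quick self-check. This is only a sketch of the heuristic in this section: the class, field names, and return strings are illustrative, and the 400-hour threshold is the one stated above.

```python
from dataclasses import dataclass


@dataclass
class StudioProfile:
    monthly_render_hours: float
    steady_volume: bool                # minimal seasonal variation
    on_prem_data_mandate: bool         # compliance forbids external transfer
    latency_sensitive_iteration: bool  # rapid-fire test renders dominate
    has_it_infrastructure: bool        # IT staff, rack space, power, cooling


def local_farm_conditions_met(profile):
    """Count how many of the four conditions listed above apply."""
    return sum([
        profile.monthly_render_hours >= 400 and profile.steady_volume,
        profile.on_prem_data_mandate,
        profile.latency_sensitive_iteration,
        profile.has_it_infrastructure,
    ])


def suggested_direction(profile):
    """Apply the rule of thumb: fewer than three conditions favors cloud."""
    if local_farm_conditions_met(profile) < 3:
        return "cloud"
    return "evaluate local farm"


archviz = StudioProfile(100, False, False, False, False)
print(suggested_direction(archviz))
```

Treat the result as a prompt to run the full numbers, not as a verdict.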

Cloud Rendering: What You Actually Pay For

Not all cloud rendering services work the same way. The distinction that matters most is between IaaS (Infrastructure as a Service) platforms and fully managed farms.

IaaS cloud rendering gives you remote access to hardware; in effect, you rent a machine. You install your own software, manage your own licenses, and troubleshoot your own issues. The hourly rate looks lower, but you absorb the operational overhead: license costs, software configuration, job management, and debugging. Studios using IaaS platforms typically report 5-15 hours per month of setup and troubleshooting time, which is the same kind of IT burden a local farm carries, just on someone else's hardware.

Fully managed cloud rendering includes everything: software pre-installed, render engine licenses bundled into the per-hour rate, job management handled by the farm's team, and technical support when scenes fail. The operational overhead that costs local farm operators $13,000-$26,000 per year in IT labor is absorbed by the service.

A third option — a render farm that needs no remote desktop — sidesteps the DIY cost model entirely by rolling hardware, licensing, and orchestration into a per-job fee.

On our farm, we include V-Ray, Corona, Redshift, Arnold, and all major DCC applications in the rendering cost. There are no separate license fees, no software installation steps, and no per-node licensing charges. You upload your scene, we render it, you download the output. For studios comparing options, understanding this distinction is critical — a "$1.50/hour" IaaS rate with $3,000/year in licensing and 10 hours/month of admin time is not cheaper than a "$2.00/hour" fully managed rate with everything included.
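The "$1.50 versus $2.00" comparison above can be checked with a few lines. This sketch assumes 100 render hours per month (the volume used in the TCO example) and values admin time at the $50/hour IT rate used earlier; both are assumptions you should replace with your own numbers, and the function names are illustrative.

```python
def iaas_annual(hours_per_month, usd_per_hour=1.50, licensing_per_year=3000,
                admin_hours_per_month=10, admin_usd_per_hour=50):
    """All-in annual IaaS cost: compute + your own licenses + admin time."""
    compute = hours_per_month * usd_per_hour * 12
    admin = admin_hours_per_month * admin_usd_per_hour * 12
    return compute + licensing_per_year + admin


def managed_annual(hours_per_month, usd_per_hour=2.00):
    """Annual managed-farm cost; licensing and setup are in the rate."""
    return hours_per_month * usd_per_hour * 12


print(iaas_annual(100))     # compute 1,800 + licensing 3,000 + admin 6,000
print(managed_annual(100))
```

Under these assumptions the lower hourly rate ends up several times more expensive once licensing and admin time are counted.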

To understand the differences between managed and self-service models in detail, read our managed vs DIY cloud rendering comparison. For a closer look at how GPU workloads factor into rendering costs, see our cloud rendering overview.

Managed Cloud Farm vs Remote Desktop Rental: The Hidden Math

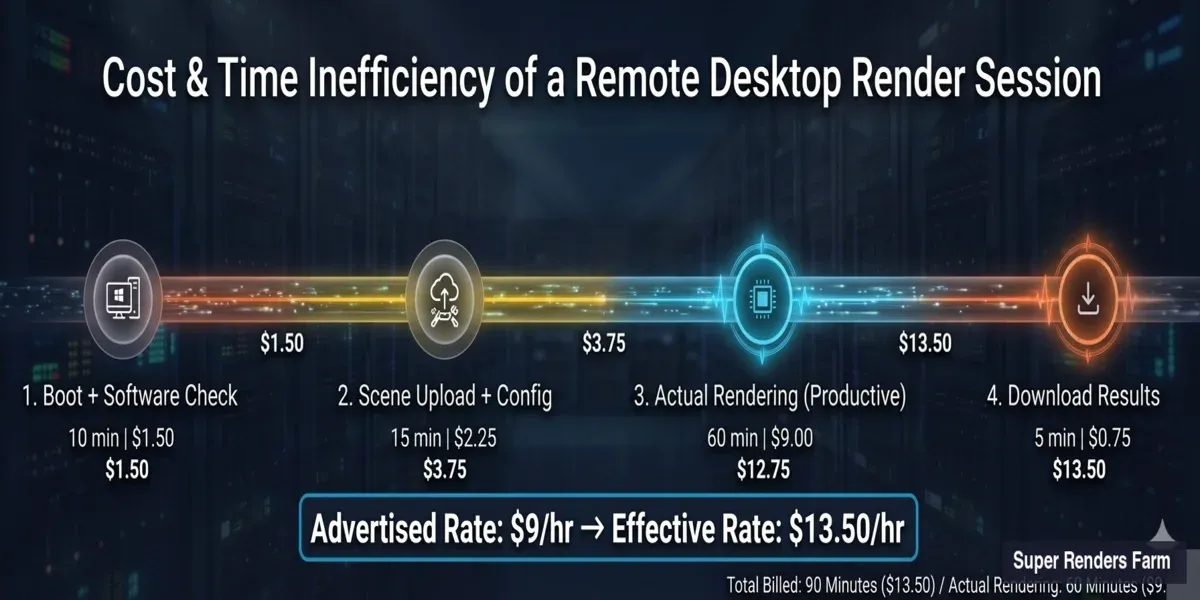

The previous section introduced the IaaS vs managed distinction. But the cost gap between these two models is wider than most comparisons suggest — because standard pricing pages show the advertised hourly rate, not the effective hourly rate. Understanding the difference requires looking at what happens during a typical remote desktop rendering session.

License Seat Consumption

When you render on a remote desktop, your own DCC license occupies that machine. One Autodesk subscription seat running on a cloud VM means one fewer artist working locally. For a studio with five Maya seats, sending two to remote rendering machines leaves three available for interactive work — a 40% capacity reduction during render sessions.

Managed cloud farms handle licensing differently. Render engine licenses (V-Ray, Corona, Redshift, Arnold) are bundled into the service — the farm operates its own license pool. Your local seats remain available for artists to continue modeling, texturing, and scene setup while renders run on the farm's infrastructure.

Billed Idle Time

Remote desktop billing starts when the machine boots, not when rendering starts. A typical session includes non-rendering time that you still pay for:

- Scene upload: Transferring project files, textures, and assets to the remote machine (5-20 minutes depending on project size and connection speed)

- Software configuration: Verifying render engine version, loading plugins, checking scene compatibility (5-15 minutes)

- Post-render download: Pulling finished frames back to your local storage (5-15 minutes)

On a managed farm, you submit a scene file through the farm's upload system. The farm handles file distribution, software configuration, and job queuing internally. You are billed for actual render time — the clock starts when frames begin processing, not when your upload starts.

Setup Overhead Per Session

Most remote desktop platforms require verifying software versions, reinstalling plugins if they have been updated, and configuring render settings each session. Studios report 30-60 minutes of non-rendering setup per session — time that is fully billed at the advertised hourly rate.

This overhead compounds with frequency. A studio running 20 render sessions per month at 30 minutes of setup each spends 10 hours monthly on configuration alone — before a single frame renders.

Effective Hourly Rate: The Real Math

Here is how the advertised rate translates to the effective rate for a typical GPU rendering session on a remote desktop platform:

| Component | Time | Cost at $9/hr |
|---|---|---|
| Machine boot + software check | 10 min | $1.50 |
| Scene upload and configuration | 15 min | $2.25 |
| Actual rendering | 60 min | $9.00 |
| Post-render download | 5 min | $0.75 |
| Total session | 90 min | $13.50 |

Effective hourly rate for actual rendering: $13.50 — 50% higher than the advertised $9/hour. And this calculation assumes everything works on the first attempt. Failed renders, plugin conflicts, or version mismatches add more billed non-productive time.
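The same calculation works for any session shape. A minimal sketch, with illustrative function names and the overhead minutes taken from the table above:

```python
def session_cost(render_min, overhead_min, usd_per_hour):
    """Total billed cost of one remote desktop session."""
    billed_min = render_min + sum(overhead_min.values())
    return billed_min / 60 * usd_per_hour


def effective_hourly_rate(render_min, overhead_min, usd_per_hour):
    """Billed cost divided by the hours actually spent rendering."""
    cost = session_cost(render_min, overhead_min, usd_per_hour)
    return cost / (render_min / 60)


overhead = {
    "machine boot + software check": 10,
    "scene upload and configuration": 15,
    "post-render download": 5,
}
print(session_cost(60, overhead, 9.0))
print(effective_hourly_rate(60, overhead, 9.0))
```

Because the overhead is roughly fixed per session, short renders are hit hardest: the same 30 minutes of overhead on a 30-minute render doubles the effective rate.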

Effective cost breakdown of a remote desktop rendering session — $9 per hour advertised rate becomes $13.50 effective when including boot, upload, and download time

Three Models Side by Side

| Factor | Build Local | Remote Desktop (IaaS) | Managed Cloud Farm |
|---|---|---|---|
| Upfront hardware cost | $28,000-$68,000 | $0 | $0 |
| Monthly cost (100 render hrs) | $3,500-$4,500 (amortized TCO) | $900-$1,350+ (effective rate) | $120-$200 (per-hour rate) |
| Render engine licenses | You buy ($2,000-$5,500/yr) | You buy (your seats) | Included |
| DCC license impact | No impact (dedicated nodes) | Consumes your seats | No impact (farm's licenses) |
| IT overhead per month | 20-40 hours | 10-20 hours | Minimal (submit and download) |
| Setup time per session | One-time (but ongoing maintenance) | 30-60 min per session | None (pre-configured) |
| Hardware control | Full | Partial (provider's specs) | None (provider manages) |
| Scalability | Buy more hardware | Rent more VMs | Automatic (farm scales) |
| Suited for | Studios with 400+ hrs/month, dedicated IT staff, data security mandates | Studios with DevOps expertise, custom pipeline requirements, 50+ node scale | Studios under 20 people, no IT staff, variable rendering volume |

The right choice depends on your team size, technical capacity, and rendering volume. Each model serves a different operational profile — there is no universally correct answer.

For studios that have decided on cloud rendering and need to compare specific services, our render farm evaluation guide provides a structured checklist covering eight key criteria.

Decision Framework

| Factor | Local Farm Favored | Cloud Rendering Favored |
|---|---|---|
| Monthly render hours | 400+ consistent | Under 300 or variable |
| Team size | 20+ with dedicated IT | 3-15 without IT staff |
| Utilization pattern | Steady, predictable year-round | Seasonal, project-based |
| Data requirements | Air-gapped, on-premises mandated | Standard NDA sufficient |
| Budget model | Large CapEx acceptable | Prefer OpEx, pay-per-use |
| Hardware refresh | Accept 3-4 year depreciation cycles | Want current-generation hardware |
| Pipeline staffing | Dedicated TD or IT admin available | No pipeline engineer on team |
| Render engine licensing | Already own perpetual licenses | Prefer licensing included |

Studios rendering 150-300 hours monthly with seasonal variation often find a hybrid model works well: local nodes handle iterative test renders while cloud infrastructure scales for final production deadlines. For more on evaluating cloud render farm options, see our guide to choosing a cloud render farm.

To understand how different cloud render farms charge — and how to calculate effective cost versus advertised rates across six pricing models — see our render farm pricing models comparison.

Often-Missed Costs: Summary Table (10-Node Farm)

| Cost Category | Annual Range | Applies To |
|---|---|---|
| Render engine licenses | $1,670-$2,990 | Local + IaaS |
| DCC licenses (render nodes) | $0-$2,500 | Local + IaaS |
| Electricity and cooling | $4,800-$10,000 | Local only |
| IT administration labor | $13,000-$26,000+ | Local + IaaS |
| Plugin/version management | $2,000-$5,000 | Local + IaaS |
| Idle capacity waste (60-75%) | 60-75% of hardware value | Local only |
| Hardware depreciation | ~25% annually (3-4 year cycle) | Local only |

Total annual TCO for a 10-node local farm: $42,000-$54,000+ — with IT labor and hardware depreciation as the two largest line items. Cloud rendering at equivalent usage (100 hrs/month) runs $1,440-$2,400/year on a fully managed platform.

View our pricing page for current rates and volume discount tiers.

FAQ

Q: How much does it cost to build a 10-node render farm in 2026? A: A 10-node CPU farm (dual Xeon, 128 GB RAM per node) costs $45,500-$68,000 in hardware. A 5-node GPU farm with RTX 5090 cards costs $28,000-$42,000 at current retail prices. These figures do not include ongoing costs like licensing ($2,000-$5,500/year), electricity ($4,800-$10,000/year), or IT labor ($13,000-$26,000/year).

Q: What is the biggest hidden cost of running a local render farm? A: IT administration time. Studios consistently underestimate the 5-10 hours per week required for software updates, driver patches, job troubleshooting, storage management, and license server maintenance. At $50/hour, that is $13,000-$26,000 annually — often exceeding the cost of electricity and licensing combined.

Q: At what point does building a local render farm break even versus cloud rendering? A: For most studios, the break-even point is around 400 consistent monthly render hours when comparing against a fully managed cloud farm. Below that threshold, cloud rendering is substantially cheaper when you include the full total cost of ownership — hardware depreciation, licensing, electricity, cooling, and IT labor.

Q: Do render engines require separate licenses for each farm node? A: Yes. V-Ray, Corona, Redshift, Arnold, and Octane all require licensing for render farm use. Annual costs range from $140 to $299 per node for V-Ray, Corona, and Redshift, depending on the engine and volume pricing tier; Arnold costs $415 per year for a pack that includes 5 render nodes, and Octane requires enterprise pricing. This is a recurring annual expense that applies whether the node is rendering or idle.

Q: Is cloud rendering more expensive than using my own GPU workstation? A: For occasional rendering (under 200 hours per month), cloud rendering is significantly cheaper when you account for total cost of ownership. A single RTX 5090 workstation costs $5,000-$8,000 to build, plus $1,500-$3,000 annually in electricity, licensing, and maintenance. Cloud rendering at 50 hours per month costs roughly $1,200/year on a managed platform with all licensing included.

Q: What is the difference between IaaS cloud rendering and fully managed cloud rendering? A: IaaS gives you access to remote hardware — you install software, manage licenses, and troubleshoot issues yourself. Fully managed farms include all software, render engine licenses, and technical support in the per-hour rate. The hourly rate for IaaS looks lower, but the operational overhead (licensing, configuration, debugging) typically adds $3,000-$8,000 per year in hidden costs.

Q: How fast does render farm hardware depreciate? A: GPU render hardware depreciates roughly 25% annually, with a practical lifespan of 3-4 years before performance falls significantly behind current-generation alternatives. An RTX 3090 farm built in 2022 now delivers approximately one-third the throughput of current RTX 5090 nodes in GPU rendering workloads like V-Ray GPU and Redshift.

Q: Can I use a hybrid approach — local hardware plus cloud rendering? A: Yes, and many studios find this the most practical model. Use local workstations for quick test renders and viewport previews, then send final production frames to a cloud farm. This approach keeps your workstation available for interactive work, avoids the capital expense of building a full farm, and lets you scale rendering capacity for deadlines without maintaining idle hardware year-round.

Q: Is renting a remote desktop cheaper than a managed render farm? A: The advertised hourly rate for remote desktop rental is typically lower, but the effective cost is higher. A $9/hour remote desktop session includes billed idle time for scene upload, software setup, and post-render download, which turns a 60-minute render into a 90-minute billed session at an effective rate of $13.50 per rendering hour. Managed farms bill for actual render time only, include all software licenses, and require no per-session configuration. For studios rendering under 300 hours per month, the total cost of a managed farm is typically lower than remote desktop rental when you factor in licensing, setup overhead, and lost DCC license seats.

Q: Do I need my own software licenses to render on a cloud render farm? A: It depends on the type of cloud rendering service. On a remote desktop or IaaS platform, yes — your own DCC and render engine licenses occupy the remote machine, reducing seats available for local artists. On a fully managed cloud render farm, no — the farm provides its own license pool for supported render engines (V-Ray, Corona, Redshift, Arnold, and others). Your local licenses remain available for interactive work while the farm handles rendering with its own software stack.

About Alice Harper

Blender and V-Ray specialist. Passionate about optimizing render workflows, sharing tips, and educating the 3D community to achieve photorealistic results faster.