Best GPUs for 3D Rendering in 2026: A Practical Tier List for Artists


Introduction

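Choosing a GPU for 3D rendering in 2026 is far more complicated than picking the card with the highest core count. VRAM capacity, render engine compatibility, driver stability, and your own workflow all matter. The "right" GPU for a studio doing architectural visualization in V-Ray can be completely different from the right GPU for a motion designer working in Redshift.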

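We have run GPU rendering infrastructure for more than ten years, and the question artists ask us most often isn't about raw TFLOPS. It's whether a particular card can handle their scene without running out of memory. This guide reflects what we have observed across thousands of production jobs: which GPUs reliably handle real workloads, where VRAM limits actually bite, and how different render engines interact with specific hardware.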

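This is not an affiliate review. We don't sell GPUs. What we can offer is operational data from running a mixed GPU fleet, including RTX 5090 cards, at scale on our GPU rendering infrastructure, combined with publicly available benchmarks and engine documentation.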

How GPU Rendering Works (A Brief Overview)

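GPU rendering uses the massively parallel architecture of a graphics card to trace light paths simultaneously. Where a CPU processes rays on 16 to 64 cores, a modern GPU puts thousands of CUDA cores (NVIDIA) or Stream Processors (AMD) on the same task. Because path tracing is inherently parallel, this translates directly into speed.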

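Three core types matter for rendering in 2026:

- CUDA/shader cores: handle general ray-tracing computation

- RT cores: dedicated hardware for ray-triangle intersection tests (BVH traversal)

- Tensor cores: accelerate AI denoising, now standard in production pipelines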

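The practical result: a single RTX 5090 can render in just 2–4 minutes a frame that would take a dual-Xeon workstation 15–20 minutes. But that speed advantage comes with a hard constraint: the entire scene (geometry, textures, displacement, light caches) must fit in the GPU's VRAM. This is what makes choosing a GPU for rendering fundamentally different from choosing one for gaming.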

For an in-depth comparison of the two approaches, see our GPU Rendering vs CPU Rendering guide.

GPU Tier List for 3D Rendering (2026)

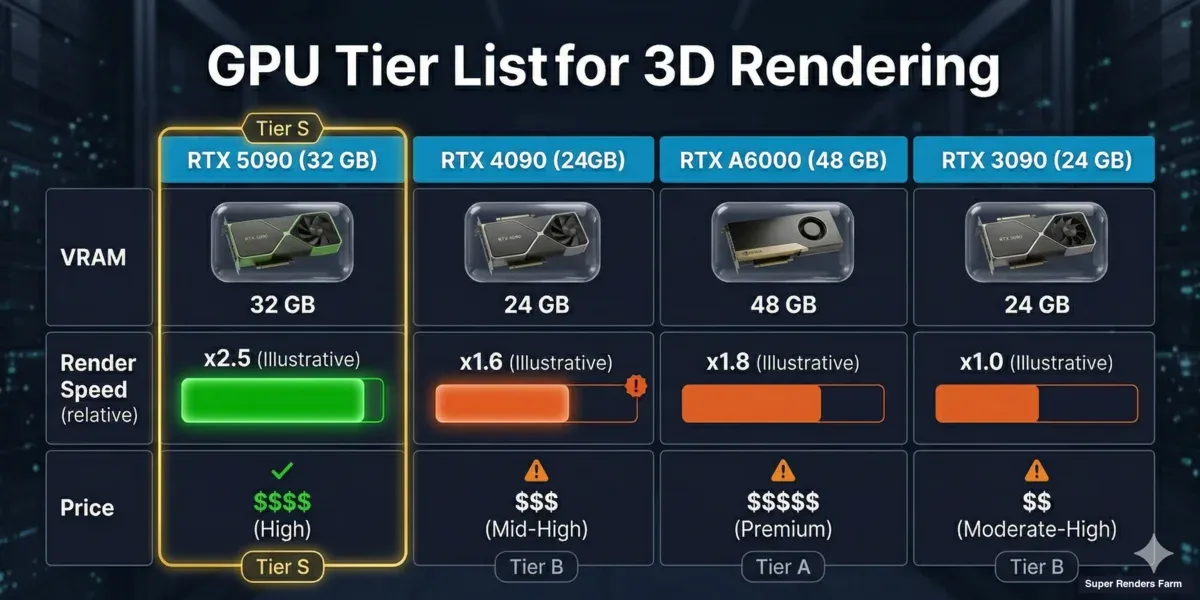

Based on production performance data, driver maturity, and VRAM per dollar, here is how current GPUs rank for professional 3D rendering.

S Tier: Production Workhorses

| GPU | VRAM | CUDA Cores | RT Cores | TDP | Market Price (USD) | Best For |
|---|---|---|---|---|---|---|
| NVIDIA RTX 5090 | 32 GB GDDR7 | 21,760 | 170 | 575W | $1,999 | Heavy production rendering, large scenes |
| NVIDIA RTX 4090 | 24 GB GDDR6X | 16,384 | 128 | 450W | $1,599-1,799 | Production rendering, excellent price/VRAM ratio |

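The RTX 5090 is currently the top consumer card for GPU rendering. Its 32 GB of GDDR7 handles scenes that can overflow a 24 GB card: archviz interiors packed with 4K textures, moderate vegetation scatters, and multi-light setups. In most production scenarios, the jump from 24 GB (4090) to 32 GB (5090) matters more than the gain in raw compute.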

The RTX 4090 still offers outstanding value. Its 24 GB handles most production scenes, and its CUDA core count delivers rendering performance that required a workstation card two generations ago.

GPU comparison chart: VRAM and 3D rendering performance tiers for the RTX 5090, RTX 4090, RTX A6000, and RTX 3090

A Tier: Professional / Multi-GPU

| GPU | VRAM | CUDA Cores | RT Cores | TDP | Market Price (USD) | Best For |
|---|---|---|---|---|---|---|
| NVIDIA RTX A6000 | 48 GB GDDR6 | 10,752 | 84 | 300W | $4,200-4,600 | Maximum-VRAM scenes, VFX, simulation |
| NVIDIA RTX 5080 | 16 GB GDDR7 | 10,752 | 84 | 360W | $999 | Mid-budget production, mid-size scenes |
| NVIDIA RTX 4080 SUPER | 16 GB GDDR6X | 10,240 | 80 | 320W | $979-1,099 | Similar to the RTX 5080, strong on the used market |

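The A6000 exists for one reason: 48 GB of VRAM. Its raw rendering speed per dollar trails consumer cards, but when a scene needs 30 GB or more of GPU memory, leaving a 32 GB card with no headroom, it is the only single-card option. VFX studios working with heavy simulation caches and displacement-heavy environments need that headroom routinely.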

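The RTX 5080 and 4080 SUPER sit at an interesting crossover point. 16 GB is enough for product visualization, simple interiors, and motion design, but tight for archviz exteriors or texture-heavy work. Artists who render exclusively on the GPU should think hard about whether 16 GB will stay sufficient as texture resolution and scene complexity grow.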

B Tier: Entry Production / Look-Dev

| GPU | VRAM | CUDA Cores | RT Cores | TDP | Market Price (USD) | Best For |
|---|---|---|---|---|---|---|
| NVIDIA RTX 4070 Ti SUPER | 16 GB GDDR6X | 8,448 | 66 | 285W | $749-829 | Budget production, look-dev iteration |
| NVIDIA RTX 3090 Ti | 24 GB GDDR6X | 10,752 | 84 | 450W | $800-1,000 (used) | Used-market value, excellent price per GB of VRAM |
| NVIDIA RTX 3090 | 24 GB GDDR6X | 10,496 | 82 | 350W | $650-850 (used) | Same 24 GB as the 3090 Ti at a lower used price |

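The RTX 3090 and 3090 Ti deserve special mention. A 24 GB card for under $1,000 on the used market offers exceptional VRAM per dollar for rendering. Raw compute is slower than the current generation (roughly 60–70% of an RTX 4090 in Redshift), but scene compatibility, meaning whether the scene fits in VRAM at all, often matters more than raw speed in production. Many studios run 3090s specifically because 24 GB lets them render scenes that overflow current-generation 16 GB cards.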
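To compare VRAM per dollar across the tiers yourself, here is a minimal Python sketch. It takes the prices from the tables above, assumes the midpoint of each quoted range is a fair price (used prices in particular vary), and deliberately ignores compute speed.

```python
# Price ranges (USD) and VRAM (GB) as listed in the tier tables above.
# GPU prices move quickly; these are the article's snapshot, not live quotes.
CARDS = {
    "RTX 5090": (1999, 1999, 32),
    "RTX 4090": (1599, 1799, 24),
    "RTX A6000": (4200, 4600, 48),
    "RTX 5080": (999, 999, 16),
    "RTX 4080 SUPER": (979, 1099, 16),
    "RTX 4070 Ti SUPER": (749, 829, 16),
    "RTX 3090 Ti (used)": (800, 1000, 24),
    "RTX 3090 (used)": (650, 850, 24),
}


def dollars_per_gb(low: float, high: float, vram_gb: float) -> float:
    """Price per GB of VRAM, using the midpoint of the quoted price range."""
    return (low + high) / 2 / vram_gb


def rank_by_vram_value(cards: dict[str, tuple[float, float, float]]) -> list[tuple[str, float]]:
    """Return (card, $/GB) pairs sorted from cheapest VRAM to most expensive."""
    scored = [(name, dollars_per_gb(lo, hi, gb)) for name, (lo, hi, gb) in cards.items()]
    return sorted(scored, key=lambda pair: pair[1])


if __name__ == "__main__":
    for name, cost in rank_by_vram_value(CARDS):
        print(f"{name:<20} ${cost:6.2f}/GB")
```

With these numbers the used RTX 3090 comes out cheapest at about $31 per GB and the A6000 most expensive at about $92 per GB, which is the trade-off described above. Pair it with the benchmark table if throughput matters to you as well.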

C Tier: Learning / Light Production

| GPU | VRAM | Notes |
|---|---|---|
| NVIDIA RTX 4060 Ti 16 GB | 16 GB | Reasonable VRAM, slow compute; fine for learning Redshift/Octane |
| NVIDIA RTX 4060 Ti 8 GB | 8 GB | Not enough VRAM for production GPU rendering |
| AMD Radeon RX 7900 XTX | 24 GB | Limited render engine support (mainly Cycles via HIP) |

A note on AMD: the Radeon RX 7900 XTX offers 24 GB at an attractive price, but render engine support remains limited. Only Blender Cycles (via HIP), ProRender, and a handful of smaller engines support AMD GPUs. Redshift, Octane, and V-Ray GPU are NVIDIA-only (CUDA/OptiX). If your pipeline is Blender-centric, AMD can be an option. Otherwise, NVIDIA remains the practical choice for GPU rendering in 2026.

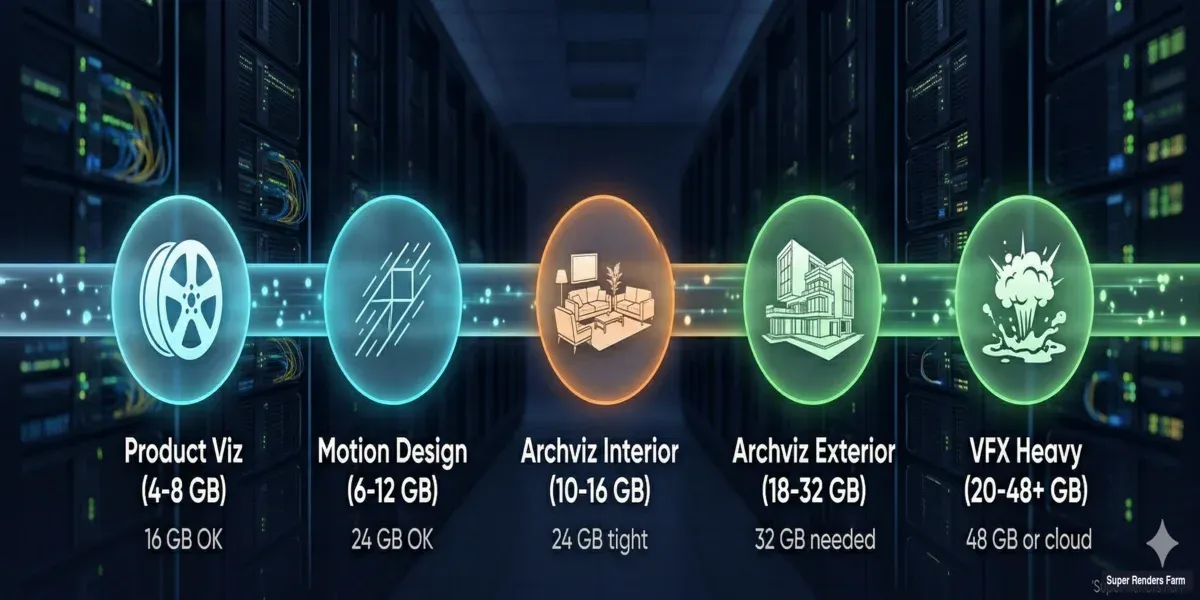

VRAM Requirements by Use Case

VRAM is the single most important factor when choosing a GPU for rendering. Based on our production data, here is how much VRAM each workflow actually demands.

| Use Case | Typical VRAM Usage | Minimum GPU | Recommended GPU |
|---|---|---|---|
| Product visualization (single object, studio lighting) | 4–8 GB | RTX 4070 Ti SUPER (16 GB) | RTX 4090 (24 GB) |
| Archviz interior (furnished rooms, 4K textures) | 10–16 GB | RTX 4090 (24 GB) | RTX 5090 (32 GB) |
| Archviz exterior (vegetation, multiple buildings) | 18–32 GB | RTX 5090 (32 GB) | RTX A6000 (48 GB) or cloud |
| Motion design (stylized, moderate geometry) | 6–12 GB | RTX 4080 (16 GB) | RTX 4090 (24 GB) |
| VFX (simulation caches, heavy displacement) | 20–48+ GB | RTX A6000 (48 GB) | Multi-GPU or cloud |
| Animation (frame by frame, consistent scene) | Varies by frame | Sized to the scene's peak VRAM | Peak + 25% headroom |

VRAM requirements diagram: GPU memory needed for product visualization, archviz interiors and exteriors, and VFX rendering
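The sizing rule behind this table can be sketched in a few lines: measure your scene's peak VRAM, add headroom, and pick the smallest card that covers it. This is a minimal sketch; the 25% default comes from the animation row, and applying it to every workflow is an assumption you can tune through the `headroom` parameter.

```python
# VRAM (GB) of the NVIDIA cards covered in this article.
CARD_VRAM_GB = {
    "RTX 4070 Ti SUPER": 16,
    "RTX 4080 SUPER": 16,
    "RTX 5080": 16,
    "RTX 3090": 24,
    "RTX 4090": 24,
    "RTX 5090": 32,
    "RTX A6000": 48,
}


def required_vram_gb(peak_scene_gb: float, headroom: float = 0.25) -> float:
    """Peak scene VRAM plus a safety margin (default +25%, as in the animation row)."""
    return peak_scene_gb * (1 + headroom)


def smallest_fitting_cards(peak_scene_gb: float, headroom: float = 0.25) -> list[str]:
    """Cards with the least VRAM that still cover the scene plus headroom.

    An empty list means no single card fits. Adding GPUs does not help,
    because each card must hold the whole scene on its own."""
    need = required_vram_gb(peak_scene_gb, headroom)
    fitting = {name: gb for name, gb in CARD_VRAM_GB.items() if gb >= need}
    if not fitting:
        return []
    smallest = min(fitting.values())
    return sorted(name for name, gb in fitting.items() if gb == smallest)


if __name__ == "__main__":
    for peak in (8, 16, 22, 40):
        print(peak, "GB peak ->", smallest_fitting_cards(peak) or "cloud / scene optimization")
```

A 16 GB peak, the top of the archviz interior range, needs 20 GB with headroom and lands on the 24 GB cards, which matches the table's choice of the RTX 4090 as the minimum for interiors.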

What actually consumes VRAM:

| Asset Type | Approximate VRAM Cost |
|---|---|
| 4K texture (GPU-compressed) | 16–32 MB |
| 4K texture (uncompressed) | 64 MB |
| 1 million polygons | 40–80 MB |
| Displacement map (dense subdivision) | 200–500 MB per object |
| Volumetric cache (smoke/fire) | 500 MB–4 GB |
| Forest Pack / scatter (10 million instances) | 2–8 GB |
| HDRI environment (8K) | 128–256 MB |

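A scene with 80 compressed 4K textures, 5 million polygons, two displaced objects, and an 8K HDRI uses roughly 6–10 GB before the render engine's own overhead (BVH structures, light caches, denoiser buffers) is added. That's manageable on 16 GB. Add Forest Pack vegetation with 5 million instances and you reach 15–20 GB. Suddenly the 16 GB card fails and you need at least 24 GB.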
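The texture and HDRI rows in the asset table follow from simple arithmetic: width × height × bytes per pixel. A minimal sketch, assuming an 8K HDRI means 8192×4096 (2:1 equirectangular) and using BC1/BC7 as representative GPU block-compression formats; how a particular render engine actually stores textures varies.

```python
MIB = 1024 * 1024

# Bytes per pixel for a few common GPU texture layouts.
# BC1/BC7 are GPU block-compression formats used here as examples only.
BYTES_PER_PIXEL = {
    "RGBA8 (uncompressed)": 4.0,
    "BC7 (16 bytes per 4x4 block)": 1.0,
    "BC1 (8 bytes per 4x4 block)": 0.5,
    "RGBA16F (half-float HDR)": 8.0,
}


def texture_mib(width: int, height: int, bytes_per_pixel: float, mipmaps: bool = False) -> float:
    """Approximate GPU memory for one 2D texture, in MiB.

    A full mip chain adds about one third on top of the base level
    (1 + 1/4 + 1/16 + ... approaches 4/3)."""
    base = width * height * bytes_per_pixel
    total = base * 4 / 3 if mipmaps else base
    return total / MIB


if __name__ == "__main__":
    for fmt, bpp in BYTES_PER_PIXEL.items():
        print(f"4K {fmt}: {texture_mib(4096, 4096, bpp):.1f} MiB "
              f"({texture_mib(4096, 4096, bpp, mipmaps=True):.1f} MiB with mips)")
    print(f"8K x 4K HDRI, RGBA16F: {texture_mib(8192, 4096, 8.0):.0f} MiB")
```

An uncompressed 4K RGBA8 texture comes to exactly 64 MiB and a BC7 one to 16 MiB (about 21 MiB with mips), in line with the table. The 128–256 MB HDRI range corresponds to 4 to 8 bytes per pixel at 8192×4096.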

For a detailed look at how VRAM limits affect complex scenes, see our RTX 5090 VRAM limits analysis.

Render Engine GPU Compatibility (2026)

Not every GPU works with every render engine. This table reflects current production compatibility.

| Render Engine | NVIDIA CUDA | NVIDIA OptiX (RT Cores) | AMD HIP | Intel Arc | Multi-GPU | Out-of-Core (RAM Fallback) |
|---|---|---|---|---|---|---|
| Redshift 3.6+ | Full | Full | No | No | Yes (near-linear scaling) | Yes (with slowdown) |
| Octane 2024+ | Full | Full | No | No | Yes | Limited |
| V-Ray GPU 7 | Full | Full | No | No | Yes | Hybrid CPU+GPU mode |
| Arnold GPU 7.3+ | Full | Full | No | No | Yes | Unified memory model |
| Cycles (Blender 4.x) | Full | Full | Full (HIP) | Partial (oneAPI) | Yes | No |
| Unreal Engine 5.4+ (Path Tracer) | Full | Full | No | No | Limited | No |
| D5 Render | Full | Full | No | No | No | No |
| Enscape | Full | Full | No | No | No | No |
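If you are weighing a non-NVIDIA card, it helps to check your whole pipeline against the table rather than one engine at a time. A minimal sketch that transcribes the back-end columns into a lookup; the vendor-to-back-end mapping (Intel Arc treated as oneAPI, as the Cycles row indicates) is this sketch's own simplification.

```python
FULL, PARTIAL, NONE = "full", "partial", "none"

# GPU back-end support per engine, transcribed from the table above.
ENGINE_BACKENDS = {
    "Redshift 3.6+": {"cuda": FULL, "optix": FULL, "hip": NONE, "oneapi": NONE},
    "Octane 2024+": {"cuda": FULL, "optix": FULL, "hip": NONE, "oneapi": NONE},
    "V-Ray GPU 7": {"cuda": FULL, "optix": FULL, "hip": NONE, "oneapi": NONE},
    "Arnold GPU 7.3+": {"cuda": FULL, "optix": FULL, "hip": NONE, "oneapi": NONE},
    "Cycles (Blender 4.x)": {"cuda": FULL, "optix": FULL, "hip": FULL, "oneapi": PARTIAL},
    "Unreal Engine 5.4+ (Path Tracer)": {"cuda": FULL, "optix": FULL, "hip": NONE, "oneapi": NONE},
    "D5 Render": {"cuda": FULL, "optix": FULL, "hip": NONE, "oneapi": NONE},
    "Enscape": {"cuda": FULL, "optix": FULL, "hip": NONE, "oneapi": NONE},
}

# Which back ends each GPU vendor's cards can use (simplified).
VENDOR_BACKENDS = {
    "nvidia": ("cuda", "optix"),
    "amd": ("hip",),
    "intel": ("oneapi",),
}


def blocked_engines(vendor: str, pipeline: list[str]) -> list[str]:
    """Engines in `pipeline` with no listed support on this vendor's GPUs."""
    backends = VENDOR_BACKENDS[vendor]
    return [
        engine for engine in pipeline
        if all(ENGINE_BACKENDS[engine][b] == NONE for b in backends)
    ]


if __name__ == "__main__":
    pipeline = ["Cycles (Blender 4.x)", "Redshift 3.6+", "V-Ray GPU 7"]
    for vendor in VENDOR_BACKENDS:
        print(vendor, "->", blocked_engines(vendor, pipeline) or "all supported")
```

A partial entry counts as usable here; if your pipeline depends on a feature the partial back end lacks, treat it as blocked instead.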

Key observations:

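- NVIDIA's lead is structural. Every major GPU render engine supports CUDA and OptiX, while AMD support in production rendering is effectively Blender-only. Engine developers prioritize the hardware their users actually own, so this is unlikely to change soon. (We are an official Maxon render partner for Redshift and an official Chaos render partner for V-Ray, and we run both engines on our GPU fleet every day.)

- OptiX matters. RT core acceleration through OptiX delivers a 20–40% speedup over pure CUDA in supported engines. Every RTX card (20 series and later) has RT cores, but newer generations have stronger ones: the RTX 5090's fourth-generation RT cores show measurable gains in ray-tracing-heavy scenes.

- Multi-GPU scaling varies. Redshift scales almost linearly (1.8–1.9x with two GPUs). Octane scales well for final renders but not in the viewport. V-Ray GPU and Arnold GPU support multiple GPUs, but most workloads see diminishing returns beyond two cards. To scale past 2–4 GPUs, cloud rendering is more practical because it avoids PCIe bandwidth bottlenecks, power constraints, and up-front hardware costs.

- Out-of-core is a safety net, not a workflow. Redshift's out-of-core rendering prevents crashes when a scene exceeds VRAM, but performance drops by 3–8x. Don't choose a GPU based on out-of-core capability; size it so your typical scenes fit in VRAM.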
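The two scaling effects above can be combined into a rough frame-time estimate. This is a hypothetical model, not how any engine actually schedules work: each GPU beyond the first adds a fixed fraction of a card's speed (0.85 by default, chosen to land inside Redshift's quoted 1.8–1.9x for two cards), and a scene that exceeds one card's VRAM takes an out-of-core penalty (5x by default, inside the quoted 3–8x range). Engines without out-of-core support would simply fail in that case.

```python
def multi_gpu_speedup(num_gpus: int, extra_gpu_efficiency: float = 0.85) -> float:
    """Hypothetical model: the first GPU counts fully, each extra one adds
    `extra_gpu_efficiency` of a card. 0.85 gives 1.85x for two GPUs."""
    if num_gpus < 1:
        raise ValueError("need at least one GPU")
    return 1 + (num_gpus - 1) * extra_gpu_efficiency


def estimated_frame_minutes(
    single_gpu_minutes: float,
    num_gpus: int,
    scene_gb: float,
    vram_per_gpu_gb: float,
    out_of_core_penalty: float = 5.0,
    extra_gpu_efficiency: float = 0.85,
) -> float:
    """Rough frame time for a setup of identical GPUs.

    VRAM is not pooled across cards, so the fit check uses one card's VRAM."""
    minutes = single_gpu_minutes / multi_gpu_speedup(num_gpus, extra_gpu_efficiency)
    if scene_gb > vram_per_gpu_gb:
        # Assumes the engine supports out-of-core (e.g. Redshift); others would fail.
        minutes *= out_of_core_penalty
    return minutes


if __name__ == "__main__":
    # Hypothetical 20-minute frame on one 24 GB card.
    print(estimated_frame_minutes(20, 1, scene_gb=18, vram_per_gpu_gb=24))  # scene fits
    print(estimated_frame_minutes(20, 2, scene_gb=18, vram_per_gpu_gb=24))  # two cards
    print(estimated_frame_minutes(20, 2, scene_gb=30, vram_per_gpu_gb=24))  # out-of-core
```

The third case illustrates the last bullet: two cards running out-of-core are still much slower than one card that fits the scene.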

Benchmark Comparison: Real-World Rendering Performance

These benchmarks compare raw rendering throughput on standardized scenes. All figures combine public benchmark suites (Blender Benchmark, OctaneBench, Redshift vendor data) with our internal testing.

| GPU | Blender Classroom (samples/min) | OctaneBench 2024 | Redshift (archviz interior, relative) | V-Ray GPU (V-Ray Benchmark, vraymarks) |
|---|---|---|---|---|
| RTX 5090 | 1,850 | 982 | 1.00x (baseline) | 3,420 |
| RTX 4090 | 1,420 | 756 | 0.77x | 2,640 |
| RTX 5080 | 1,050 | 548 | 0.57x | 1,920 |
| RTX 4080 SUPER | 980 | 512 | 0.53x | 1,810 |
| RTX 3090 Ti | 920 | 482 | 0.50x | 1,680 |
| RTX 3090 | 870 | 458 | 0.47x | 1,590 |
| RTX A6000 | 780 | 412 | 0.42x | 1,440 |
| RTX 4070 Ti SUPER | 740 | 392 | 0.40x | 1,380 |

Important context for these numbers:

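- Benchmarks measure compute speed on scenes that fit in VRAM. They don't tell you whether your actual scenes will fit.

- The RTX A6000 scores lower than the consumer cards on raw compute, but it can render scenes that crash every other card in this list. VRAM capacity doesn't show up in benchmarks.

- The RTX 5090's roughly 30% lead over the 4090 is consistent across engines, which suggests an architectural improvement rather than engine-specific optimization.

- Real production performance can differ significantly from benchmarks. Displacement-heavy scenes lean harder on RT cores, scenes with complex shaders on CUDA cores, and texture-heavy scenes on memory bandwidth.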
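The consistency claim in the third bullet is easy to check against the table. A minimal sketch that normalizes every benchmark column to the RTX 5090, using the scores exactly as listed above:

```python
# Scores from the benchmark table above (higher is better in every column).
COLUMNS = ("Blender Classroom", "OctaneBench 2024", "Redshift (relative)", "V-Ray GPU")
SCORES = {
    "RTX 5090": (1850, 982, 1.00, 3420),
    "RTX 4090": (1420, 756, 0.77, 2640),
    "RTX 5080": (1050, 548, 0.57, 1920),
    "RTX 4080 SUPER": (980, 512, 0.53, 1810),
    "RTX 3090 Ti": (920, 482, 0.50, 1680),
    "RTX 3090": (870, 458, 0.47, 1590),
    "RTX A6000": (780, 412, 0.42, 1440),
    "RTX 4070 Ti SUPER": (740, 392, 0.40, 1380),
}


def relative_to(reference: str, gpu: str) -> list[float]:
    """Each benchmark score of `gpu` divided by the reference card's score."""
    return [g / r for g, r in zip(SCORES[gpu], SCORES[reference])]


if __name__ == "__main__":
    print(f"{'':<18}", " | ".join(COLUMNS))
    for gpu in SCORES:
        ratios = relative_to("RTX 5090", gpu)
        print(f"{gpu:<18}", "  ".join(f"{x:.2f}" for x in ratios))
```

For the RTX 4090 every column lands between 0.76 and 0.78 of the RTX 5090, so the 5090 is about 1.30x faster in all four benchmarks. Normalizing per column only compares cards within each benchmark; it says nothing about VRAM fit.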

Cloud GPU Rendering vs. Buying Hardware

At some point, the GPU you need costs more than is reasonable to own, or a deadline demands more rendering power than a single workstation can deliver. That's where cloud GPU rendering comes in.

Buying makes sense when:

- You render daily and can keep the GPU busy 4+ hours a day

- Your scenes fit comfortably in a single GPU's VRAM

- Immediate access matters (no upload time, no queue)

- You have an up-front budget of $1,500–5,000 per workstation GPU

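Cloud GPU rendering makes sense when:

- You need parallel rendering across many GPUs for a deadline

- Your scenes exceed your local GPU's VRAM (cloud farms offer higher-VRAM options)

- Your rendering is intermittent (bursts around deadlines, idle otherwise)

- You need current-generation hardware without capital expenditure

- A total-cost-of-ownership analysis based on your usage pattern favors the cloud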

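Our farm runs RTX 5090 GPUs (32 GB VRAM) for GPU rendering jobs. For artists whose scenes exceed 24 GB, the limit of a local RTX 4090, cloud rendering on 32 GB cards provides headroom without a $4,000+ A6000. Once you factor in hardware depreciation, power costs, and the flexibility to scale to dozens of GPUs during crunch periods, the economics work out.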

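The hybrid approach we see at the most successful studios combines a capable local GPU (RTX 4090 or 5090) for day-to-day look-dev and iteration with cloud rendering for final production frames and deadline crunches. You get instant feedback during creative work and burst capacity when you need throughput.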
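To turn the buy-versus-cloud question into numbers for your own situation, a simple break-even model helps. This is a minimal sketch under stated assumptions: straight-line depreciation over a period you choose, power drawn at the card's TDP while rendering, and a flat cloud price per GPU-hour. The example inputs ($0.20/kWh, $1.50 per GPU-hour, 36 months) are hypothetical placeholders, not quotes from any farm. Real farms bill in various ways, and the model ignores the host workstation, cooling, resale value, and upload time.

```python
def monthly_ownership_cost(card_price: float, amortization_months: int, tdp_watts: float,
                           render_hours_per_month: float, usd_per_kwh: float) -> float:
    """Straight-line depreciation plus electricity while rendering at full TDP."""
    depreciation = card_price / amortization_months
    energy = tdp_watts / 1000 * render_hours_per_month * usd_per_kwh
    return depreciation + energy


def monthly_cloud_cost(render_hours_per_month: float, usd_per_gpu_hour: float) -> float:
    """Flat per-GPU-hour pricing (hypothetical; real farms bill in different ways)."""
    return render_hours_per_month * usd_per_gpu_hour


def break_even_hours_per_month(card_price: float, amortization_months: int, tdp_watts: float,
                               usd_per_kwh: float, usd_per_gpu_hour: float) -> float:
    """Monthly render hours above which owning the card is cheaper than renting.

    Solves price/months + h * kW * usd_per_kwh == h * usd_per_gpu_hour for h."""
    energy_per_hour = tdp_watts / 1000 * usd_per_kwh
    margin_per_hour = usd_per_gpu_hour - energy_per_hour
    if margin_per_hour <= 0:
        return float("inf")  # renting costs no more per hour than your electricity
    return (card_price / amortization_months) / margin_per_hour


if __name__ == "__main__":
    # Hypothetical inputs: RTX 5090 at $1,999 and 575 W, 36-month depreciation.
    hours = break_even_hours_per_month(1999, 36, 575, usd_per_kwh=0.20, usd_per_gpu_hour=1.50)
    print(f"Owning is cheaper above ~{hours:.0f} render hours per month")
```

With these placeholder inputs the break-even is about 40 rendering hours per month. A cheaper cloud rate or a shorter useful life for the card pushes that number up, while idle months push the decision toward the cloud regardless.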

Recommendations by Budget and Use Case

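Under $1,000: Learning and Light Production

Pick: RTX 3090 (used, $700–850) or RTX 4070 Ti SUPER ($799)

If VRAM matters more than speed (as it usually does for rendering), go with a used RTX 3090: 24 GB keeps mid-size production scenes clear of memory limits. If you prefer new hardware with a warranty, the 16 GB 4070 Ti SUPER handles product visualization and motion design comfortably.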

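$1,000–$1,800: Serious Production

Pick: RTX 4090 (~$1,599–1,799)

The RTX 4090 remains the single-card recommendation for most professional 3D rendering workflows in 2026. Its 24 GB handles most production scenes, and its compute is within 25–30% of the RTX 5090 for $200–400 less at the prices listed above. Unless you specifically need 32 GB or already own a 4090, this is where the value is.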

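$1,800–$2,500: Maximum Single-Card Performance

Pick: RTX 5090 (~$1,999)

For when 24 GB isn't enough but $4,000+ for an A6000 is hard to justify. 32 GB of GDDR7 handles the dense archviz interiors, moderate vegetation scenes, and VFX shots that overflow 24 GB cards. It's the card we use in our GPU render nodes: the combination of 32 GB of VRAM and current-generation compute covers the widest range of production scenarios.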

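$4,000+: Maximum VRAM

Pick: RTX A6000 (48 GB, ~$4,400)

Choose it only if you regularly work on scenes above 32 GB: heavy VFX with volumetric simulations, dense urban environments with full vegetation, or multi-asset compositions that simply don't fit on consumer hardware. At this price point, consider cloud rendering as an alternative; the capital tied up in an A6000 buys a substantial amount of cloud rendering credit.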

FAQ

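Q: What is the best GPU for 3D rendering in 2026? A: The NVIDIA RTX 5090 (32 GB VRAM) offers the strongest combination of rendering speed and memory capacity for professional 3D work. The RTX 4090 (24 GB) delivers excellent value for most workflows. The choice mostly comes down to whether your scenes exceed 24 GB of VRAM.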

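Q: How much VRAM do I need for GPU rendering? A: 16 GB is enough for product visualization and motion design. For archviz interiors with 4K textures, 24 GB gives comfortable headroom. Archviz exteriors with vegetation, or VFX with simulation data, often need 32–48 GB. Your actual requirement depends on your scene's texture count, polygon density, and displacement complexity.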

Q: Does Redshift work on AMD GPUs? A: No. Redshift requires an NVIDIA GPU (CUDA/OptiX), as do Octane and V-Ray GPU. Among the major render engines, only Blender Cycles supports AMD GPUs, via HIP. If your pipeline uses Redshift, Octane, or V-Ray GPU, you need NVIDIA hardware.

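Q: Is it worth upgrading from an RTX 4090 to an RTX 5090 for rendering? A: The RTX 5090 renders about 30% faster and has 33% more VRAM (32 GB vs 24 GB). If your scenes regularly use 20–24 GB of VRAM and you keep hitting memory limits, the upgrade is justified immediately. If your scenes fit comfortably under 20 GB, the 4090 remains very capable, and a 30% speedup may not justify the price difference. For detailed benchmarks, see our RTX 5090 rendering performance analysis.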

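Q: Can I use multiple GPUs for rendering? A: Yes, most GPU render engines support multi-GPU setups. Redshift scales almost linearly (1.8–1.9x with two GPUs), and Octane and V-Ray GPU also support multiple cards. VRAM is not shared between GPUs, though: each card must hold the scene data on its own. Multiple GPUs improve speed but don't solve VRAM limits.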

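Q: Do I need a workstation GPU (Quadro/RTX A-series) for rendering? A: Not for rendering performance. Consumer RTX cards (4090, 5090) are faster and cheaper than their workstation counterparts in path-tracing workloads. A workstation card (A6000) justifies its premium only when you need more VRAM (48 GB), certified drivers for specific CAD/DCC applications, or ECC memory for simulation workloads. For pure rendering, consumer cards deliver more performance per dollar.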

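Q: When should I use cloud GPU rendering instead of buying a GPU? A: Cloud GPU rendering makes sense when a deadline calls for more GPUs than you own, when your scenes exceed your local GPU's VRAM, when your rendering workload is intermittent rather than continuous, or when, given your usage pattern, the total cost of ownership (hardware + power + depreciation) would exceed the cost of cloud credits. Many studios combine local GPUs for day-to-day iteration with cloud rendering for final production output.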

About Alice Harper

Blender and V-Ray specialist. Passionate about optimizing render workflows, sharing tips, and educating the 3D community to achieve photorealistic results faster.