Is a Single RTX 5090 Worth It for Rendering? A Solo Artist's Cost Breakdown for 2026

Introduction

Every few weeks, a freelancer or a one- or two-person motion design boutique asks us the same question: "I'm thinking about buying an RTX 5090 just for my final renders. Is it worth it, or should I keep using cloud?" The math behind that decision is genuinely different from the math we've published before for studio-scale render farms. A 12-person archviz team with a 10-node CPU farm operates in a different cost regime than a solo Cinema 4D artist deciding whether to drop $3,500 on a single GPU.

We've been operating Super Renders Farm for 15+ years and we've watched a steady stream of indie artists make this purchase decision. Some come back to cloud after six months because the workstation was constantly tied up rendering instead of being available for the actual creative work. Others build a 5090 rig and never look back because their volume genuinely justifies it. What decides which group you fall into isn't price; it's hours rendered per month and the value of your time during those hours.

This article walks through the cost of owning one RTX 5090 workstation against the cost of paying per hour for cloud rendering, specifically at indie scale. It uses current 2026 retail prices, real US electricity rates, and the operational realities we see freelancers run into. The break-even threshold for a single workstation is meaningfully lower than what we published in our studio-scale build vs cloud total cost analysis (about 150 monthly render hours instead of 400+) because solo operators don't carry the same IT overhead. But opportunity cost flips the math hard for anyone billing client work.

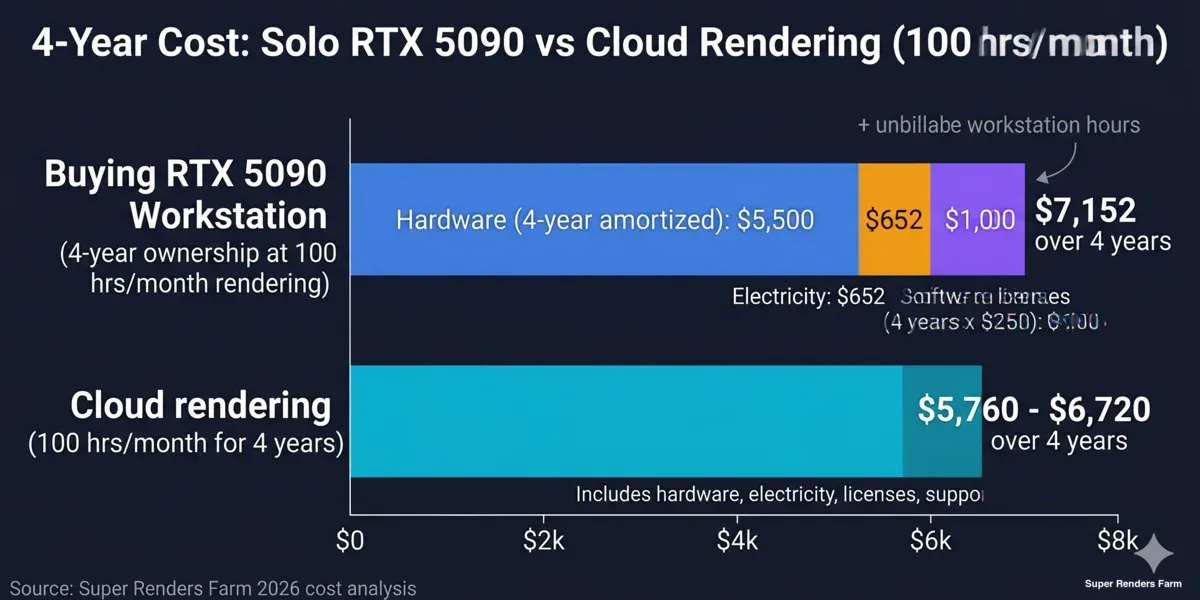

Four-year cost comparison: solo RTX 5090 workstation versus cloud rendering at 100 hours per month

What an RTX 5090 Actually Delivers in 2026

Before running cost numbers, it's worth being precise about what you're buying. The NVIDIA RTX 5090 shipped at a $1,999 MSRP in early 2025, but actual retail in April 2026 sits at $2,500 to $3,800 due to GDDR7 memory shortages and persistent AI-compute demand pulling supply away from consumer channels. Liquid-cooled or premium custom variants run past $5,000.

The card delivers 32 GB of GDDR7 VRAM — the meaningful spec for production GPU rendering. That's enough headroom for most archviz interiors, motion design assets, and product visualization scenes without resorting to out-of-core rendering. For VFX-scale environments or hero CG-character pipelines with full displacement and 8K textures, 32 GB still has limits — see our RTX 5090 VRAM limits for complex scenes breakdown for the workloads where it does and doesn't hold up.

In Redshift, Octane, V-Ray GPU, and Cycles workloads, an RTX 5090 delivers roughly 1.6-2.0× the throughput of an RTX 4090 in our GPU benchmark testing, running the same scenes on both cards. It's a real generational improvement, not a marketing one. The card is also rated at 575W total graphics power for the GPU alone, which matters for a single-workstation build because the rest of the system pushes real-world power draw to 750-900W under sustained render load.

What it doesn't deliver: relief from the workstation-availability problem we describe below. A single 5090 rendering at full tilt is also a single 5090 unavailable for viewport interaction, lookdev, simulation caching, or any of the other GPU-bound tasks a modern 3D pipeline runs alongside rendering.

The Full Cost of Buying a 5090 Workstation

Building a complete RTX 5090 workstation in 2026 means paying for more than the GPU. Here's a realistic component list for a workstation that won't bottleneck the card:

| Component | Cost Range |
|---|---|
| RTX 5090 (32 GB GDDR7) | $2,500-$3,800 |
| CPU (Ryzen 9 9950X or Intel Core Ultra 9) | $550-$700 |
| Motherboard (high-quality X870/Z890) | $300-$500 |
| 64 GB DDR5-6000 ECC RAM | $300-$450 |
| 2 TB NVMe Gen 5 SSD | $200-$300 |
| 1200W 80+ Platinum PSU | $200-$300 |
| Case + airflow + AIO cooling | $250-$400 |
| Total system | $4,300-$6,450 |

That's the day-one capital cost. Some artists already own most of this and just need the GPU — in which case the marginal cost is $2,500-$3,800. Others are upgrading from a gaming machine that won't handle a 575W card cleanly and need a full rebuild including the PSU and cooling pathway, pushing toward the upper bound.

Hardware depreciates the moment it's powered on. We see GPU rendering cards lose roughly 25% of their resale value annually, with practical production utility extending 3-4 years before performance falls meaningfully behind whatever ships next. An RTX 4090 purchased in late 2022 for $1,600 sells used today at around $700-$900 and runs Redshift scenes at roughly half the speed of a current 5090. By 2029, a 5090 will likely be in the same position relative to whatever Blackwell-successor architecture replaces it.

Amortized over 4 years, a $5,500 system costs $1,375 per year in capital alone — before electricity, software, or any of the operational factors below.
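The amortization figure is plain straight-line division. A minimal sketch, assuming the article's system totals and 4-year life; `residual_value` is a hypothetical parameter for expected resale, which the $1,375 figure above does not subtract:

```python
def annual_capital_cost(system_price: float, years: float, residual_value: float = 0.0) -> float:
    """Straight-line amortization: (purchase price - expected resale) / useful life."""
    if years <= 0:
        raise ValueError("years must be positive")
    return (system_price - residual_value) / years


# Low, mid, and high system totals from the component table, over 4 years, no resale.
for price in (4_300, 5_500, 6_450):
    print(f"${price:,} system -> ${annual_capital_cost(price, 4):,.0f}/year")
```

Plugging in a resale estimate lowers the annual figure; the $1,375 number treats the hardware as worth nothing at the end of year four.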

Hidden Costs Solo Artists Consistently Underestimate

The studio-scale build vs cloud article focuses on IT labor, license servers, and version coordination across multiple nodes. None of that applies to a solo workstation. But three other costs do — and they're the ones freelancers usually leave out of their spreadsheets.

Electricity at the wall, not at the GPU

A 5090 under sustained Redshift or Octane load can draw its full 575W rating. The full system (CPU at boost clocks, NVMe at peak, fans at 100%) pulls 750-900W from the wall. At the US average residential electricity rate of roughly $0.17/kWh, 100 monthly render hours at 800W consume 80 kWh, costing about $13.60 per month or $163 per year.

That's modest in isolation. But render hours typically come in bursts before deadlines, often overnight, and the workstation is also drawing baseline power for desktop work the rest of the day. For artists working from a home studio in California, Massachusetts, or Hawaii — where rates run $0.30-$0.45/kWh — render-only annual electricity for 100 monthly hours runs $290-$430 instead. The opportunity cost we describe next swamps this number, but it's still real money.
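The electricity arithmetic in this section is energy (hours × watts ÷ 1,000 = kWh) times your utility rate. A minimal sketch, assuming the 800W average wall draw used above; swap in your own rate:

```python
def render_electricity_cost(hours_per_month: float, wall_watts: float,
                            rate_per_kwh: float) -> tuple[float, float]:
    """Return (monthly, annual) dollars for render hours only, excluding idle/desktop use."""
    kwh_per_month = hours_per_month * wall_watts / 1000.0
    monthly = kwh_per_month * rate_per_kwh
    return monthly, monthly * 12


# US average vs. high-rate coastal states, 100 render hours/month at 800W.
for rate in (0.17, 0.30, 0.45):
    monthly, annual = render_electricity_cost(100, 800, rate)
    print(f"${rate:.2f}/kWh: ${monthly:.2f}/month, ${annual:,.0f}/year")
```

At $0.17/kWh this reproduces the $13.60/month ($163/year) figure, and at $0.30-$0.45/kWh it gives roughly $290-$430/year.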

Software licenses still apply (and the math works against solo)

GPU render engines require paid licenses whether you run ten nodes or one. Annual single-seat licensing in 2026:

- Redshift: $264/year individual, $299/year Teams (3-seat minimum, not solo-friendly)

- Octane: approximately $240/year on the Octane Studio+ tier with the renderer included (pricing varies by tier and seat count)

- V-Ray (GPU): $208/year for the standard single-machine license

- Cycles (Blender): free, but production work usually needs a paid render manager or third-party plugin

A solo artist running one engine pays $208-$264 per year in licensing. An artist running two engines for client variety (we see this often with motion designers using both Redshift and Octane) pays $450-$500. None of this scales away — it's recurring annual cost regardless of how many hours you render.

Cloud rendering on a fully managed platform includes these licenses in the per-hour rate. Studios weigh this against many other line items; for a freelancer doing 50-150 hours per month, the bundled licensing eliminates one fixed annual cost entirely.

Opportunity cost — the one freelancers don't put in their spreadsheet

Here's the cost almost no indie artist accounts for: when your workstation is locked rendering, you can't model, texture, animate, comp, or do client revisions on it. For a solo operator with one machine, render time directly competes with billable work time.

A freelancer billing $50-$100 per hour of client work who locks a workstation for 8 hours of overnight rendering doesn't lose money on those overnight hours — they were going to sleep anyway. But locking the same workstation for 8 daytime hours during a deadline crunch means choosing between revisions and renders. The render gets queued, the client gets a slower turnaround, and the artist either works late or pushes the deadline.

We've watched this play out in real freelancer workflows. The artists who treat their workstation as "render machine first, creative tool second" eventually report the same complaint: their iteration speed dropped, their client communication suffered, and the hardware they bought to be productive became a constraint on productivity. The artists who treat the workstation as "creative tool first, render machine second" tend to send heavier renders to cloud and keep the local hardware for interactive work.

There's no clean dollar value to attach to opportunity cost — it depends on your hourly billing rate and how often render contention actually blocks revisions. But ignoring it entirely is the most common mistake we see in solo build-vs-cloud spreadsheets.
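You can still put a rough bound on it for your own workflow. A minimal sketch, assuming you can estimate what share of your render hours lands on time you would otherwise bill; `contention_share` is a hypothetical input you would have to estimate yourself, not something this article measures:

```python
def monthly_opportunity_cost(render_hours: float, contention_share: float,
                             billing_rate: float) -> float:
    """Upper bound on billable time put at risk by renders on a single workstation.

    contention_share: fraction of render hours that fall during billable time
    (0.0 = everything renders overnight, 1.0 = everything renders in the workday).
    """
    if not 0.0 <= contention_share <= 1.0:
        raise ValueError("contention_share must be between 0 and 1")
    return render_hours * contention_share * billing_rate


# Hypothetical freelancer: 100 render hours/month, billing $50 or $100/hour.
for share in (0.0, 0.1, 0.25):
    low = monthly_opportunity_cost(100, share, 50)
    high = monthly_opportunity_cost(100, share, 100)
    print(f"{share:.0%} daytime contention: ${low:,.0f}-${high:,.0f}/month at risk")
```

Treat the result as an upper bound: in practice some of that time is recovered by working late or pushing a deadline, which carries its own cost.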

What Cloud Rendering Actually Costs at Indie Scale

Cloud rendering pricing models vary widely. On a fully managed cloud render farm — where licenses, software installation, and job management are bundled into the per-hour rate — indie-scale costs are predictable.

Super Renders Farm's published rates are $0.004/GHz-hour for CPU rendering and $0.003/OB-hour (OctaneBench points × hours) for GPU rendering, with volume discounts that drop the effective rate 20-40% for higher-usage tiers. New users start with $25 in free credits that don't expire. Rendering 100 GPU hours per month (typical for a motion designer pushing 4K animation work) lands at roughly $1,440-$1,680 per year after volume tiers, or about $1.20-$1.40 per GPU hour. For details on how per-hour pricing models compare against subscription, per-frame, and credit-based alternatives, see our render farm pricing models comparison and the per-frame breakdown in our cloud rendering cost-per-frame guide.

The cost varies by usage scale:

| Monthly Render Hours | Annual Cloud Cost (after typical volume discount) |
|---|---|
| 25 hours/month | $360-$480 |
| 50 hours/month | $720-$840 |
| 100 hours/month | $1,440-$1,680 |
| 150 hours/month | $2,160-$2,520 |
| 200 hours/month | $2,880-$3,360 |

These figures cover the full cost — render time, render-engine licenses, infrastructure, support. There's no separate license fee, no PSU upgrade, no thermal throttling at 4 AM during a heat wave.
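Each row of the table is monthly hours × 12 × an effective hourly rate. A minimal sketch, assuming the $1.20-$1.40 per GPU hour implied by the 50-200 hour rows (the 25-hour row runs slightly higher, consistent with smaller volumes earning smaller discounts):

```python
def annual_cloud_cost(hours_per_month: float, effective_rate: float) -> float:
    """Annual cost at a flat effective $/GPU-hour with licenses bundled in."""
    return hours_per_month * 12 * effective_rate


LOW_RATE, HIGH_RATE = 1.20, 1.40  # assumed post-discount $/GPU-hour range

for hours in (50, 100, 150, 200):
    low = annual_cloud_cost(hours, LOW_RATE)
    high = annual_cloud_cost(hours, HIGH_RATE)
    print(f"{hours:>3} h/month: ${low:,.0f}-${high:,.0f}/year")
```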

Cloud rendering at indie scale also avoids the IaaS trap. Infrastructure providers like Runpod or Vast.ai advertise lower hourly rates, but the artist absorbs all the operational overhead: installing render engines, managing licenses, troubleshooting failed jobs, configuring shared storage. We dig into this distinction in our managed vs DIY cloud rendering comparison; the short version is that "$1.50/hour IaaS plus $400/year licensing plus 5 hours/month of setup" usually exceeds the cost of a fully managed rate that bundles everything.
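How far IaaS lands above a managed rate depends on what your own setup time is worth. A minimal sketch using the quoted figures ($1.50/hour, $400/year licensing, 5 setup hours/month); `value_of_time` is an assumed input, defaulting here to the low end of the $50-$100 billing range discussed in the opportunity-cost section, and the managed rate assumes the low end of the cloud cost table:

```python
def annual_iaas_cost(hours_per_month: float, hourly_rate: float = 1.50,
                     licenses_per_year: float = 400.0,
                     setup_hours_per_month: float = 5.0,
                     value_of_time: float = 50.0) -> float:
    """Raw compute + separately bought licenses + your own setup time, per year."""
    compute = hours_per_month * 12 * hourly_rate
    setup = setup_hours_per_month * 12 * value_of_time
    return compute + licenses_per_year + setup


def annual_managed_cost(hours_per_month: float, effective_rate: float = 1.20) -> float:
    """Managed farm: licenses and setup are bundled into the hourly rate."""
    return hours_per_month * 12 * effective_rate


for hours in (50, 100):
    print(f"{hours} h/month: IaaS ${annual_iaas_cost(hours):,.0f} "
          f"(${annual_iaas_cost(hours, value_of_time=0):,.0f} if your time is free) "
          f"vs managed ${annual_managed_cost(hours):,.0f}")
```

With these inputs the IaaS route costs more even if you value your own time at zero; pricing setup hours at a billing rate only widens the gap.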

Break-Even Math for One Workstation

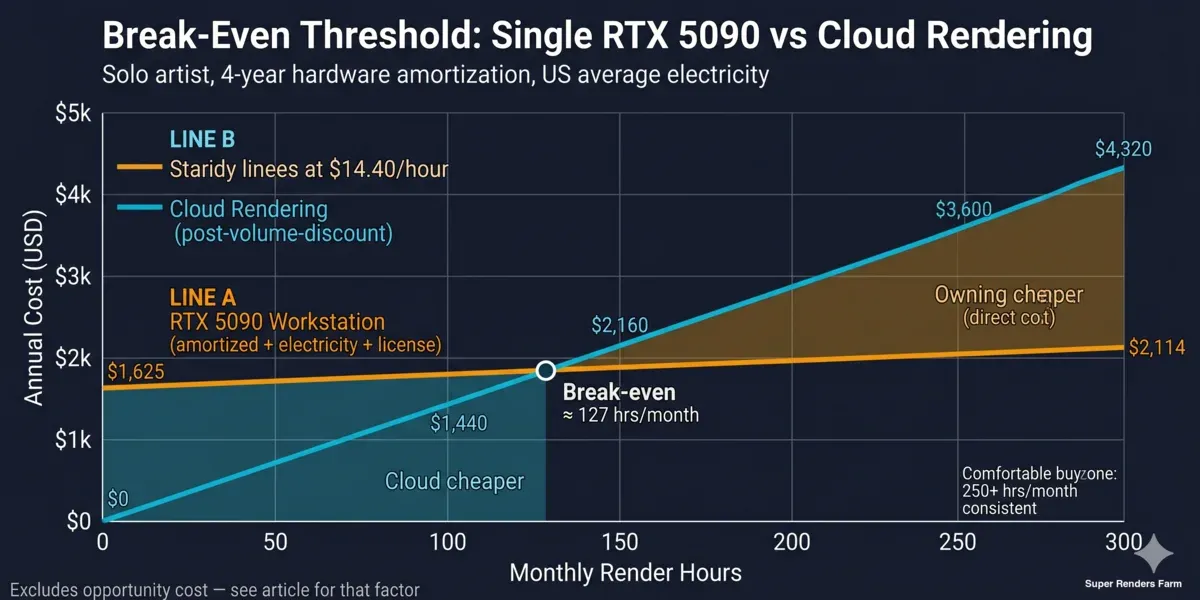

For studio-scale farms with 10+ nodes, the break-even point against cloud rendering sits around 400 consistent monthly render hours. For a single workstation without dedicated IT staff, the math is much more favorable to ownership — somewhere around 150 hours per month, depending on how you account for opportunity cost.

Here's the calculation, ignoring opportunity cost (i.e., assuming the workstation renders only during hours when you weren't using it for anything else):

- Annual cloud cost at H hours/month = $14.40 × H ($1.20 per hour × 12 months, the low end of the post-volume-discount range)

- Annual workstation cost at H hours/month = $1,375 (amortized hardware) + $1.63 × H (electricity: 800W × $0.17/kWh × 12 months) + $250 (single-engine license) = $1,625 + $1.63H

Setting these equal: $1,625 + $1.63H = $14.40H, which gives H ≈ 127 hours/month. Round up to ~150 hours/month for a comfortable buffer that accounts for higher electricity rates in some US regions and PSU/cooling refresh costs over the 4-year amortization period.
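The break-even point comes from setting the two linear costs equal and solving for H: H = fixed annual cost ÷ (cloud cost per monthly hour − electricity per monthly hour). A minimal sketch with the same inputs; both per-hour coefficients are annual dollars per hour of monthly rendering, and the 0.8 kW wall draw is the article's assumption:

```python
AMORTIZED_HARDWARE = 1_375.0  # $5,500 over 4 years
ENGINE_LICENSE = 250.0        # single render engine, per year


def annual_elec_per_hour(rate_per_kwh: float, wall_kw: float = 0.8) -> float:
    """Annual electricity dollars per monthly render hour (kW x $/kWh x 12 months)."""
    return wall_kw * rate_per_kwh * 12


def break_even_hours(fixed_per_year: float = AMORTIZED_HARDWARE + ENGINE_LICENSE,
                     elec_per_hour: float = 1.63,
                     cloud_per_hour: float = 14.40) -> float:
    """Solve fixed + elec_per_hour * H == cloud_per_hour * H for H (hours/month)."""
    margin = cloud_per_hour - elec_per_hour
    if margin <= 0:
        raise ValueError("cloud must cost more per hour than electricity to ever break even")
    return fixed_per_year / margin


print(f"US average ($0.17/kWh): {break_even_hours():.0f} h/month")
print(f"Coastal ($0.45/kWh):    {break_even_hours(elec_per_hour=annual_elec_per_hour(0.45)):.0f} h/month")
```

At the US-average rate this gives about 127 hours/month; at $0.45/kWh it moves to roughly 161, the kind of regional spread the ~150-hour rounding is meant to absorb.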

Below 150 hours/month, cloud is cheaper on direct cost alone. Above 250 hours/month consistently, owning a 5090 starts paying off even before you account for the productivity benefit of having a fast local GPU for viewport work. Between 150 and 250 hours/month is the gray zone where opportunity cost decides — if your render hours regularly fall during billable working hours, cloud usually wins; if they're consistently overnight or weekend batch jobs while you sleep, the workstation makes sense.

Break-even threshold chart for single RTX 5090 workstation versus cloud rendering by monthly render hours

The studio break-even at 400 hours/month is higher because studios carry IT labor cost ($13,000-$26,000/year) that solo operators simply don't. A freelancer is their own sysadmin, and the time spent troubleshooting a driver update is real but not billable to a separate cost line. That's the structural difference between solo and studio TCO math.

The Hybrid Model: Local for Interactive, Cloud for Final

The most common pattern we see among productive indie artists isn't "all cloud" or "all local" — it's a hybrid that uses each tool for what it's actually good at.

Local 5090 (or any capable GPU) for:

- Real-time viewport navigation and lookdev

- Lighting iteration with progressive renderers

- Test renders at draft resolution and sample counts

- Simulation caching (fluids, particles, cloth)

- Compositing, color grading, motion graphics in After Effects or Nuke

- Game engines, real-time tools (Unreal, Unity), texturing in Substance

Cloud rendering for:

- Production-resolution final frames

- Animation sequences (workstation can't be locked for 12+ hours)

- High-sample-count quality renders for delivery

- Deadline crunches when local hardware would otherwise bottleneck revisions

- Heavy archviz interiors with full ray-traced GI and 4K-8K resolution

- Multi-camera or multi-pass renders

This split keeps the workstation's fast GPU available for the interactive work where latency matters, and pushes the long-tail rendering (work where wall-clock time matters, not interaction speed) to infrastructure that doesn't compete with creative tools for resources. It's also resilient: a hardware failure on the workstation doesn't kill production deadlines, because the cloud farm keeps rendering. We've seen freelancers swap a dead PSU on a Friday afternoon while their final delivery rendered on our farm overnight, without missing the Monday client meeting.

The economics of a hybrid setup are genuinely friendly to solo artists — the workstation gets used for the high-value interactive hours and the cloud absorbs the unpredictable peak-volume hours. Neither tool sits idle, and neither carries the entire load.

When Buying a 5090 Still Makes Sense for Indies

The cost analysis points toward cloud rendering for most freelancers under 150 hours per month — but cost isn't the only factor. There are real scenarios where buying a 5090 still makes sense for solo operators:

- Real-time and viewport-bound workflows. Unreal Engine, Unity, Substance Painter, ZBrush, Nuke — these tools benefit from a local high-end GPU regardless of whether you ever batch-render with it. If your daily creative work is GPU-intensive interactive software, a 5090 is a workstation upgrade, not a render farm in disguise.

- Predictable high-volume rendering. If you render 250+ hours per month every month — common for animators producing weekly content or motion designers with steady client volume — the workstation pencils out within 3 years even before opportunity cost adjustments.

- Latency-sensitive iteration. Lookdev passes, lighting iterations, and shader debugging benefit from sub-second feedback that network round-trips to a cloud farm can't match. If your workflow is dominated by these passes, local hardware wins.

- Gaming or content creation dual-use. A 5090 is the same card whether it's running V-Ray GPU at 2 AM or Cyberpunk 2077 at 7 PM. If half the purchase justification is non-rendering work, the cost calculation changes — you're not buying a render machine, you're buying a workstation that also renders.

- Air-gap or NDA-heavy work. Some clients require all render data stay on-premises. Cloud is structurally a non-starter for these projects, regardless of cost. We've seen this in defense visualization, certain studio projects, and proprietary product launches under embargo.

If three or more of these factors apply to your work, owning a 5090 is the right answer. If only one or two apply, the hybrid model described above is usually a better fit.

Decision Framework for Solo Artists

| Factor | 5090 Purchase Favored | Cloud Rendering Favored |
|---|---|---|
| Monthly render hours | 250+ consistently | Under 150 or variable |
| Workflow type | GPU-intensive interactive (lookdev, real-time) | Final-frame batch rendering |
| Render timing | Overnight/weekend (no contention) | Daytime working hours (contention) |
| Client billing rate | Lower hourly rate (opportunity cost minor) | Higher rate (opportunity cost dominant) |
| Hardware budget | $4,500+ available as capex | Prefer per-project opex |
| Software stack | Single render engine | Multiple engines (license stacking) |
| Electricity rates | Low to US-average ($0.10-$0.17/kWh) | High coastal rates ($0.30+/kWh) |
| Existing setup | Already own most of the system | Starting from a laptop or older desktop |
| Job continuity risk | Acceptable to delay during hardware failures | Deadline-driven, no acceptable downtime |

If most of your rows land in the "Cloud Rendering Favored" column, start with cloud and see whether your render hours grow into the buy zone over 6-12 months. If most land in the "5090 Purchase Favored" column, buying makes sense.
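If you want to make that tally explicit, here is a minimal sketch: mark each row of the table for one column and count. The row keys and the tie-break message are illustrative assumptions; the article itself only says to follow whichever column holds most of your rows.

```python
from enum import Enum


class Favors(Enum):
    BUY = "5090 purchase favored"
    CLOUD = "cloud rendering favored"


def tally(answers: dict[str, Favors]) -> str:
    """Count which column most decision-framework rows land in."""
    buy = sum(1 for choice in answers.values() if choice is Favors.BUY)
    cloud = len(answers) - buy
    if cloud > buy:
        return "start with cloud; revisit in 6-12 months"
    if buy > cloud:
        return "buying a 5090 makes sense"
    return "split decision; consider the hybrid model"  # assumed tie-break


# Hypothetical freelancer: interactive-heavy, one engine, cheap power, daytime renders.
example = {
    "monthly_render_hours": Favors.CLOUD,
    "workflow_type": Favors.BUY,
    "render_timing": Favors.CLOUD,
    "client_billing_rate": Favors.CLOUD,
    "hardware_budget": Favors.CLOUD,
    "software_stack": Favors.BUY,
    "electricity_rates": Favors.BUY,
    "existing_setup": Favors.CLOUD,
    "job_continuity_risk": Favors.CLOUD,
}
print(tally(example))
```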

FAQ

Q: Is buying a single RTX 5090 worth it just for 3D rendering? A: For solo artists rendering under 150 hours per month, the cost math favors cloud rendering on direct expenses alone, and the gap widens once you factor in the opportunity cost of a workstation that's tied up rendering during billable hours. For artists rendering 250+ consistent hours per month, the 5090 pays back within 3-4 years on rendering alone; for artists whose daily workflow is GPU-intensive interactive work, the card also earns its keep as a workstation.

Q: Can I use my 5090 for both gaming and rendering without compromising either? A: Yes — a 5090 is the same hardware regardless of workload, and modern drivers handle the context switch between gaming and production renderers cleanly. The practical caveat is that a long batch render and a gaming session can't run simultaneously; a 5090 fully loaded for a Redshift queue at 575W is unavailable for anything else. If gaming and rendering happen at different times of day, dual-use works well; if they need to overlap, you need either two GPUs or cloud rendering for the production work.

Q: How long until a 5090 pays for itself for a freelancer? A: At 100 monthly render hours, owning a 5090 costs roughly $1,790 per year ($1,375 amortized hardware, about $163 of electricity, and a $250 single-engine license) versus $1,440-$1,680 per year for equivalent cloud rendering. At that volume cloud is still slightly cheaper on direct cost, and the hardware doesn't pay for itself within its 4-year useful life. Below 100 hours per month, the 5090 never pays back on rendering alone; above 200 hours per month, payback compresses to 2-3 years. Adding the workstation's value for non-rendering interactive work shortens payback regardless of render volume.
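Payback time is the up-front hardware cost divided by what owning saves each year compared with cloud. A minimal sketch, assuming the $5,500 system, a $250 license, US-average electricity ($1.63 per monthly hour per year), the low-end cloud rate of $14.40 per monthly hour per year, and no resale value:

```python
import math


def payback_years(hours_per_month: float, hardware: float = 5_500.0,
                  license_per_year: float = 250.0, elec_per_hour: float = 1.63,
                  cloud_per_hour: float = 14.40) -> float:
    """Years for cumulative savings versus cloud to cover the hardware purchase."""
    cloud_annual = cloud_per_hour * hours_per_month
    running_annual = license_per_year + elec_per_hour * hours_per_month
    savings = cloud_annual - running_annual
    if savings <= 0:
        return math.inf  # owning never catches up at this volume
    return hardware / savings


for hours in (100, 150, 200, 250):
    print(f"{hours} h/month: {payback_years(hours):.1f} years")
```

At 100 hours/month the result (about 5.4 years) exceeds the 4-year useful life; at 200 hours/month it drops to about 2.4 years.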

Q: What about buying a used RTX 4090 instead of a new 5090? A: A used RTX 4090 in good condition runs $700-$1,100 in 2026 and delivers roughly 50-65% of the 5090's GPU rendering throughput. For artists already using cloud for production renders and just wanting a strong interactive GPU, a used 4090 is often a better dollar-per-frame purchase than a new 5090 — particularly because the 24 GB VRAM still handles most archviz and motion design scenes without out-of-core penalties. We see plenty of working freelancers running 4090s as primary workstation GPUs and using cloud for finals.

Q: Will my electricity bill spike noticeably if I run a 5090 for rendering at home? A: For 100 monthly render hours at the US residential average of $0.17/kWh, expect about $13-$15 per month in marginal electricity cost, roughly the price of one streaming subscription. In high-rate regions (Hawaii, California, Massachusetts) at $0.30-$0.45/kWh, the same usage runs about $24-$36 per month. The bigger concern in a home studio isn't the bill; it's heat dissipation. Nearly all of the 750-900W a 5090 workstation pulls from the wall under sustained render load ends up as heat in the room, which will raise summertime AC load noticeably in a small office.

Q: Do I need a professional card like the RTX 6000 Ada instead of a consumer 5090? A: For nearly all archviz, motion design, VFX shot work, and product visualization, no. Consumer GeForce cards run all major production renderers (Redshift, Octane, V-Ray GPU, Cycles, Arnold GPU) without restriction, and the 5090's 32 GB of VRAM matches the previous-generation RTX 5000 Ada and reaches two-thirds of the RTX 6000 Ada's 48 GB, at a substantially lower price than either. Professional cards become relevant for ECC memory requirements, certified driver compatibility on specific CAD/CAE software, or VRAM requirements above 32 GB for hero CG production. For 95% of indie 3D rendering, a consumer 5090 is the right purchase.

Q: How does cloud rendering compare to my single 5090 for Redshift or Octane specifically? A: Cloud rendering on a fully managed farm with 5090 GPUs (the same generation as your local card) delivers near-identical per-frame results — same engine, same hardware tier, same drivers. The difference is throughput: a single workstation renders one frame at a time, while cloud rendering parallelizes across many GPUs to compress wall-clock time. For interactive lookdev passes, your local 5090 wins on iteration speed; for an animation final at 1,440 frames at 2 minutes each (48 hours of single-GPU time), cloud finishes the same job in 4-6 hours by spreading frames across 8-12 GPUs simultaneously. The right tool depends on whether you're optimizing for interactivity or for total time to delivery.
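The wall-clock numbers above assume each GPU renders one frame at a time and frames split evenly across GPUs. A minimal sketch that ignores upload, scene-load, and queue overhead, all of which add real time on any farm:

```python
import math


def wall_clock_hours(frames: int, minutes_per_frame: float, gpus: int) -> float:
    """Hours to finish a sequence when frames are spread evenly across GPUs."""
    if gpus < 1:
        raise ValueError("need at least one GPU")
    batches = math.ceil(frames / gpus)  # rounds of frames rendered in parallel
    return batches * minutes_per_frame / 60


for gpus in (1, 8, 12):
    print(f"{gpus:>2} GPU(s): {wall_clock_hours(1_440, 2, gpus):.1f} hours")
```

For the 1,440-frame example this gives 48 hours on one GPU and 6 or 4 hours on 8 or 12 GPUs, matching the 4-6 hour range before overhead.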

Q: What's the upgrade cycle, and will a 5090 still be worth running in 2028? A: An RTX 5090 purchased in 2026 will likely run production GPU workloads competently through 2029-2030, but its relative throughput will erode as next-generation cards arrive. Based on the historical pattern (a 4090 bought in late 2022 runs at roughly half the throughput of a 5090 in 2026), a 5090 in late 2028 will probably deliver 50-65% of whatever the next-gen flagship offers in equivalent workloads. That's not obsolete, since plenty of production work still happens on RTX 4090s in 2026, but if your business model depends on having current-generation rendering speed, expect a refresh cycle around year 3-4. Cloud rendering sidesteps this entirely; the farm refreshes hardware on its own schedule and you always render on whatever the current generation is.

About Thierry Marc

3D Rendering Expert with over 10 years of experience in the industry. Specialized in Maya, Arnold, and high-end technical workflows for film and advertising.