VFX Industry Trends 2026: Real-Time, AI, Gaussian Splatting, OpenUSD, and Cloud Economics

Introduction

VFX in 2026 looks structurally different from VFX in 2022. Five shifts are reshaping rendering economics simultaneously: real-time engines crossing the final-pixel threshold, AI moving from denoising into the interior of the pipeline, Gaussian Splatting entering production tools as a first-class asset type, OpenUSD maturing into the industry's interchange standard, and cloud rendering becoming the default — not the overflow — for studios outside the top tier.

Over the past few years we've watched these trends arrive piecemeal at Super Renders Farm. They are no longer experimental; they show up in shipped feature work, in published tool releases, and in our own job mix. This piece is an industry-trends survey aimed at studio decision-makers, pipeline TDs, and artists planning where to invest learning time and budget — not a vendor comparison, not a buying guide.

VFX industry trends 2026 — convergence of real-time engines, AI denoising, Gaussian Splatting, OpenUSD, and cloud rendering

The 2026 Industry Snapshot

Market sizing for VFX in 2026 varies widely by scope. Mordor Intelligence values the combined animation and VFX market at roughly USD 220.69 billion in 2026 with an 11.86% CAGR through 2031, but that figure absorbs animation features, animated TV, motion design, and visualization. Pure-VFX estimates are smaller and more contested: Research Nester sizes the visual effects industry at USD 27.59 billion in 2026; narrower-scoped reports come in around USD 13.23 billion. The methodology delta matters because the bigger headline numbers absorb adjacent markets that don't share VFX-specific cost structures.

A more telling figure for studios is the rendering layer specifically. Industry analysts project the 3D rendering market growing from roughly USD 4.30 billion in 2025 to USD 13.92 billion by 2031 (a ~21.6% CAGR), with GPU rendering's share rising from about 55.8% in 2024 to ~60% by 2026. The mix shift is the clearest signal in the data: the rendering layer is growing faster than the VFX market that contains it, and inside that layer the GPU share is the part compounding.
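The growth rate quoted above can be checked directly from the two endpoint figures. The snippet below is a minimal sketch of the standard compound-annual-growth-rate formula; the function name `cagr` is ours, and the only inputs are the USD 4.30 billion (2025) and USD 13.92 billion (2031) values cited in the paragraph.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.20 means 20% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted above: USD 4.30B in 2025 growing to USD 13.92B in 2031,
# i.e. six compounding years.
rendering_cagr = cagr(4.30, 13.92, 2031 - 2025)
print(f"3D rendering market CAGR: {rendering_cagr:.1%}")
```

The result agrees with the ~21.6% figure, which is roughly double the 11.86% CAGR quoted for the combined animation and VFX market.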

Beyond market size, the second variable is post-strike recovery. The 2023 WGA and SAG-AFTRA strikes reduced upstream production volume, and that shortfall flowed downstream into VFX vendor pipelines through most of 2024 and parts of 2025. VFX Voice (the journal of the Visual Effects Society) characterizes entry to 2026 as balancing uncertainty and opportunity: pipelines are filling again, but freelance employment remains uneven. The third variable is mid-tier consolidation: several facilities pivoted into virtual production services or immersive divisions during the trough, changing buyer composition on the render-farm side.

Real-Time Rendering Crosses the Final-Pixel Line

The headline real-time story in 2026 is that the wall between "real-time for previs" and "offline for final pixel" has come down. VFX Voice and outlets covering NAB Show 2025 characterize near-real-time rendering as one of the most consequential technological shifts in the industry, alongside AI-assisted image generation, because it changes which work has to be queued and which work can be reviewed live.

Unreal Engine 5, with Nanite virtualized geometry and Lumen global illumination, is the visible end of that shift. The technique isn't new: ILM's StageCraft used Unreal Engine to render LED-wall environments for The Mandalorian starting in 2019, letting the production shoot diverse locations on a single soundstage. In 2026, though, the same approach is reaching tier-two productions and broadcast work, not just top-of-budget streaming series. NAB Show 2025 coverage (ProductionHUB, VP-Land, Nitro Media Group) put virtual production at the center of the floor for the third consecutive year, with Sony introducing the Ocellus marker-free camera tracking system and Crystal LED off-axis color-compensation tooling, both of which materially reduce the cost of operating an LED volume.

The economic implication is not that offline rendering disappears — it is that the volume of work that qualifies for real-time has expanded. Background plates for in-camera VFX (ICVFX) on LED stages are real-time work. Previs and postvis are real-time work. Increasingly, full hero shots in mid-budget commercials and broadcast pieces are also being delivered out of Unreal Engine 5's Movie Render Queue.

What stays offline in 2026 is photorealistic film-finals work where path-traced light transport, deep-EXR multi-layer output, and per-shot artist iteration cycles remain dominant. V-Ray, Arnold, Redshift, and Karma all continue to evolve on the offline side: V-Ray 7 added ray-traceable Gaussian Splat support (see the Gaussian Splatting section below), Karma XPU continues to mature, and Redshift 2026 releases have focused on volumetrics and shader complexity. These engines and the real-time stack are no longer in zero-sum competition; they are layering onto different stages of the pipeline.

On the farm side, real-time growth has not visibly reduced offline render volumes; it has changed their composition. Final-pixel offline jobs skew toward higher-complexity scenes (volumetrics, deep splat-traced compositing, ray-traced hair and cloth) while simpler shots that once justified offline render time are migrating to real-time. The offline render farm in 2026 is a complexity engine, not a volume engine. For infrastructure planning, that means per-frame cost matters more than raw frames-per-hour throughput, a point covered in our cost-per-frame guide.

AI Moves Inside the Pipeline

AI in VFX in 2024 was mostly a debate about generative tools at the script and concept stages. AI in VFX in 2026 is a pipeline-interior reality across several categories, each at a different maturity level.

AI denoising is table stakes. NVIDIA OptiX AI Denoiser and Intel Open Image Denoise (OIDN) are integrated across every mainstream production renderer — V-Ray, Arnold, Redshift, Cycles, Karma. Intel OIDN received a Technical Achievement Award from the Academy of Motion Picture Arts and Sciences in 2025, a useful market signal: the technology has reached production scale that meets Academy criteria. Industry reporting and aggregate render-farm benchmarks indicate that scenes that historically required 2,000–4,000 samples for clean output can reach comparable quality at 200–500 samples with AI denoising. On our farm, average render-time reduction on jobs leveraging AI denoising versus equivalent 2024 jobs that converged purely via sample count is in the 40–60% range, depending on engine and scene type. Variance is mostly driven by heavy volumetrics or aggressive depth-of-field, where AI denoisers still leave residual artefacts that require sample-count backup.

Generative AI in previs and concept is the second category. Stable Diffusion XL and Midjourney are now part of pre-production lookdev cycles at multiple studios, used for rapid mood-board iteration before full 3D sculpting. RunwayML and similar tools handle fast client comps. The pipeline integration is still ad-hoc — most studios run generative AI as a parallel track rather than a baked-in stage — but the workflow has stabilized enough that we see it referenced in client briefs we receive.

Image-to-3D and NeRF/Gaussian Splatting reconstruction is the third category. It has graduated from research curiosity to production tooling and is covered in detail in the next section.

AI for rotoscoping, matchmove cleanup, and simulation previews is the most quietly transformative category. These middle-of-pipeline tasks historically consumed disproportionate junior-artist time, and tools that accelerate them shift studio economics because they free hours, not minutes.

What AI in 2026 has not done is replace artist judgment at the lookdev, composition, or directorial review stages. The pattern across studios that adopted these tools earliest is that team sizes have stayed constant or grown slightly while project throughput per artist has gone up. Studios that adopted cloud rendering alongside AI-assisted tools in 2025–2026 reported completing more projects in the same timeframe without reducing headcount.

Gaussian Splatting Enters Production VFX

Gaussian Splatting — and its temporal extension, 4D Gaussian Splatting — moved from research-paper novelty to shipped production tooling faster than most pipeline supervisors expected. The technique encodes a 3D scene as millions of small anisotropic Gaussians rather than polygonal geometry plus textures; the result is photorealistic visual quality at real-time rendering speeds, with scenes routinely rendering at 100+ FPS inside Unreal Engine.

By early 2026, splats have first-class support across the production stack: Nuke 17 ships with native Gaussian Splat support, Houdini 21 includes a technical preview, OpenUSD 26.03 added a first-class schema, and V-Ray 7 can now ray-trace splat data alongside traditional geometry. The compositing and renderer integrations matter because they move splats out of "Unreal-only" virtual-production islands into the broader VFX pipeline.

The production reference point is Framestore's work on Superman (2025), where the studio used 4D Gaussian Splatting to deliver approximately 40 final-pixel shots. That's the first widely reported case where 4D splats handled shots that previously required a combination of traditional photogrammetry, plate work, and CG reconstruction. Other studios have since followed publicly on episodic and ICVFX work.

The market backdrop is supportive. Industry estimates place the global virtual production market at approximately USD 2.9 billion in 2025 with projections toward USD 18.5 billion by 2035, and Gaussian Splatting is widely characterized as a preferred method for creating the photorealistic environments that power LED volume stages. DJI Terra V5.0+ Flagship processes Gaussian Splatting reconstructions at roughly 500 images per hour on workstation hardware; film-quality sets typically require 300–1,000+ images from a DSLR or drone. On-set capture-to-asset time has compressed from days of manual scanning to under an hour for many environments.
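To see what the quoted throughput implies for turnaround, the sketch below converts image counts into processing time. It assumes the roughly 500 images per hour figure holds constant regardless of set size, and it covers reconstruction only: capture, transfer, and cleanup time are not modelled. The function name `reconstruction_hours` is illustrative.

```python
def reconstruction_hours(image_count: int, images_per_hour: float = 500.0) -> float:
    """Processing time in hours, ASSUMING a constant images-per-hour throughput."""
    if images_per_hour <= 0:
        raise ValueError("images_per_hour must be positive")
    return image_count / images_per_hour


# Image counts spanning the 300 to 1,000+ range quoted for film-quality sets.
for images in (300, 500, 1_000):
    hours = reconstruction_hours(images)
    print(f"{images:>5} images -> {hours * 60:.0f} min of processing")
```

Under these assumptions a 300-image set processes in about 36 minutes, while a 1,000-image set takes about two hours, which is consistent with "under an hour" applying to many environments rather than all of them.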

For render farms, splats introduce a different cost-per-frame profile. Polygonal scenes scale predictably with sample count and shader complexity; splat-traced scenes have a memory-bandwidth profile closer to volumetric rendering — VRAM matters more, and engines like V-Ray 7 are still rapidly evolving their splat-tracing paths. We treat it operationally as a job class that needs separate benchmarking, not as "just another geometry type."
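A rough way to see why VRAM becomes the constraint is to count the raw parameters a splat scene carries. The sketch below assumes the uncompressed layout from the original 3D Gaussian Splatting formulation (position, scale, rotation quaternion, opacity, and spherical-harmonic colour coefficients, all stored as 32-bit floats). That layout is an assumption for illustration: production formats, including whatever V-Ray 7 or Unreal Engine use internally, may compress or reorganize this data, and ray-tracing acceleration structures add memory on top.

```python
def floats_per_gaussian(sh_degree: int = 3) -> int:
    """Parameter count for one Gaussian in the assumed uncompressed layout."""
    position, scale, rotation_quaternion, opacity = 3, 3, 4, 1
    # (degree + 1)^2 spherical-harmonic coefficients for each of 3 colour channels.
    sh_colour = 3 * (sh_degree + 1) ** 2
    return position + scale + rotation_quaternion + opacity + sh_colour


def splat_bytes(num_gaussians: int, sh_degree: int = 3, bytes_per_float: int = 4) -> int:
    """Raw parameter storage only; excludes acceleration structures and buffers."""
    return num_gaussians * floats_per_gaussian(sh_degree) * bytes_per_float


# Hypothetical scene sizes, chosen only to show how the footprint scales.
for count in (1_000_000, 5_000_000, 20_000_000):
    gib = splat_bytes(count) / 2**30
    print(f"{count:>12,} Gaussians ~ {gib:.2f} GiB of raw parameters")
```

The footprint grows linearly with Gaussian count, so a dense environment can consume a meaningful share of a 32 GB card before any other scene data is loaded. That is one reason to benchmark splat jobs separately.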

Gaussian Splatting pipeline — drone or DSLR capture → reconstruction → Unreal Engine real-time playback + V-Ray 7 ray-traced compositing

OpenUSD as the Industry Interchange Standard

Pixar's Universal Scene Description (USD), open-sourced in 2016, has been the slow-burn standard candidate for nearly a decade. In 2026, "candidate" is no longer the right word. The Alliance for OpenUSD (AOUSD), formed by Pixar with Adobe, Apple, Autodesk, and NVIDIA in 2023, set late 2025 as its target for formalizing OpenUSD's foundational specifications, and SIGGRAPH 2024 demonstrated OpenUSD's expansion beyond traditional media and entertainment into robotics, autonomous-vehicle simulation, and industrial visualization.

The technical update that matters most to render-farm operators is the addition of Vulkan support to OpenUSD's Hydra Storm renderer (announced in OpenUSD 24.08, contributed by Pixar, Autodesk, and Adobe under the AOUSD umbrella per Khronos Group reporting). HgiVulkan brings the Hydra interface to feature parity with the existing OpenGL and Metal backends and extends USD/Hydra to Android mobile devices, opening a broader platform target without sacrificing the existing Mac/Linux/Windows footprint.

What this means practically:

- Asset interchange between DCCs (Maya, Houdini, Cinema 4D, Blender, 3ds Max) is materially less fragile than when relying on FBX or per-DCC export. We see fewer "broken transform" support tickets year-over-year as studios consolidate on USD as the round-trip format.

- Cross-renderer interoperability is improving — Hydra render delegates from V-Ray, Karma, Arnold, Cycles, and others are increasingly viable for both interactive lookdev and batch rendering, though parity with each renderer's native scene description varies engine-by-engine.

- Pipeline tooling investment is shifting from per-DCC importers/exporters to USD-native asset-publishing systems. Studios that built USD infrastructure in 2023–2024 are harvesting operational cost savings; studios that haven't are treating it as a 2026–2027 capex priority.

OpenUSD does not solve every interchange problem in 2026 — texture and material translation across Hydra delegates remains imperfect, and per-renderer extensions create vendor-lock risks that USD's openness was supposed to eliminate. The trend is unambiguous, though: USD is the asset-format consensus the industry didn't quite have ten years ago.

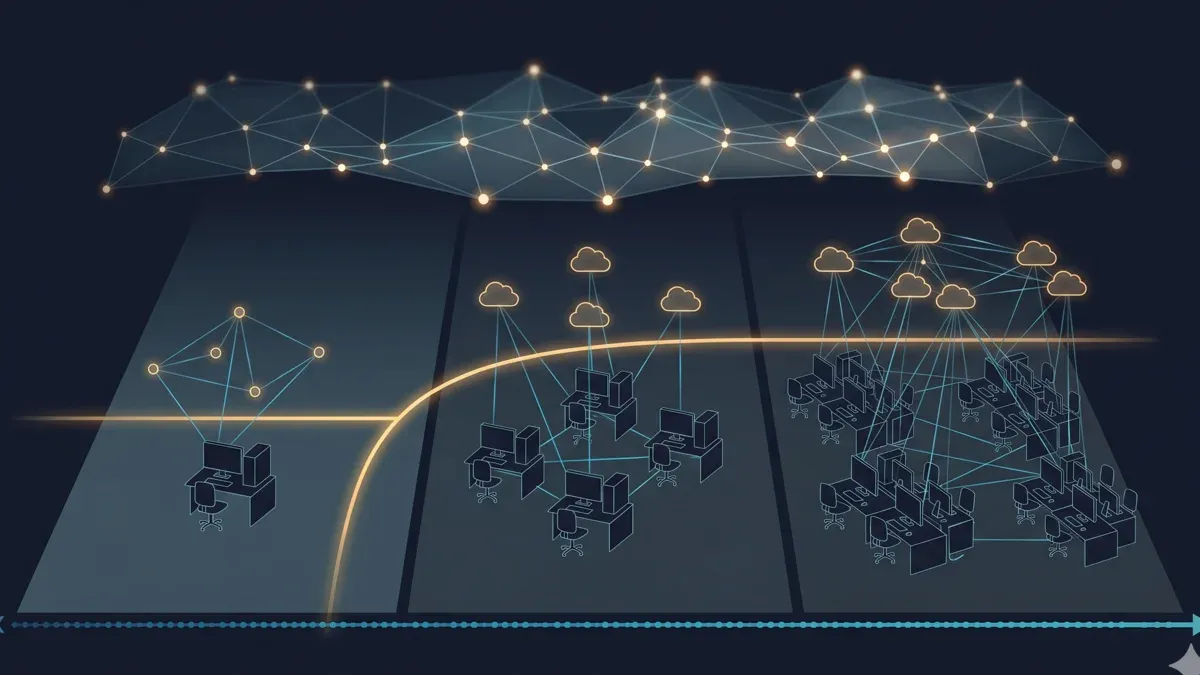

Cloud Rendering Economics for Indie and Mid-Tier Studios

Another quietly transformative shift in 2026 is on the cost side. Cloud rendering in 2024 was framed primarily as overflow capacity for studios with their own render walls. In 2026, it's the default infrastructure for indie teams and a growing share of mid-tier studios, not as a backup plan but as the core of their pipeline.

Three forces are driving this:

GPU price volatility on the hardware side. The NVIDIA RTX 5090 launched at USD 1,999 MSRP, but actual retail prices in 2026 have ranged USD 2,500–3,800 due to GDDR7 memory shortages and AI-compute demand displacing consumer supply. For a small studio building an eight-GPU render node, that delta multiplied across cards plus motherboard, PSU, cooling, and rack has destabilized the capex case for self-hosted GPU compute.

Cloud GPU price compression on the opex side. Cloud GPU pricing has fallen faster than retail card prices, particularly on second-tier providers. Industry coverage cites Thunder Compute at roughly USD 0.66 per A100 GPU-hour versus USD 4.10 on tier-one clouds. That spread is wider than 18 months ago, narrowing the access gap between large and small studios on real-time iteration and final-frame throughput.

AI denoising compressing required compute volume. This is the most under-appreciated factor. If an archviz job that needed 4,000 samples in 2023 now needs 500 with AI denoising, and render time scales roughly linearly with sample count, the cloud bill for that job is roughly 8× smaller for the same quality, which changes the cloud-vs-on-prem breakeven point materially.
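The arithmetic behind the three forces can be laid out in a few lines. The sketch below uses only the figures quoted above; the function names are ours. It makes two simplifying assumptions: the hardware premium counts the cards alone (no motherboard, PSU, cooling, or rack), and the render bill is linear in sample count with no fixed per-job overhead.

```python
# Figures quoted in the three paragraphs above.
RTX_5090_MSRP_USD = 1_999
RTX_5090_RETAIL_RANGE_USD = (2_500, 3_800)
GPUS_PER_NODE = 8
A100_HOURLY_SECOND_TIER_USD = 0.66
A100_HOURLY_TIER_ONE_USD = 4.10


def node_gpu_premium(retail_price: float, msrp: float = RTX_5090_MSRP_USD,
                     gpus: int = GPUS_PER_NODE) -> float:
    """Extra spend on the cards alone for one node, versus buying at MSRP."""
    return (retail_price - msrp) * gpus


def rate_spread(tier_one: float, second_tier: float) -> float:
    """How many times more expensive the tier-one GPU-hour is."""
    return tier_one / second_tier


def bill_ratio(samples_before: int, samples_after: int) -> float:
    """Relative bill after denoising, ASSUMING cost is linear in sample count."""
    return samples_after / samples_before


low, high = (node_gpu_premium(price) for price in RTX_5090_RETAIL_RANGE_USD)
spread = rate_spread(A100_HOURLY_TIER_ONE_USD, A100_HOURLY_SECOND_TIER_USD)
print(f"GPU premium per 8-card node: USD {low:,.0f} to {high:,.0f}")
print(f"Tier-one vs second-tier A100 rate: {spread:.1f}x")
print(f"Bill after denoising: 1/{1 / bill_ratio(4_000, 500):.0f} of the original")
```

On these numbers the card premium alone runs from about USD 4,000 to USD 14,400 per eight-GPU node, the quoted cloud rates differ by roughly a factor of six, and the sample reduction cuts the bill to one eighth. Real jobs carry fixed overhead such as scene loading, so the realized saving will usually be smaller than the pure sample ratio suggests.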

The breakeven still exists. Local render walls make economic sense above roughly 400 rendering hours per month of consistent volume, where the per-hour cost of owned hardware drops below cloud rates. Below that, the cloud is structurally cheaper. We covered the detailed math in our build-versus-cloud total cost analysis and our pricing guide.
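The shape of that breakeven can be sketched with a simple two-cost model: owned hardware carries a fixed monthly cost plus a small hourly running cost, while cloud is purely hourly. Every number below is a hypothetical placeholder, picked only so the output lands on the 400-hour figure for illustration. They are not measured rates from our farm or any provider, and the function names are ours.

```python
import math

# HYPOTHETICAL placeholder inputs, not measured figures.
MONTHLY_FIXED_USD = 600.0   # amortized hardware, hosting, maintenance
OWNED_HOURLY_USD = 0.50     # power and cooling per rendering hour
CLOUD_HOURLY_USD = 2.00     # all-in cloud rate per rendering hour


def breakeven_hours(monthly_fixed: float, owned_hourly: float,
                    cloud_hourly: float) -> float:
    """Monthly hours at which owned and cloud costs are equal."""
    saving_per_hour = cloud_hourly - owned_hourly
    if saving_per_hour <= 0:
        return math.inf  # owned hardware never catches up
    return monthly_fixed / saving_per_hour


def cheaper_option(hours_per_month: float) -> str:
    """Compare total monthly cost under the placeholder inputs above."""
    owned = MONTHLY_FIXED_USD + hours_per_month * OWNED_HOURLY_USD
    cloud = hours_per_month * CLOUD_HOURLY_USD
    return "owned" if owned < cloud else "cloud"


hours = breakeven_hours(MONTHLY_FIXED_USD, OWNED_HOURLY_USD, CLOUD_HOURLY_USD)
print(f"Breakeven: {hours:.0f} rendering hours per month")
for monthly_hours in (200, 600):
    print(f"{monthly_hours} h/month -> {cheaper_option(monthly_hours)}")
```

The useful part is the sensitivity rather than the specific output: lowering the cloud rate or raising the fixed cost of owned hardware both push the breakeven upward, which matches the direction the price trends above are moving it.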

What's new in 2026 is the distribution of studios on either side of that curve. The 400-hour line used to separate "freelance/indie" from "small studio." It now increasingly separates "small studio" from "mid-tier" because more small studios have moved to a cloud-first stack. Mid-tier and large studios still build hybrid infrastructure: owned compute for predictable baseline plus cloud for burst.

The operational lever that matters most is whether the chosen cloud is fully managed or Infrastructure-as-a-Service (IaaS). The pipelines look superficially similar but artist-time-per-job differs materially — covered in our fully-managed vs DIY analysis.

On our farm, we operate 20,000+ CPU cores (Dual Intel Xeon E5-2699 V4 nodes with 96–256 GB RAM) and a dedicated GPU fleet of NVIDIA RTX 5090 cards with 32 GB VRAM each, with Cinema 4D + Redshift, V-Ray, Corona, Arnold, Octane, and Cycles pre-installed and licensed at the platform level. As an official Chaos render partner and an official Maxon partner, licensing for V-Ray, Corona, and Redshift is included rather than user-managed. The point isn't the spec sheet — it's that the operational model (no remote desktop, no installer wrangling, no license-server contention) is what determines whether cloud rendering actually saves artist time, regardless of provider.

Cloud rendering decision framework for 2026 — hours per month vs studio size vs managed-vs-IaaS choice

What These Trends Mean for Studios in 2026

Reading the five trends as a single picture rather than five separate stories changes the strategic conclusions.

Pipeline learning has converged. Three years ago a TD planning team training had to pick between deepening Maya/Houdini, learning Unreal Engine, building USD tooling, or investing in AI workflow integration. In 2026, those are no longer distinct paths — a competent pipeline TD needs working fluency in all four, and the same is true for the senior lookdev/lighting artist whose work now spans offline path-traced finals, real-time lookdev, and AI-assisted lookdev passes.

Compute architecture has become more heterogeneous, not less. The 2020 narrative said GPU rendering would replace CPU rendering. The 2026 reality is that CPU still owns roughly 70% of our render-job mix, while GPU grows share for Redshift, Octane, V-Ray GPU, and Gaussian Splatting work. A hardware plan that assumes one or the other will be undersized somewhere.

The offline render farm has shifted from volume to complexity. Studios that match this with infrastructure planning — cloud burst for path-traced finals while running real-time and AI-accelerated iteration in-house — get the benefit of both layers. Studios that try to force every shot through either pure-offline or pure-real-time end up paying for one model and using the other.

Indie and mid-tier studios have caught up on infrastructure access. The barrier to producing photorealistic, complex VFX work in 2026 is increasingly skill and pipeline maturity, not GPU access. Large facilities can no longer rely on raw compute advantage as a moat, and indie studios can punch above their hardware weight class with the operational discipline to match.

Real-time and offline coexist as different tools, not as a successor pattern. Industry discourse often frames real-time as the eventual replacement for offline; five years of evidence suggests that's not the trajectory. Real-time has expanded the work it owns; offline has expanded the complexity it owns; both are growing. Plan for compositional heterogeneity rather than for transition to one stack.

FAQ

Q: What are the biggest VFX industry trends in 2026? A: Five shifts are reshaping the industry: real-time rendering crossing the final-pixel threshold (Unreal Engine 5 and virtual production), AI moving from denoising into pipeline-interior tasks like generative previs and rotoscoping, Gaussian Splatting entering production VFX tools as a first-class asset type, OpenUSD maturing into the industry's interchange standard, and cloud rendering becoming default infrastructure for indie and mid-tier studios rather than overflow capacity for large ones.

Q: How is AI changing the VFX rendering pipeline in 2026? A: AI in 2026 sits inside the production pipeline at multiple stages. AI denoising (OptiX, Intel Open Image Denoise) is standard across V-Ray, Arnold, Redshift, Cycles, and Karma, reducing required sample counts from roughly 2,000–4,000 to 200–500 for comparable quality. Generative AI tools like Stable Diffusion XL and RunwayML are integrated into pre-production lookdev and client comps. Image-to-3D and Gaussian Splatting reconstruction has graduated from research to shipped production tooling. AI-assisted rotoscoping, matchmove cleanup, and simulation previews are quietly compressing the middle of the pipeline.

Q: Is Unreal Engine 5 used for final pixel rendering in 2026? A: Yes — Unreal Engine 5's Movie Render Queue is delivering final-pixel shots for in-camera VFX on LED volumes, mid-budget broadcast and commercial work, and a growing share of episodic and theatrical content. The technique was pioneered by ILM's StageCraft on The Mandalorian in 2019 and has scaled down-tier since. Photorealistic film-finals work that demands path-traced light transport, deep-EXR multi-layer output, and per-shot iteration cycles still runs primarily on offline renderers like V-Ray, Arnold, Redshift, and Karma — real-time and offline are layering onto different pipeline stages, not replacing each other.

Q: What is Gaussian Splatting and why does it matter for VFX in 2026? A: Gaussian Splatting encodes a 3D scene as millions of small anisotropic Gaussians rather than polygonal geometry plus textures, producing photorealistic visual quality at real-time rendering speeds (100+ FPS in Unreal Engine). By early 2026, Nuke 17 ships native splat support, Houdini 21 includes a technical preview, OpenUSD 26.03 added a first-class schema, and V-Ray 7 can ray-trace splat data alongside traditional geometry. Framestore used 4D Gaussian Splatting to deliver approximately 40 final-pixel shots on Superman (2025). On-set capture-to-asset time has compressed from days to under an hour for many environments.

Q: How is OpenUSD changing studio pipelines in 2026? A: OpenUSD (Universal Scene Description), open-sourced by Pixar in 2016, is consolidating into the industry's interchange standard. The Alliance for OpenUSD (Pixar, Adobe, Apple, Autodesk, and NVIDIA, formed in 2023) set late 2025 as its target for formalizing OpenUSD's foundational specifications. Vulkan support was added to the Hydra Storm renderer in OpenUSD 24.08, extending the platform to a broader device range including Android. Asset interchange between Maya, Houdini, Cinema 4D, Blender, and 3ds Max is materially less fragile than relying on FBX, and cross-renderer interoperability via Hydra render delegates is improving year over year.

Q: Should indie and mid-tier studios use cloud rendering in 2026? A: For most workloads under roughly 400 rendering hours per month of consistent volume, cloud rendering is structurally cheaper than building and maintaining a local render wall — and that line keeps moving upward as cloud GPU prices fall and AI denoising compresses required sample counts. The bigger lever for studio economics is whether the chosen cloud is fully managed (no remote desktop, no installer wrangling, licensing handled at the platform level) or Infrastructure-as-a-Service (IaaS). Above 400 hours of consistent monthly volume, hybrid models — owned compute for baseline plus cloud for burst — typically out-perform either extreme.

Q: How did the 2023 Hollywood strikes affect the VFX industry's 2026 outlook? A: The 2023 WGA and SAG-AFTRA strikes reduced upstream production volume that flowed downstream into VFX vendor pipelines through most of 2024 and parts of 2025. VFX Voice characterizes entry to 2026 as balancing uncertainty and opportunity — pipelines are filling again driven by streaming and theatrical projects, but freelance and contract employment remains uneven, particularly in the UK and Vancouver clusters that absorbed the heaviest layoffs. Several mid-tier facilities pivoted into virtual production services or immersive divisions to diversify revenue, structurally changing the buyer composition that render-farm operators serve.

Q: What rendering hardware should studios plan for in 2026? A: Plan for heterogeneity. CPU rendering still owns the majority of production render-job volume — archviz with V-Ray and Corona, animation with Arnold, and pipelines where memory-per-thread matters more than raw FLOPS — while GPU is growing share for Redshift, Octane, V-Ray GPU, and Gaussian Splatting work. NVIDIA RTX 5090 retail pricing has ranged USD 2,500–3,800 in 2026 due to GDDR7 shortages and AI-compute demand, destabilizing the capex case for self-hosted GPU compute for smaller studios. Cloud GPU pricing on second-tier providers has compressed significantly (industry coverage cites roughly USD 0.66 per A100 GPU-hour versus USD 4.10 on tier-one clouds), narrowing the infrastructure access gap between large and small studios.

About Alice Harper

Blender and V-Ray specialist. Passionate about optimizing render workflows, sharing tips, and educating the 3D community to achieve photorealistic results faster.